Carl Cheng

Architectural Resilience to Foreground-and-Background Adversarial Noise

Mar 23, 2020

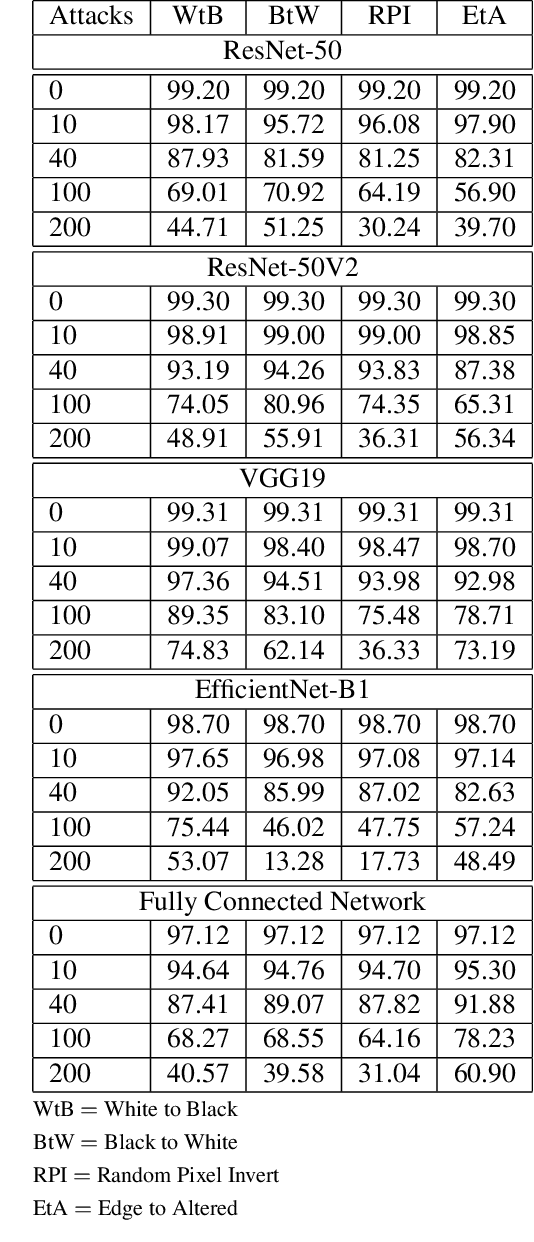

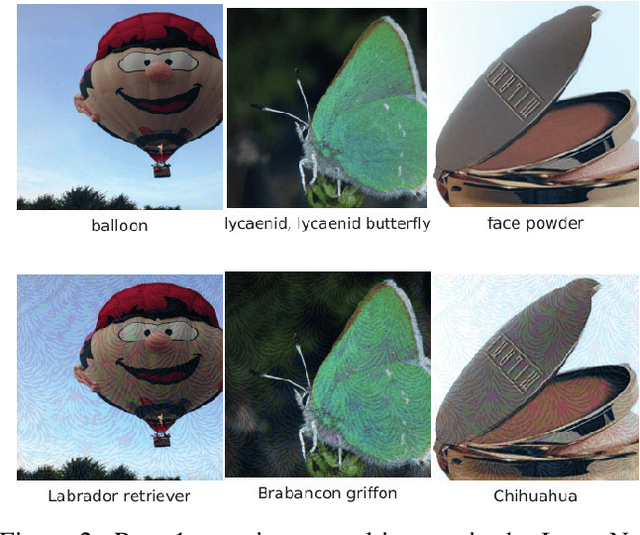

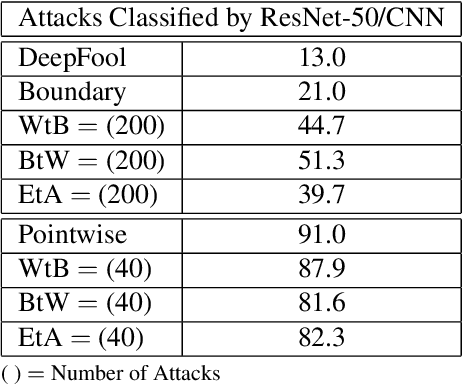

Abstract:Adversarial attacks in the form of imperceptible perturbations of normal images have been extensively studied, and for every new defense methodology created, multiple adversarial attacks are found to counteract it. In particular, a popular style of attack, exemplified in recent years by DeepFool and Carlini-Wagner, relies solely on white-box scenarios in which full access to the predictive model and its weights are required. In this work, we instead propose distinct model-agnostic benchmark perturbations of images in order to investigate the resilience and robustness of different network architectures. Results empirically determine that increasing depth within most types of Convolutional Neural Networks typically improves model resilience towards general attacks, with improvement steadily decreasing as the model becomes deeper. Additionally, we find that a notable difference in adversarial robustness exists between residual architectures with skip connections and non-residual architectures of similar complexity. Our findings provide direction for future understanding of residual connections and depth on network robustness.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge