Cara Su-Yi Leong

Testing learning hypotheses using neural networks by manipulating learning data

Jul 05, 2024

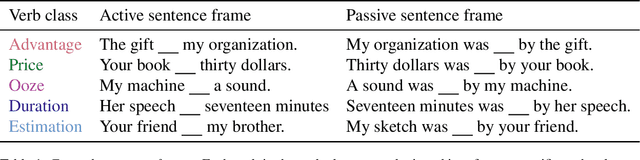

Abstract: Although passivization is productive in English, it is not completely general: some exceptions exist (e.g., *One hour was lasted by the meeting). How do English speakers learn these exceptions to an otherwise general pattern? Using neural network language models as theories of acquisition, we explore the sources of indirect evidence that a learner can leverage to learn whether a verb can passivize. We first characterize English speakers' judgments of exceptions to the passive, confirming that speakers find some verbs more passivizable than others. We then show that a neural network language model can learn restrictions to the passive that are similar to those displayed by humans, suggesting that evidence for these exceptions is available in the linguistic input. We test the causal role of two hypotheses for how the language model learns these restrictions by training models on modified training corpora, which we create by altering the existing training corpora to remove features of the input implicated by each hypothesis. We find that while the frequency with which a verb appears in the passive significantly affects its passivizability, the semantics of the verb does not. This study highlights the utility of altering a language model's training data for answering questions where complete control over a learner's input is vital.
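
The corpus-modification step described above can be illustrated with a minimal sketch. It assumes the training corpus is a plain list of sentences and uses a crude, hypothetical regular expression to spot be-passives of one target verb; the helper names `is_passive_of` and `remove_passives` are illustrative only and are not the authors' pipeline, which would more plausibly rely on a syntactic parser.

```python
import re

# Hypothetical, crude pattern for a "be"-passive such as "was lasted" or
# "was quickly dropped". Illustrative assumption only; not a real parser.
BE_FORMS = r"(?:is|are|was|were|be|been|being)"


def is_passive_of(sentence: str, participle: str) -> bool:
    """Return True if `sentence` looks like a be-passive of `participle`.

    Allows at most one intervening word (e.g. an adverb) between the
    form of "be" and the past participle.
    """
    pattern = rf"\b{BE_FORMS}\s+(?:\w+\s+)?{re.escape(participle)}\b"
    return re.search(pattern, sentence, flags=re.IGNORECASE) is not None


def remove_passives(corpus: list[str], participle: str) -> list[str]:
    """Return a copy of `corpus` with apparent passives of `participle` removed."""
    return [s for s in corpus if not is_passive_of(s, participle)]
```

For instance, filtering `["The meeting lasted one hour.", "One hour was lasted by the meeting."]` for `"lasted"` keeps only the active sentence. A model retrained on such a filtered corpus could then be compared with one trained on the original, which is the kind of controlled contrast the abstract describes.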

Language Models Can Learn Exceptions to Syntactic Rules

Jun 09, 2023

Abstract: Artificial neural networks can generalize productively to novel contexts. Can they also learn exceptions to those productive rules? We explore this question using the case of restrictions on English passivization (e.g., the fact that "The vacation lasted five days" is grammatical, but "*Five days was lasted by the vacation" is not). We collect human acceptability judgments for passive sentences with a range of verbs, and show that the probability distribution defined by GPT-2, a language model, matches the human judgments with high correlation. We also show that the relative acceptability of a verb in the active vs. passive voice is positively correlated with the relative frequency of its occurrence in those voices. These results provide preliminary support for the entrenchment hypothesis, according to which learners track and use the distributional properties of their input to learn negative exceptions to rules. At the same time, this hypothesis fails to explain the magnitude of unpassivizability demonstrated by certain individual verbs, suggesting that other cues to exceptionality are available in the linguistic input.
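
One way to operationalize the correlation between relative acceptability and relative frequency is sketched below, under stated assumptions: each verb receives an acceptability score (for example, the difference between a language model's log-probabilities for a passive sentence and a matched active one) and a frequency score (the log ratio of its passive to active corpus counts), and the two lists are compared with a Pearson correlation. The function names are hypothetical, and the model-scoring step itself is not shown.

```python
import math


def log_ratio(passive: float, active: float) -> float:
    """Log of the passive-to-active ratio; both inputs must be positive."""
    return math.log(passive) - math.log(active)


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length sequences of length >= 2")
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    # Taking one square root of the product keeps exact cases exact.
    return cov / math.sqrt(sxx * syy)
```

On toy inputs that are perfectly linearly related, such as `pearson([1, 2, 3], [2, 4, 6])`, the result is approximately 1.0; these values are illustrative and are not data from the study.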
