Bulent Abali

EFloat: Entropy-coded Floating Point Format for Deep Learning

Feb 04, 2021

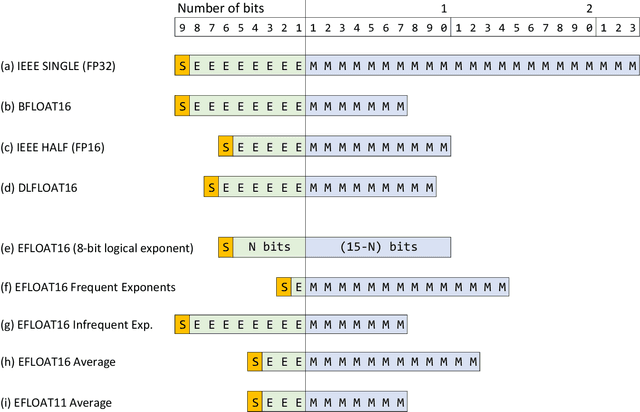

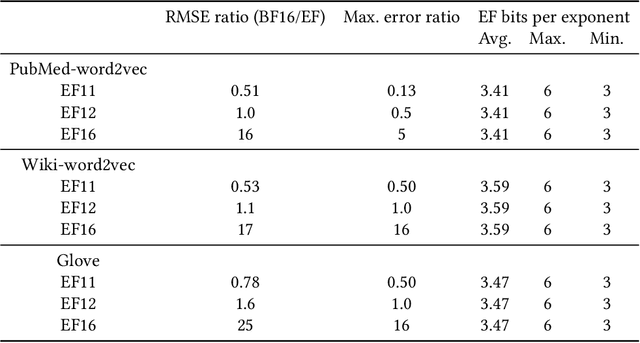

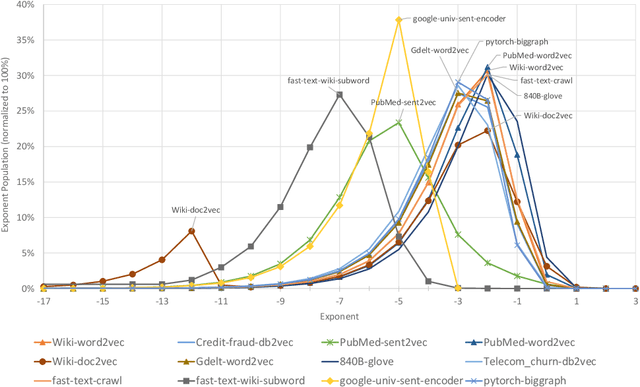

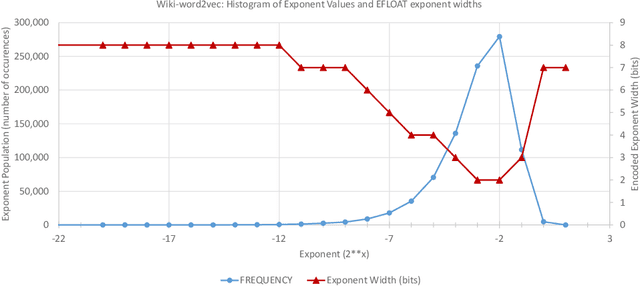

Abstract:We describe the EFloat floating-point number format with 4 to 6 additional bits of precision and a wider exponent range than the existing floating point (FP) formats of any width including FP32, BFloat16, IEEE-Half precision, DLFloat, TensorFloat, and 8-bit floats. In a large class of deep learning models we observe that FP exponent values tend to cluster around few unique values which presents entropy encoding opportunities. The EFloat format encodes frequent exponent values and signs with Huffman codes to minimize the average exponent field width. Saved bits then become available to the mantissa increasing the EFloat numeric precision on average by 4 to 6 bits compared to other FP formats of equal width. The proposed encoding concept may be beneficial to low-precision formats including 8-bit floats. Training deep learning models with low precision arithmetic is challenging. EFloat, with its increased precision may provide an opportunity for those tasks as well. We currently use the EFloat format for compressing and saving memory used in large NLP deep learning models. A potential hardware implementation for improving PCIe and memory bandwidth limitations of AI accelerators is also discussed.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge