Benedikt Schulz

Postprocessing of Ensemble Weather Forecasts Using Permutation-invariant Neural Networks

Sep 08, 2023

Abstract: Statistical postprocessing is used to translate ensembles of raw numerical weather forecasts into reliable probabilistic forecast distributions. In this study, we examine the use of permutation-invariant neural networks for this task. In contrast to previous approaches, which often operate on ensemble summary statistics and dismiss details of the ensemble distribution, we propose networks which treat forecast ensembles as a set of unordered member forecasts and learn link functions that are by design invariant to permutations of the member ordering. We evaluate the quality of the obtained forecast distributions in terms of calibration and sharpness, and compare the models against classical and neural network-based benchmark methods. In case studies addressing the postprocessing of surface temperature and wind gust forecasts, we demonstrate state-of-the-art prediction quality. To deepen the understanding of the learned inference process, we further propose a permutation-based importance analysis for ensemble-valued predictors, which highlights specific aspects of the ensemble forecast that are considered important by the trained postprocessing models. Our results suggest that most of the relevant information is contained in few ensemble-internal degrees of freedom, which may impact the design of future ensemble forecasting and postprocessing systems.
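As a rough illustration of what "invariant to permutations of the member ordering" can mean in practice, the following minimal sketch applies a shared encoder to every ensemble member, pools the encodings by averaging, and decodes the pooled vector into the parameters of a normal forecast distribution. This is one common way to build a permutation-invariant model and is not taken from the paper; the layer sizes, the random weights (standing in for trained parameters), and the function name `postprocess` are assumptions made for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for illustration.
N_FEATURES, N_HIDDEN = 3, 8

# Random weights stand in for trained parameters (assumption).
W_enc = rng.normal(size=(N_FEATURES, N_HIDDEN))
b_enc = rng.normal(size=N_HIDDEN)
W_dec = rng.normal(size=(N_HIDDEN, 2))
b_dec = rng.normal(size=2)


def postprocess(ensemble):
    """Map an (n_members, n_features) ensemble to (mu, sigma) of a normal forecast."""
    # The same encoder is applied to each member independently.
    encoded = np.tanh(ensemble @ W_enc + b_enc)
    # Mean pooling over members removes any dependence on member order.
    pooled = encoded.mean(axis=0)
    # The decoder maps the pooled representation to distribution parameters.
    mu, raw_sigma = pooled @ W_dec + b_dec
    sigma = np.log1p(np.exp(raw_sigma))  # softplus keeps the scale positive
    return mu, sigma


# A synthetic 20-member ensemble and a reordered copy of it.
ensemble = rng.normal(size=(20, N_FEATURES))
shuffled = ensemble[rng.permutation(20)]

print(postprocess(ensemble))
print(postprocess(shuffled))
```

Both calls print the same parameters up to floating-point rounding, because the pooling step sees the members as a set rather than as an ordered sequence.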

Aggregating distribution forecasts from deep ensembles

Apr 05, 2022

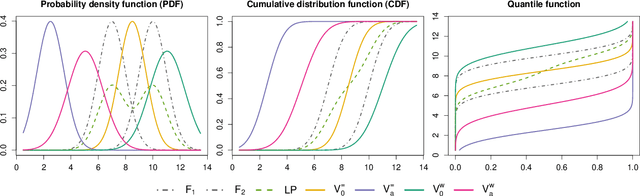

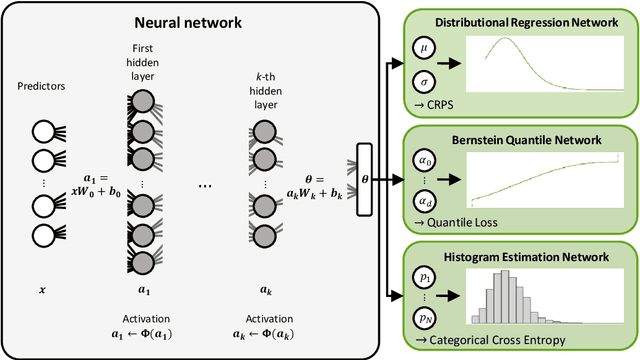

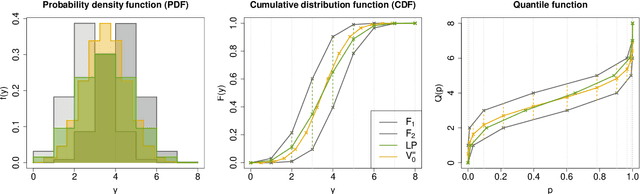

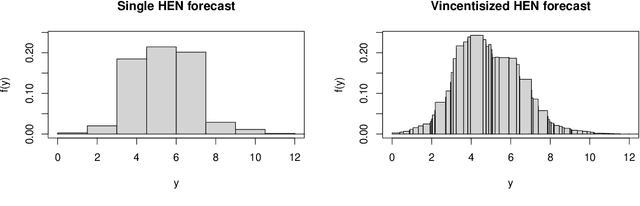

Abstract: The importance of accurately quantifying forecast uncertainty has motivated much recent research on probabilistic forecasting. In particular, a variety of deep learning approaches has been proposed, with forecast distributions obtained as output of neural networks. These neural network-based methods are often used in the form of an ensemble based on multiple model runs from different random initializations, resulting in a collection of forecast distributions that need to be aggregated into a final probabilistic prediction. With the aim of consolidating findings from the machine learning literature on ensemble methods and the statistical literature on forecast combination, we address the question of how to aggregate distribution forecasts based on such deep ensembles. Using theoretical arguments, simulation experiments and a case study on wind gust forecasting, we systematically compare probability- and quantile-based aggregation methods for three neural network-based approaches with different forecast distribution types as output. Our results show that combining forecast distributions can substantially improve the predictive performance. We propose a general quantile aggregation framework for deep ensembles that shows superior performance compared to a linear combination of the forecast densities. Finally, we investigate the effects of the ensemble size and derive recommendations for aggregating distribution forecasts from deep ensembles in practice.
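The difference between probability-based and quantile-based aggregation can be made concrete with a small sketch. It assumes that each network run outputs a normal distribution and that all runs receive equal weight; the parameter values are invented for illustration, and the code shows plain equally weighted quantile averaging, not the general framework proposed in the paper. For normal components, averaging quantiles yields another normal distribution with the averaged mean and averaged standard deviation, whereas the linear pool is a mixture whose spread is at least as large.

```python
from statistics import NormalDist

import numpy as np

# Hypothetical normal forecasts from five network runs (assumed values).
mus = np.array([9.5, 10.2, 10.0, 10.8, 9.9])
sigmas = np.array([1.8, 2.1, 2.0, 2.4, 1.9])


def quantile_average(p):
    """Quantile-based aggregation: average the member quantiles at level p."""
    qs = [NormalDist(m, s).inv_cdf(p) for m, s in zip(mus, sigmas)]
    return float(np.mean(qs))


def linear_pool_cdf(x):
    """Probability-based aggregation: average the member CDFs at threshold x."""
    ps = [NormalDist(m, s).cdf(x) for m, s in zip(mus, sigmas)]
    return float(np.mean(ps))


# Standard deviation of the quantile-averaged forecast (again a normal distribution).
sd_quantile = float(sigmas.mean())
# Standard deviation of the equally weighted mixture (linear pool).
sd_linear_pool = float(np.sqrt(np.mean(sigmas**2 + mus**2) - mus.mean() ** 2))

print("quantile averaging, 90% quantile:", quantile_average(0.9))
print("linear pool, CDF at the mean:", linear_pool_cdf(float(mus.mean())))
print("spread (quantile averaging vs. linear pool):", sd_quantile, sd_linear_pool)
```

Both aggregates share the same mean, so the comparison isolates the effect on spread: whenever the member means differ, the linear pool is more dispersed than the quantile average.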

Machine learning methods for postprocessing ensemble forecasts of wind gusts: A systematic comparison

Jun 17, 2021

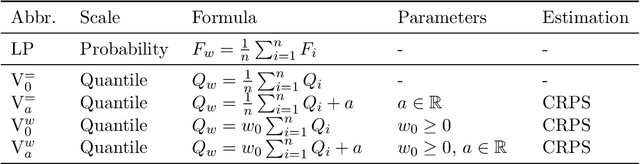

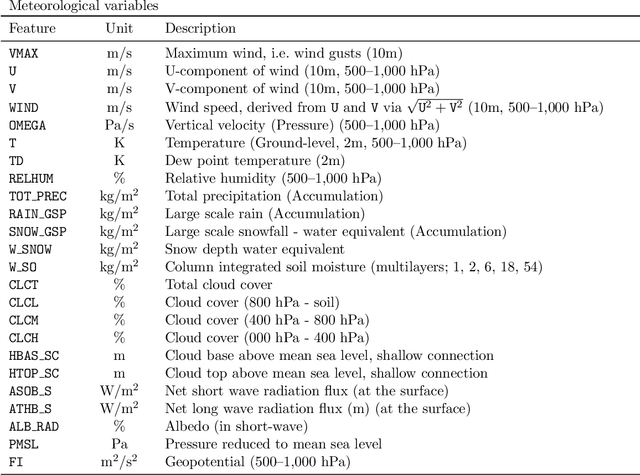

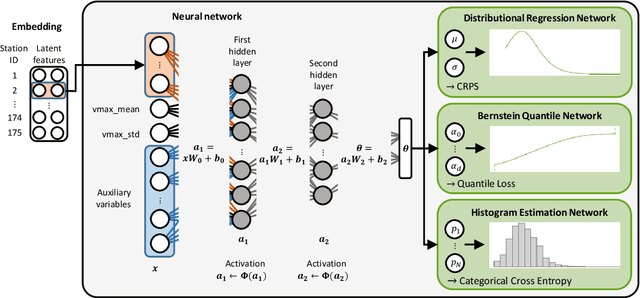

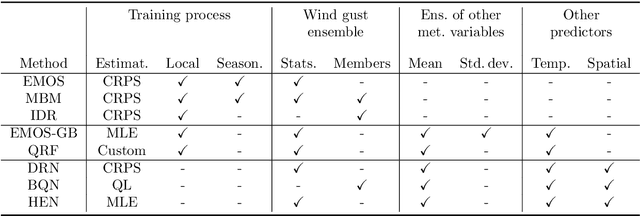

Abstract: Postprocessing ensemble weather predictions to correct systematic errors has become a standard practice in research and operations. However, only a few recent studies have focused on ensemble postprocessing of wind gust forecasts, despite its importance for severe weather warnings. Here, we provide a comprehensive review and systematic comparison of eight statistical and machine learning methods for probabilistic wind gust forecasting via ensemble postprocessing, which can be divided into three groups: state-of-the-art postprocessing techniques from statistics (ensemble model output statistics (EMOS), member-by-member postprocessing, isotonic distributional regression), established machine learning methods (gradient-boosting extended EMOS, quantile regression forests) and neural network-based approaches (distributional regression network, Bernstein quantile network, histogram estimation network). The methods are systematically compared using six years of data from a high-resolution, convection-permitting ensemble prediction system that was run operationally at the German weather service, and hourly observations at 175 surface weather stations in Germany. While all postprocessing methods yield calibrated forecasts and are able to correct the systematic errors of the raw ensemble predictions, incorporating information from additional meteorological predictor variables beyond wind gusts leads to significant improvements in forecast skill. In particular, we propose a flexible framework of locally adaptive neural networks with different probabilistic forecast types as output, which not only significantly outperform all benchmark postprocessing methods but also learn physically consistent relations associated with the diurnal cycle, especially the evening transition of the planetary boundary layer.
