Baobing Zhang

GETA-3DGS: Automatic Joint Structured Pruning and Quantization for 3D Gaussian Splatting

May 03, 2026

Abstract: 3D Gaussian splatting (3DGS) is a state-of-the-art representation for real-time photorealistic novel-view synthesis, yet a single high-fidelity scene typically occupies hundreds of megabytes to several gigabytes, exceeding the budgets of mobile, immersive, and volumetric video platforms. Existing 3DGS compression methods (e.g., HAC++, FlexGaussian, LP-3DGS) treat pruning, quantization, and entropy coding as separate stages and rely on hand-tuned heuristics (opacity thresholds, fixed bit-widths, SH truncation), limiting cross-scene generalization and preventing users from specifying a target rate or quality budget. We propose GETA-3DGS, to our knowledge the first end-to-end automatic joint structured pruning and quantization framework for 3DGS. Building on GETA for joint pruning-quantization of deep networks, we contribute: (i) a 3DGS-aware quantization-aware dependency graph (QADG) treating each Gaussian primitive as a group with five attribute sub-nodes and degree-aware SH sub-nodes; (ii) a render-aware saliency fusing transmittance-weighted contribution, screen-space gradient, and pixel coverage into a Gaussian-level importance score; and (iii) a heterogeneous per-attribute mixed-precision scheme co-optimized with structural sparsity under a projected partial saliency-guided (PPSG) descent guarantee. On Mip-NeRF 360, Tanks and Temples, and Deep Blending, GETA-3DGS operates directly on raw Gaussian primitives rather than a post-hoc anchor representation, delivering ~5x storage reduction over Vanilla 3DGS with no per-scene thresholds. Bit-width policy is the dominant rate-distortion lever: a uniform 6-bit cap costs up to -6.74 dB on view-dependent scenes versus our heterogeneous allocation, matching an information-theoretic reverse-water-filling analysis we develop. GETA-3DGS is complementary to existing codecs: entropy coding (HAC++, CompGS) is downstream, so the two can be composed.
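The abstract ties heterogeneous bit allocation to a reverse-water-filling analysis. As background only, the following is a minimal sketch of the classic textbook reverse water-filling result for independent Gaussian components under squared-error distortion. It is not the paper's analysis: the function name `reverse_water_filling`, the example variances, and any mapping from components to 3DGS attributes are assumptions made for illustration.

```python
import math


def reverse_water_filling(variances, total_distortion):
    """Textbook reverse water-filling for independent Gaussian components.

    Finds the water level theta such that sum(min(theta, var_i)) equals
    total_distortion, then returns (theta, rates), where rates[i] is the
    rate in bits per sample assigned to component i.
    Hypothetical helper for illustration; not taken from GETA-3DGS.
    """
    if any(v <= 0 for v in variances):
        raise ValueError("variances must be positive")
    if not 0 < total_distortion <= sum(variances):
        raise ValueError("total_distortion must lie in (0, sum(variances)]")

    # Total distortion is monotone in theta, so bisection finds the level.
    lo, hi = 0.0, max(variances)
    for _ in range(200):
        theta = (lo + hi) / 2
        if sum(min(theta, v) for v in variances) > total_distortion:
            hi = theta
        else:
            lo = theta
    theta = (lo + hi) / 2

    # Components with variance below the water level receive zero bits.
    rates = [max(0.0, 0.5 * math.log2(v / theta)) for v in variances]
    return theta, rates


if __name__ == "__main__":
    # Three components with very different variances (made-up numbers).
    theta, rates = reverse_water_filling([4.0, 1.0, 0.25], 0.75)
    print(theta, rates)
```

With these made-up variances the water level comes out at about 0.25, so the components receive roughly 2, 1, and 0 bits respectively: high-variance components get more bits, and components at or below the water level get none. This is the general intuition behind preferring a heterogeneous allocation over a uniform bit-width cap.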

An Automated Machine Learning Framework for Surgical Suturing Action Detection under Class Imbalance

Feb 10, 2025

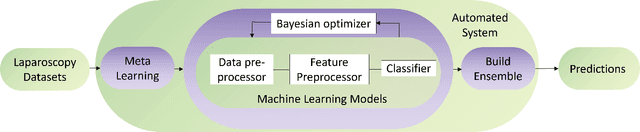

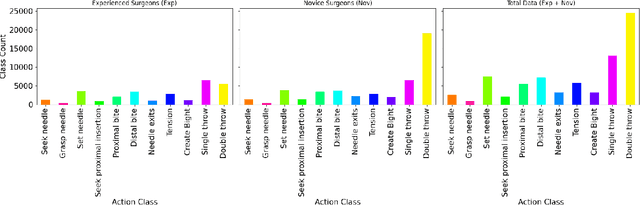

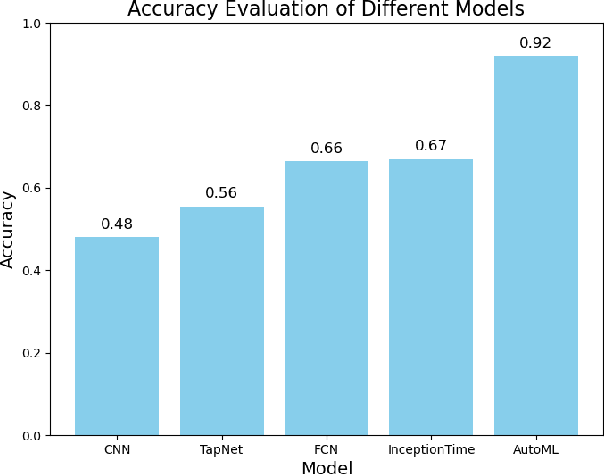

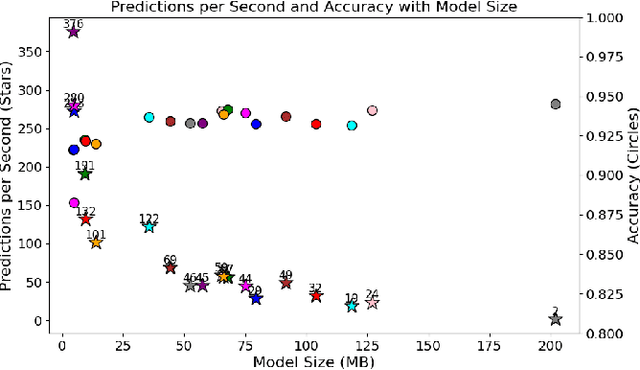

Abstract: In laparoscopic surgical training and evaluation, real-time detection of surgical actions with interpretable outputs is crucial for automated instructional feedback and skill development. Such a capability would enable the development of machine-guided training systems. This paper presents a rapid deployment approach utilizing automated machine learning methods, based on surgical action data collected from both experienced and trainee surgeons. The proposed approach effectively tackles the challenge of highly imbalanced class distributions, ensuring robust predictions across varying skill levels of surgeons. Additionally, our method partially incorporates model transparency, addressing the reliability requirements of medical applications. Compared to deep learning approaches, traditional machine learning models not only facilitate rapid deployment but also offer significant advantages in interpretability. Through experiments, this study demonstrates the potential of this approach to provide quick, reliable, and effective real-time detection in surgical training environments.
