Baktash Ansari

ToxiTwitch: Toward Emote-Aware Hybrid Moderation for Live Streaming Platforms

Jan 22, 2026Abstract:The rapid growth of live-streaming platforms such as Twitch has introduced complex challenges in moderating toxic behavior. Traditional moderation approaches, such as human annotation and keyword-based filtering, have demonstrated utility, but human moderators on Twitch constantly struggle to scale effectively in the fast-paced, high-volume, and context-rich chat environment of the platform while also facing harassment themselves. Recent advances in large language models (LLMs), such as DeepSeek-R1-Distill and Llama-3-8B-Instruct, offer new opportunities for toxicity detection, especially in understanding nuanced, multimodal communication involving emotes. In this work, we present an exploratory comparison of toxicity detection approaches tailored to Twitch. Our analysis reveals that incorporating emotes improves the detection of toxic behavior. To this end, we introduce ToxiTwitch, a hybrid model that combines LLM-generated embeddings of text and emotes with traditional machine learning classifiers, including Random Forest and SVM. In our case study, the proposed hybrid approach reaches up to 80 percent accuracy under channel-specific training (with 13 percent improvement over BERT and F1-score of 76 percent). This work is an exploratory study intended to surface challenges and limits of emote-aware toxicity detection on Twitch.

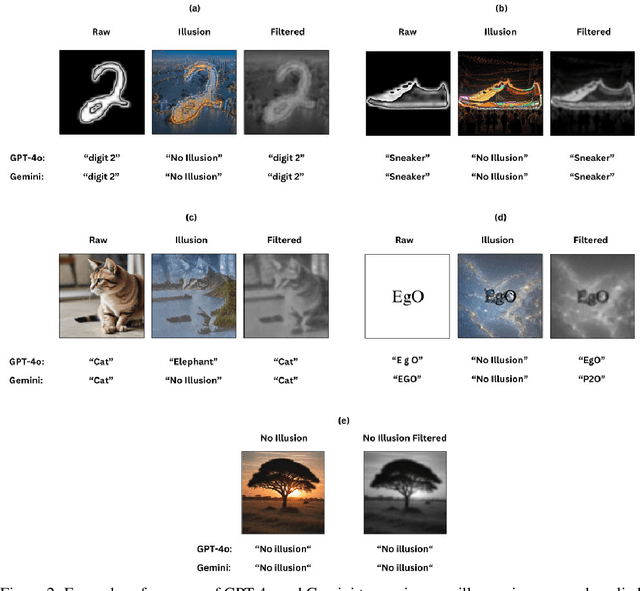

Illusory VQA: Benchmarking and Enhancing Multimodal Models on Visual Illusions

Dec 11, 2024

Abstract:In recent years, Visual Question Answering (VQA) has made significant strides, particularly with the advent of multimodal models that integrate vision and language understanding. However, existing VQA datasets often overlook the complexities introduced by image illusions, which pose unique challenges for both human perception and model interpretation. In this study, we introduce a novel task called Illusory VQA, along with four specialized datasets: IllusionMNIST, IllusionFashionMNIST, IllusionAnimals, and IllusionChar. These datasets are designed to evaluate the performance of state-of-the-art multimodal models in recognizing and interpreting visual illusions. We assess the zero-shot performance of various models, fine-tune selected models on our datasets, and propose a simple yet effective solution for illusion detection using Gaussian and blur low-pass filters. We show that this method increases the performance of models significantly and in the case of BLIP-2 on IllusionAnimals without any fine-tuning, it outperforms humans. Our findings highlight the disparity between human and model perception of illusions and demonstrate that fine-tuning and specific preprocessing techniques can significantly enhance model robustness. This work contributes to the development of more human-like visual understanding in multimodal models and suggests future directions for adapting filters using learnable parameters.

Bilingual Sexism Classification: Fine-Tuned XLM-RoBERTa and GPT-3.5 Few-Shot Learning

Jun 11, 2024Abstract:Sexism in online content is a pervasive issue that necessitates effective classification techniques to mitigate its harmful impact. Online platforms often have sexist comments and posts that create a hostile environment, especially for women and minority groups. This content not only spreads harmful stereotypes but also causes emotional harm. Reliable methods are essential to find and remove sexist content, making online spaces safer and more welcoming. Therefore, the sEXism Identification in Social neTworks (EXIST) challenge addresses this issue at CLEF 2024. This study aims to improve sexism identification in bilingual contexts (English and Spanish) by leveraging natural language processing models. The tasks are to determine whether a text is sexist and what the source intention behind it is. We fine-tuned the XLM-RoBERTa model and separately used GPT-3.5 with few-shot learning prompts to classify sexist content. The XLM-RoBERTa model exhibited robust performance in handling complex linguistic structures, while GPT-3.5's few-shot learning capability allowed for rapid adaptation to new data with minimal labeled examples. Our approach using XLM-RoBERTa achieved 4th place in the soft-soft evaluation of Task 1 (sexism identification). For Task 2 (source intention), we achieved 2nd place in the soft-soft evaluation.

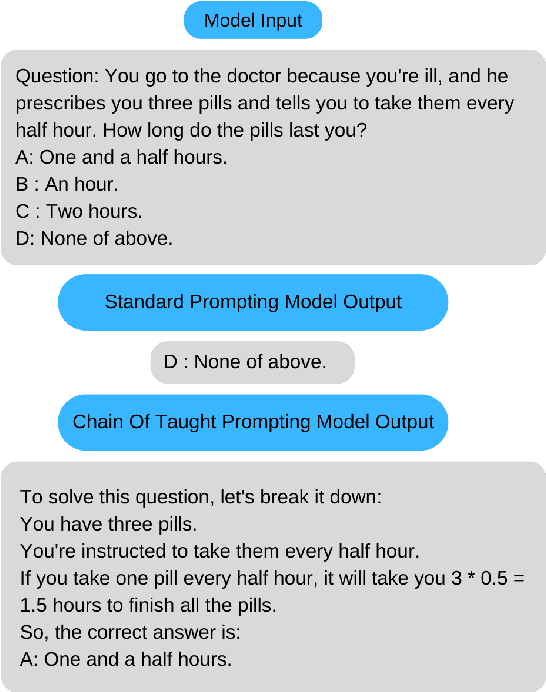

BAMO at SemEval-2024 Task 9: BRAINTEASER: A Novel Task Defying Common Sense

Jun 07, 2024

Abstract:This paper outlines our approach to SemEval 2024 Task 9, BRAINTEASER: A Novel Task Defying Common Sense. The task aims to evaluate the ability of language models to think creatively. The dataset comprises multi-choice questions that challenge models to think "outside of the box". We fine-tune 2 models, BERT and RoBERTa Large. Next, we employ a Chain of Thought (CoT) zero-shot prompting approach with 6 large language models, such as GPT-3.5, Mixtral, and Llama2. Finally, we utilize ReConcile, a technique that employs a "round table conference" approach with multiple agents for zero-shot learning, to generate consensus answers among 3 selected language models. Our best method achieves an overall accuracy of 85 percent on the sentence puzzles subtask.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge