Avinash Agarwal

Nishpaksh: TEC Standard-Compliant Framework for Fairness Auditing and Certification of AI Models

Jan 23, 2026Abstract:The growing reliance on Artificial Intelligence (AI) models in high-stakes decision-making systems, particularly within emerging telecom and 6G applications, underscores the urgent need for transparent and standardized fairness assessment frameworks. While global toolkits such as IBM AI Fairness 360 and Microsoft Fairlearn have advanced bias detection, they often lack alignment with region-specific regulatory requirements and national priorities. To address this gap, we propose Nishpaksh, an indigenous fairness evaluation tool that operationalizes the Telecommunication Engineering Centre (TEC) Standard for the Evaluation and Rating of Artificial Intelligence Systems. Nishpaksh integrates survey-based risk quantification, contextual threshold determination, and quantitative fairness evaluation into a unified, web-based dashboard. The tool employs vectorized computation, reactive state management, and certification-ready reporting to enable reproducible, audit-grade assessments, thereby addressing a critical post-standardization implementation need. Experimental validation on the COMPAS dataset demonstrates Nishpaksh's effectiveness in identifying attribute-specific bias and generating standardized fairness scores compliant with the TEC framework. The system bridges the gap between research-oriented fairness methodologies and regulatory AI governance in India, marking a significant step toward responsible and auditable AI deployment within critical infrastructure like telecommunications.

Incorporating AI Incident Reporting into Telecommunications Law and Policy: Insights from India

Sep 11, 2025Abstract:The integration of artificial intelligence (AI) into telecommunications infrastructure introduces novel risks, such as algorithmic bias and unpredictable system behavior, that fall outside the scope of traditional cybersecurity and data protection frameworks. This paper introduces a precise definition and a detailed typology of telecommunications AI incidents, establishing them as a distinct category of risk that extends beyond conventional cybersecurity and data protection breaches. It argues for their recognition as a distinct regulatory concern. Using India as a case study for jurisdictions that lack a horizontal AI law, the paper analyzes the country's key digital regulations. The analysis reveals that India's existing legal instruments, including the Telecommunications Act, 2023, the CERT-In Rules, and the Digital Personal Data Protection Act, 2023, focus on cybersecurity and data breaches, creating a significant regulatory gap for AI-specific operational incidents, such as performance degradation and algorithmic bias. The paper also examines structural barriers to disclosure and the limitations of existing AI incident repositories. Based on these findings, the paper proposes targeted policy recommendations centered on integrating AI incident reporting into India's existing telecom governance. Key proposals include mandating reporting for high-risk AI failures, designating an existing government body as a nodal agency to manage incident data, and developing standardized reporting frameworks. These recommendations aim to enhance regulatory clarity and strengthen long-term resilience, offering a pragmatic and replicable blueprint for other nations seeking to govern AI risks within their existing sectoral frameworks.

Enhancements for Developing a Comprehensive AI Fairness Assessment Standard

Apr 10, 2025Abstract:As AI systems increasingly influence critical sectors like telecommunications, finance, healthcare, and public services, ensuring fairness in decision-making is essential to prevent biased or unjust outcomes that disproportionately affect vulnerable entities or result in adverse impacts. This need is particularly pressing as the industry approaches the 6G era, where AI will drive complex functions like autonomous network management and hyper-personalized services. The TEC Standard for Fairness Assessment and Rating of AI Systems provides guidelines for evaluating fairness in AI, focusing primarily on tabular data and supervised learning models. However, as AI applications diversify, this standard requires enhancement to strengthen its impact and broaden its applicability. This paper proposes an expansion of the TEC Standard to include fairness assessments for images, unstructured text, and generative AI, including large language models, ensuring a more comprehensive approach that keeps pace with evolving AI technologies. By incorporating these dimensions, the enhanced framework will promote responsible and trustworthy AI deployment across various sectors.

* 5 pages. Published in 2025 17th International Conference on COMmunication Systems and NETworks (COMSNETS). Access: https://ieeexplore.ieee.org/abstract/document/10885551

Standardised schema and taxonomy for AI incident databases in critical digital infrastructure

Jan 28, 2025Abstract:The rapid deployment of Artificial Intelligence (AI) in critical digital infrastructure introduces significant risks, necessitating a robust framework for systematically collecting AI incident data to prevent future incidents. Existing databases lack the granularity as well as the standardized structure required for consistent data collection and analysis, impeding effective incident management. This work proposes a standardized schema and taxonomy for AI incident databases, addressing these challenges by enabling detailed and structured documentation of AI incidents across sectors. Key contributions include developing a unified schema, introducing new fields such as incident severity, causes, and harms caused, and proposing a taxonomy for classifying AI incidents in critical digital infrastructure. The proposed solution facilitates more effective incident data collection and analysis, thus supporting evidence-based policymaking, enhancing industry safety measures, and promoting transparency. This work lays the foundation for a coordinated global response to AI incidents, ensuring trust, safety, and accountability in using AI across regions.

A Seven-Layer Model for Standardising AI Fairness Assessment

Dec 21, 2022Abstract:Problem statement: Standardisation of AI fairness rules and benchmarks is challenging because AI fairness and other ethical requirements depend on multiple factors such as context, use case, type of the AI system, and so on. In this paper, we elaborate that the AI system is prone to biases at every stage of its lifecycle, from inception to its usage, and that all stages require due attention for mitigating AI bias. We need a standardised approach to handle AI fairness at every stage. Gap analysis: While AI fairness is a hot research topic, a holistic strategy for AI fairness is generally missing. Most researchers focus only on a few facets of AI model-building. Peer review shows excessive focus on biases in the datasets, fairness metrics, and algorithmic bias. In the process, other aspects affecting AI fairness get ignored. The solution proposed: We propose a comprehensive approach in the form of a novel seven-layer model, inspired by the Open System Interconnection (OSI) model, to standardise AI fairness handling. Despite the differences in the various aspects, most AI systems have similar model-building stages. The proposed model splits the AI system lifecycle into seven abstraction layers, each corresponding to a well-defined AI model-building or usage stage. We also provide checklists for each layer and deliberate on potential sources of bias in each layer and their mitigation methodologies. This work will facilitate layer-wise standardisation of AI fairness rules and benchmarking parameters.

Fairness Score and Process Standardization: Framework for Fairness Certification in Artificial Intelligence Systems

Jan 10, 2022

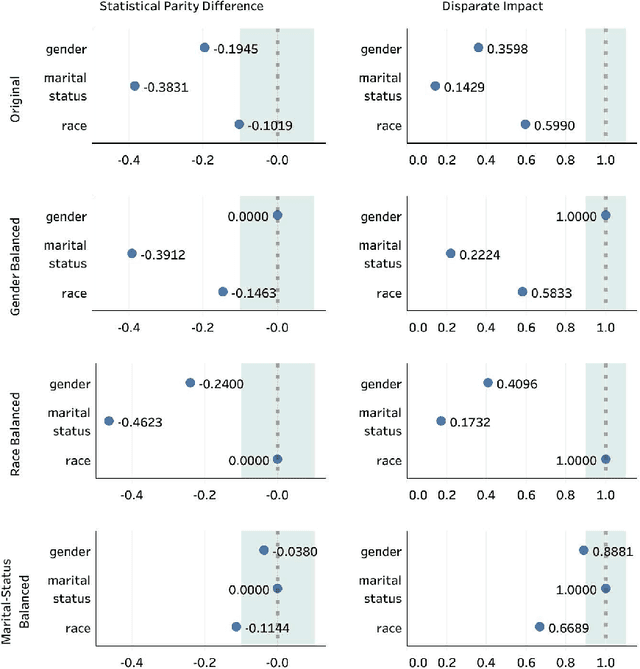

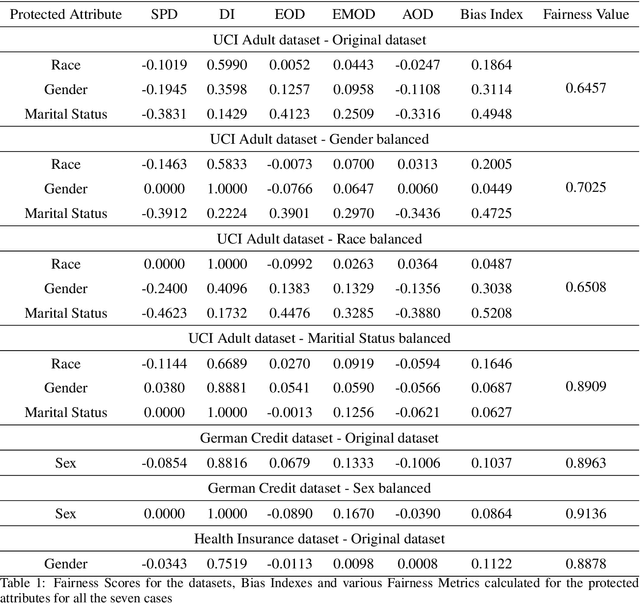

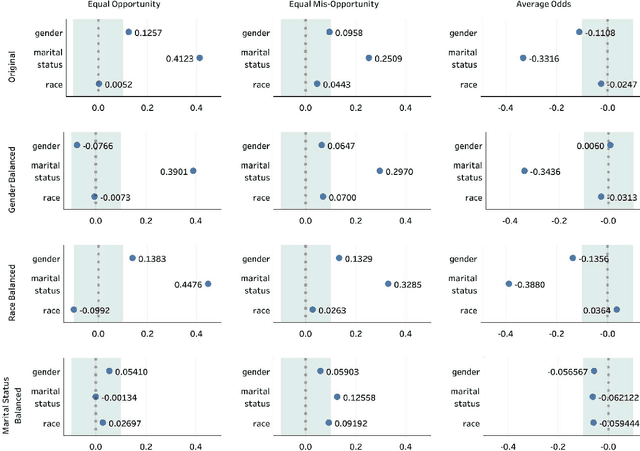

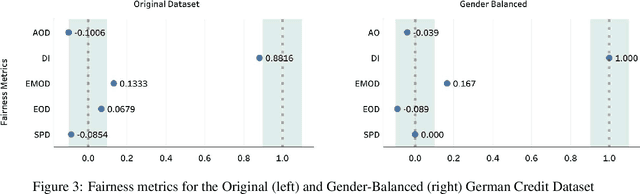

Abstract:Decisions made by various Artificial Intelligence (AI) systems greatly influence our day-to-day lives. With the increasing use of AI systems, it becomes crucial to know that they are fair, identify the underlying biases in their decision-making, and create a standardized framework to ascertain their fairness. In this paper, we propose a novel Fairness Score to measure the fairness of a data-driven AI system and a Standard Operating Procedure (SOP) for issuing Fairness Certification for such systems. Fairness Score and audit process standardization will ensure quality, reduce ambiguity, enable comparison and improve the trustworthiness of the AI systems. It will also provide a framework to operationalise the concept of fairness and facilitate the commercial deployment of such systems. Furthermore, a Fairness Certificate issued by a designated third-party auditing agency following the standardized process would boost the conviction of the organizations in the AI systems that they intend to deploy. The Bias Index proposed in this paper also reveals comparative bias amongst the various protected attributes within the dataset. To substantiate the proposed framework, we iteratively train a model on biased and unbiased data using multiple datasets and check that the Fairness Score and the proposed process correctly identify the biases and judge the fairness.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge