Ankit Kumar Verma

Comparative Analysis of Resource-Efficient CNN Architectures for Brain Tumor Classification

Nov 23, 2024

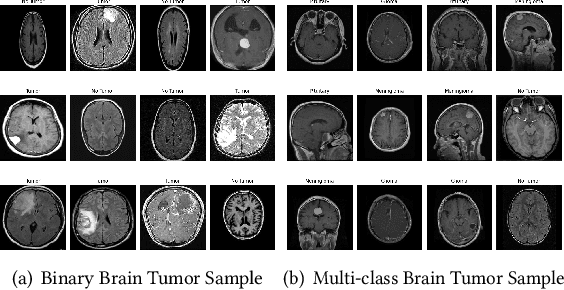

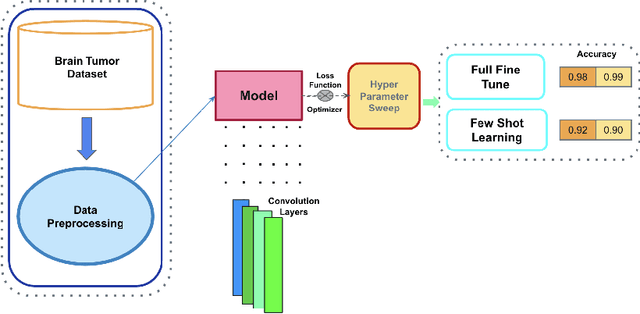

Abstract: Accurate brain tumor classification in MRI images is critical for timely diagnosis and treatment planning. While deep learning models such as ResNet-18 and VGG-16 have shown high accuracy, they often come with increased complexity and computational demands. This study presents a comparative analysis of a simple yet effective custom Convolutional Neural Network (CNN) architecture and the pre-trained ResNet-18 and VGG-16 models for brain tumor classification using two publicly available datasets: Br35H :: Brain Tumor Detection 2020 and Brain Tumor MRI Dataset. The custom CNN architecture, despite its lower complexity, demonstrates performance competitive with the pre-trained ResNet-18 and VGG-16 models. In binary classification tasks, the custom CNN achieved an accuracy of 98.67% on the Br35H dataset and 99.62% on the Brain Tumor MRI Dataset. For multi-class classification, the custom CNN, with a slight architectural modification, achieved an accuracy of 98.09% on the Brain Tumor MRI Dataset. ResNet-18 and VGG-16 also maintained high performance, but the custom CNNs provided a more computationally efficient alternative. Additionally, the custom CNNs were evaluated using few-shot learning (0, 5, 10, 15, 20, 40, and 80 shots) to assess their robustness, with accuracy improving notably as the number of shots increased. This study highlights the potential of well-designed, less complex CNN architectures as effective and computationally efficient alternatives to deeper, pre-trained models for medical imaging tasks, including brain tumor classification, and encourages further exploration in this direction.
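To make the "lower complexity" claim concrete, the sketch below counts trainable parameters for the standard ImageNet VGG-16 configuration and for a small CNN. The small network (`SMALL_CONV`, `SMALL_FC`) is purely hypothetical and is not the architecture from the paper, which the abstract does not specify; the paper's VGG-16 would also likely use a different classification head than the 1000-class one assumed here.

```python
def conv_params(c_in, c_out, k=3):
    """Weights plus biases of a k x k convolution layer."""
    return c_in * c_out * k * k + c_out


def linear_params(n_in, n_out):
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out


def count_params(conv_channels, fc_sizes, in_channels=3):
    """Sum parameters over a stack of 3x3 convs followed by dense layers."""
    total = 0
    c_in = in_channels
    for c_out in conv_channels:
        total += conv_params(c_in, c_out)
        c_in = c_out
    for n_in, n_out in zip(fc_sizes, fc_sizes[1:]):
        total += linear_params(n_in, n_out)
    return total


# Standard VGG-16: 13 conv layers, then 3 dense layers on a 512 x 7 x 7 feature map.
VGG16_CONV = [64, 64, 128, 128, 256, 256, 256, 512, 512, 512, 512, 512, 512]
VGG16_FC = [512 * 7 * 7, 4096, 4096, 1000]

# Hypothetical small CNN (an assumption for illustration only): 3 conv layers,
# a 224 x 224 input halved by pooling after each conv (28 x 28), 2 output classes.
SMALL_CONV = [16, 32, 64]
SMALL_FC = [64 * 28 * 28, 128, 2]

vgg16_total = count_params(VGG16_CONV, VGG16_FC)
small_total = count_params(SMALL_CONV, SMALL_FC)

print(f"VGG-16 parameters:    {vgg16_total:,}")
print(f"Small CNN parameters: {small_total:,}")
print(f"Ratio: {vgg16_total / small_total:.1f}x")
```

Parameter count is only a rough proxy for computational cost; FLOPs, memory, and inference latency would need to be measured separately.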

Auditing Gender Analyzers on Text Data

Oct 09, 2023

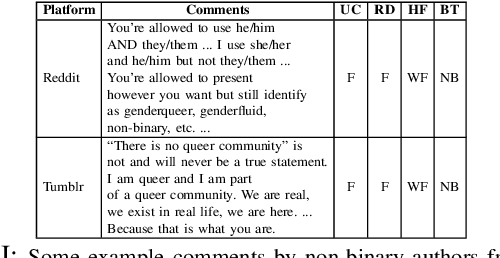

Abstract: AI models have become extremely popular and accessible to the general public. However, they are continuously under scrutiny due to their demonstrable biases toward various sections of society, such as people of color and non-binary people. In this study, we audit three existing gender analyzers (uClassify, Readable, and HackerFactor) for biases against non-binary individuals. These tools are designed to predict only the cisgender binary labels, which leads to discrimination against non-binary members of society. We curate two datasets, Reddit comments (660k) and Tumblr posts (2.05M), and our experimental evaluation shows that the tools are highly inaccurate, with an overall accuracy of ~50% on all platforms. Predictions for non-binary comments on all platforms are mostly female, thus propagating the societal bias that non-binary individuals are effeminate. To address this, we fine-tune a BERT multi-label classifier on the two datasets in multiple combinations, and observe an overall performance of ~77% in the most realistically deployable setting and a surprisingly higher performance of 90% for the non-binary class. We also audit ChatGPT using zero-shot prompts on a small dataset (due to high pricing) and observe an average accuracy of 58% for Reddit and Tumblr combined (with overall better results for Reddit). Thus, we show that existing systems, including highly advanced ones like ChatGPT, are biased and need better audits and moderation, and that such societal biases can be addressed and alleviated through simple off-the-shelf models like BERT trained on more gender-inclusive datasets.
