Anastasia Miin

Optimizing Large Language Models for Dynamic Constraints through Human-in-the-Loop Discriminators

Oct 19, 2024

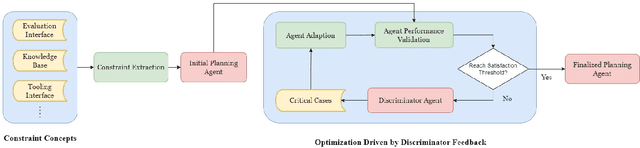

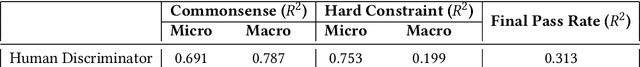

Abstract: Large Language Models (LLMs) have recently demonstrated impressive capabilities across various real-world applications. However, under the current text-in-text-out paradigm, it remains challenging for LLMs to handle dynamic and complex application constraints, let alone devise general solutions that meet predefined system goals. Common practices such as model finetuning and reflection-based reasoning often address these issues case by case, which limits their generalizability. To overcome this limitation, we propose a flexible framework that enables LLMs to interact with system interfaces, summarize constraint concepts, and continually optimize performance metrics by collaborating with human experts. As a case in point, we initialized a travel planner agent by establishing constraints from evaluation interfaces. We then employed both LLM-based and human discriminators to identify critical cases and continuously improve agent performance until the desired outcomes were achieved. After just one iteration, our framework achieved a $7.78\%$ pass rate with the human discriminator (a $40.2\%$ improvement over baseline) and a $6.11\%$ pass rate with the LLM-based discriminator. Given the adaptability of our proposal, we believe this framework can be applied to a wide range of constraint-based applications and can lay a solid foundation for model finetuning with performance-sensitive data samples.
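
The abstract reports pass rates after one iteration but not the baseline itself. As a rough reading aid, the sketch below back-computes the baseline that the reported figures would imply. It assumes the $40.2\%$ figure is a relative improvement over the baseline pass rate (the abstract does not say so explicitly), and the function and variable names are hypothetical, chosen only for illustration.

```python
def implied_baseline(pass_rate: float, relative_improvement: float) -> float:
    """Return the baseline b such that pass_rate = b * (1 + relative_improvement)."""
    return pass_rate / (1.0 + relative_improvement)


# Figures quoted in the abstract, in percent.
human_pass_rate = 7.78
llm_pass_rate = 6.11

# ASSUMPTION: 40.2% is relative to the baseline pass rate, not an
# absolute gain in percentage points.
relative_gain = 0.402

baseline = implied_baseline(human_pass_rate, relative_gain)

# Relative gain of the LLM-based discriminator under the same assumption.
llm_gain = llm_pass_rate / baseline - 1.0

print(f"implied baseline pass rate: {baseline:.2f}%")
print(f"implied LLM-discriminator relative gain: {llm_gain:.1%}")
```

Under that assumption the implied baseline is about $5.55\%$, which would put the LLM-based discriminator roughly $10\%$ above baseline in relative terms. These are derived values, not results stated by the authors.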
