Alperen Kantarci

MOCCA: Multi-Layer One-Class Classification for Anomaly Detection

Dec 09, 2020

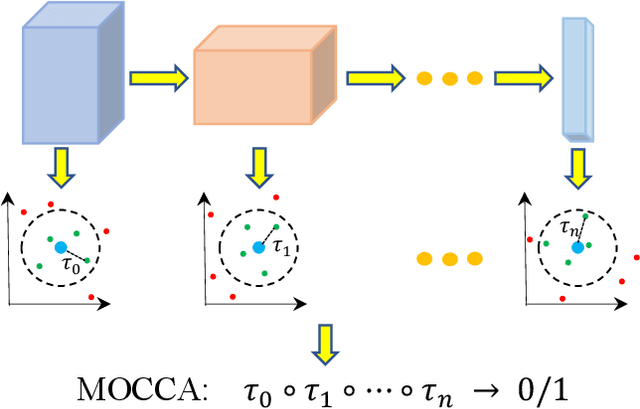

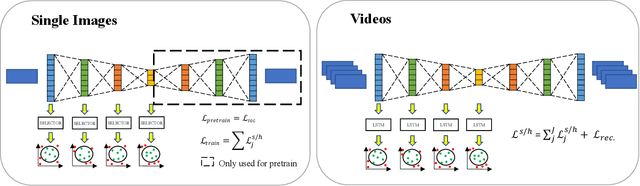

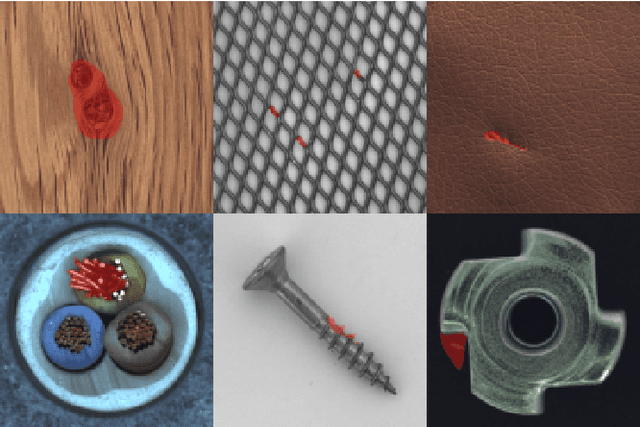

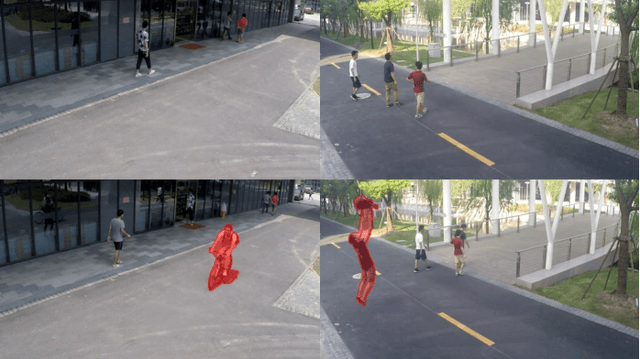

Abstract: Anomalies are ubiquitous in all scientific fields and can express an unexpected event due to incomplete knowledge about the data distribution or an unknown process that suddenly comes into play and distorts the observations. Because such events are rare, it is common to train deep learning models on "normal", i.e. non-anomalous, datasets only, thus letting the neural network model the distribution underlying the input data. In this context, we propose our deep learning approach to the anomaly detection problem, named Multi-Layer One-Class Classification (MOCCA). We explicitly leverage the piece-wise nature of deep neural networks by exploiting information extracted at different depths to detect abnormal data instances. We show how combining the representations extracted from multiple layers of a model leads to higher discrimination performance than typical approaches proposed in the literature, which are based on the neural network's final output only. We propose to train the model by minimizing the $L_2$ distance between the input representation and a reference point, the anomaly-free training data centroid, at each considered layer. We conduct extensive experiments on publicly available datasets for anomaly detection, namely CIFAR10, MVTec AD, and ShanghaiTech, considering both the single-image and video-based scenarios. We show that our method reaches superior performance compared to the state-of-the-art approaches available in the literature. Moreover, we provide a model analysis to give insight into how our approach works.
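The multi-layer idea in the abstract can be illustrated with a small NumPy sketch. It is not the authors' implementation: the feature arrays stand in for representations taken from two layers of some network, and the names `layer_centroids` and `multilayer_score`, the feature dimensions, and the choice to sum squared distances across layers are all assumptions made for illustration. Each layer gets a centroid computed from anomaly-free training features, and a sample is scored by its distance to those centroids.

```python
import numpy as np

def layer_centroids(features_per_layer):
    """Mean feature vector of the anomaly-free training data, one per layer."""
    return [f.mean(axis=0) for f in features_per_layer]

def multilayer_score(sample_features, centroids):
    """Sum over layers of the squared L2 distance to that layer's centroid.

    Summing the per-layer distances is an assumption of this sketch.
    """
    return float(sum(np.sum((z - c) ** 2)
                     for z, c in zip(sample_features, centroids)))

rng = np.random.default_rng(0)

# Hypothetical features from two layers (dimensions 8 and 4)
# for 100 anomaly-free training samples.
train_feats = [
    rng.normal(0.0, 1.0, size=(100, 8)),
    rng.normal(0.0, 1.0, size=(100, 4)),
]
centroids = layer_centroids(train_feats)

# One sample close to the training distribution, one far from it.
normal_sample = [np.zeros(8), np.zeros(4)]
shifted_sample = [np.full(8, 5.0), np.full(4, 5.0)]

normal_score = multilayer_score(normal_sample, centroids)
shifted_score = multilayer_score(shifted_sample, centroids)
print(normal_score < shifted_score)
```

In an actual training setup the same per-layer distance would be minimized over the network's parameters on normal data, so that samples far from every centroid at test time receive a high anomaly score.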
