Alona Sakhnenko

Is data-efficient learning feasible with quantum models?

Aug 26, 2025

Abstract: The importance of analyzing nontrivial datasets when testing quantum machine learning (QML) models is becoming increasingly prominent in the literature, yet a cohesive framework for understanding dataset characteristics remains elusive. In this work, we concentrate on the size of the dataset as an indicator of its complexity and explore the potential for QML models to demonstrate superior data efficiency compared to classical models, particularly through the lens of quantum kernel methods (QKMs). We provide a method for generating semi-artificial, fully classical datasets, on which we show some of the first evidence for the existence of classical datasets where QKMs require less data during training. Additionally, our study introduces a new analytical tool to the QML domain, derived for classical kernel methods, which can be used to investigate the classical-quantum gap. Our empirical results reveal that QKMs can achieve low error rates with less training data than their classical counterparts. Furthermore, our method allows for the generation of datasets with varying properties, facilitating further investigation into the characteristics of real-world datasets that may be particularly advantageous for QKMs. We also show that the performance predicted by the analytical tool we propose, a generalization metric from the classical domain, aligns closely with the empirical evidence, which fills a gap previously existing in the field. We pave the way for a comprehensive exploration of dataset complexities, providing insights into how these complexities influence QML performance relative to traditional methods. This research contributes to a deeper understanding of the generalization benefits of QKM models and potentially a broader family of QML models, setting the stage for future advancements in the field.

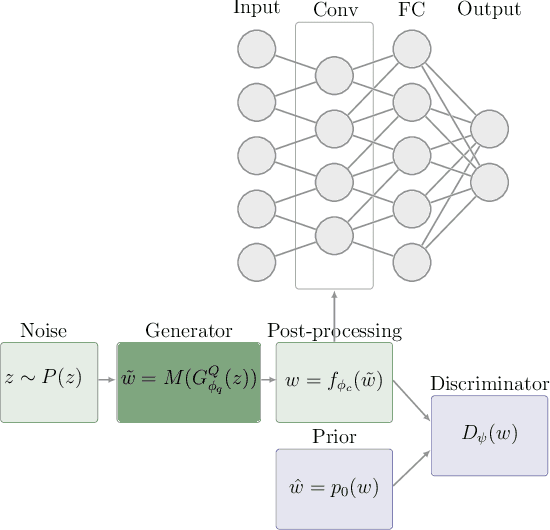

Enhancing the Scalability of Classical Surrogates for Real-World Quantum Machine Learning Applications

Aug 08, 2025

Abstract: Quantum machine learning (QML) presents potential for early industrial adoption, yet limited access to quantum hardware remains a significant bottleneck for the deployment of QML solutions. This work explores the use of classical surrogates to bypass this restriction: a technique that builds a lightweight classical representation of a (trained) quantum model, so that inference can be performed on entirely classical devices. We reveal the prohibitively high computational demand associated with previously proposed methods for generating classical surrogates from quantum models, and propose an alternative pipeline that enables the generation of classical surrogates at a larger scale than was previously possible. Previous methods required at least a high-performance computing (HPC) system for quantum models below industrial scale (ca. 20 qubits), which raises questions about their practicality. We greatly reduce the redundancies of the previous approach, utilizing only a minute fraction of the resources previously needed. We demonstrate the effectiveness of our method on a real-world energy demand forecasting problem, conducting rigorous testing of performance and computational demand both in simulations and on quantum hardware. Our results indicate that our method achieves high accuracy on the testing dataset while its computational resource requirements scale linearly rather than exponentially. This work presents a lightweight approach to transforming quantum solutions into classically deployable versions, facilitating faster integration of quantum technology in industrial settings. Furthermore, it can serve as a powerful research tool in the search for practical quantum advantage in an empirical setup.

Generalization Bounds in Hybrid Quantum-Classical Machine Learning Models

Apr 11, 2025

Abstract: Hybrid classical-quantum models aim to harness the strengths of both quantum computing and classical machine learning, but their practical potential remains poorly understood. In this work, we develop a unified mathematical framework for analyzing generalization in hybrid models, offering insight into how these systems learn from data. We establish a novel generalization bound of the form $O\big( \sqrt{\frac{T\log{T}}{N}} + \frac{\alpha}{\sqrt{N}}\big)$ for $N$ training data points, $T$ trainable quantum gates, and bounded fully-connected layers $\|F\| \leq \alpha$. This bound decomposes cleanly into quantum and classical contributions, extending prior work on both components and clarifying their interaction. We apply our results to the quantum-classical convolutional neural network (QCCNN), an architecture that integrates quantum convolutional layers with classical processing. Alongside the bound, we highlight conceptual limitations of applying classical statistical learning theory in the hybrid setting and suggest promising directions for future theoretical work.
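
As a rough illustration of how the two terms of this bound scale, the following Python sketch evaluates them separately. The function name `hybrid_bound_terms` and the constant `c` are hypothetical: the abstract states the bound only up to the constant hidden in the big-O notation, so the values indicate scaling behavior, not actual error guarantees.

```python
import math


def hybrid_bound_terms(T, N, alpha, c=1.0):
    """Return the (quantum, classical) terms of sqrt(T log T / N) + alpha / sqrt(N).

    T     -- number of trainable quantum gates (T >= 1)
    N     -- number of training data points (N >= 1)
    alpha -- norm bound on the fully-connected layers, ||F|| <= alpha
    c     -- hypothetical stand-in for the constant hidden by the big-O notation
    """
    if T < 1 or N < 1:
        raise ValueError("T and N must be at least 1")
    quantum = c * math.sqrt(T * math.log(T) / N)
    classical = c * alpha / math.sqrt(N)
    return quantum, classical


if __name__ == "__main__":
    # Both terms shrink like 1 / sqrt(N): quadrupling N halves each of them.
    for N in (100, 400, 1600):
        quantum, classical = hybrid_bound_terms(T=50, N=N, alpha=2.0)
        print(f"N={N:5d}  quantum={quantum:.4f}  classical={classical:.4f}")
```

Under these assumptions, both contributions decay at the same $1/\sqrt{N}$ rate, so which term dominates is determined by how $\sqrt{T\log T}$ compares with $\alpha$.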

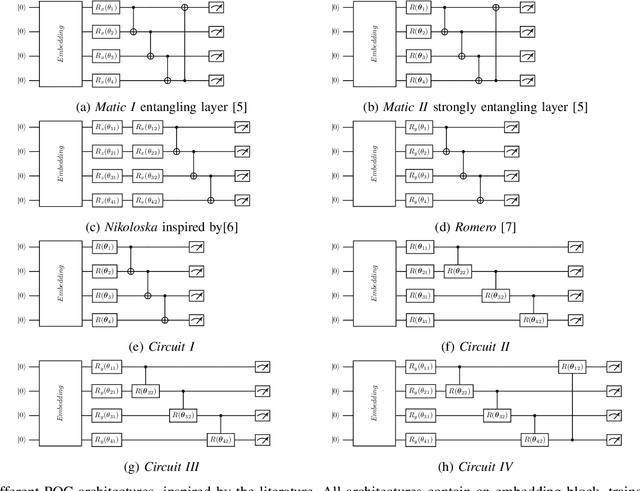

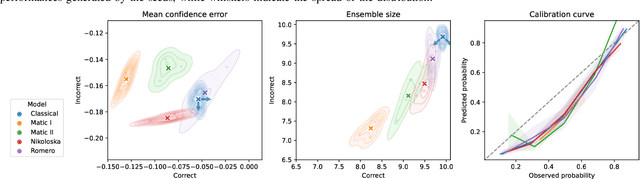

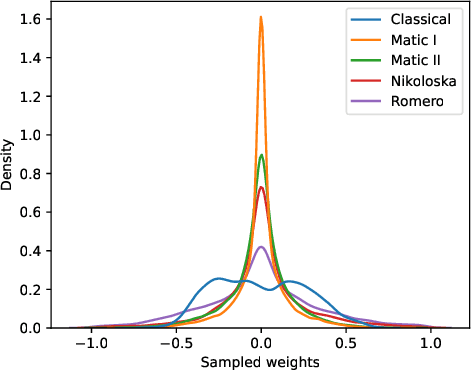

Building Continuous Quantum-Classical Bayesian Neural Networks for a Classical Clinical Dataset

Jun 10, 2024

Abstract: In this work, we introduce a Quantum-Classical Bayesian Neural Network (QCBNN) that is capable of performing uncertainty-aware classification of a classical medical dataset. This model is a symbiosis of a classical convolutional NN that performs ultrasound image processing and a quantum circuit that generates its stochastic weights, within a Bayesian learning framework. To test the utility of this idea for possible future deployment in the medical sector, we track multiple behavioral metrics that capture both predictive performance and the model's uncertainty. It is our ambition to create a hybrid model that is capable of classifying samples in a more uncertainty-aware fashion, which will advance the trustworthiness of these models and thus bring us a step closer to utilizing them in industry. We test multiple setups of the quantum circuit for this task, and our best architectures display a bigger uncertainty gap between correctly and incorrectly identified samples than their classical benchmark, at the expense of a slight drop in predictive performance. The innovation of this paper is two-fold: (1) combining different approaches that allow the stochastic weights from the quantum circuit to be continuous, thus allowing the model to classify an application-driven dataset; (2) studying the architectural features of the quantum circuit that make or break these models, which paves the way for further investigation of more informed architectural designs.

A Hyperparameter Study for Quantum Kernel Methods

Oct 18, 2023

Abstract: Quantum kernel methods are a promising approach in quantum machine learning thanks to the guarantees connected to them. Their accessibility for analytic considerations also opens up the possibility of prescreening datasets based on their potential for a quantum advantage. To do so, earlier works developed the geometric difference, which can be understood as a closeness measure between two kernel-based machine learning approaches, most importantly between a quantum kernel and a classical kernel. This metric links the quantum and classical model complexities. It therefore raises the question of whether the geometric difference, based on its relation to model complexity, can be a useful tool in evaluations other than that of the potential for quantum advantage. In this work, we investigate the effects of hyperparameter choice on the model performance and the generalization gap between classical and quantum kernels. The importance of hyperparameter optimization is also well known for classical machine learning. Especially for the quantum Hamiltonian evolution feature map, the scaling of the input data has been shown to be crucial. However, there are additional parameters left to be optimized, such as the best number of qubits to trace out before computing a projected quantum kernel. We investigate the influence of these hyperparameters and compare the classically reliable method of cross-validation with the method of choosing based on the geometric difference. Based on a thorough investigation of the hyperparameters across 11 datasets, we identified commonalities that can be exploited when examining a new dataset. In addition, our findings contribute to a better understanding of the applicability of the geometric difference.
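
To make the geometric difference more concrete, here is a minimal NumPy sketch. It assumes the commonly cited unregularized form $g = \sqrt{\lVert \sqrt{K_Q}\, K_C^{-1} \sqrt{K_Q} \rVert_\infty}$ (spectral norm) from the earlier works the abstract refers to; the abstract itself does not state the formula. The function names and the small diagonal jitter `reg` are assumptions added for illustration and numerical stability, and the Gram matrices are usually normalized (for example to trace $N$) before comparison.

```python
import numpy as np


def psd_sqrt(K):
    """Matrix square root of a symmetric positive semidefinite matrix."""
    eigvals, eigvecs = np.linalg.eigh(K)
    eigvals = np.clip(eigvals, 0.0, None)  # drop tiny negative round-off values
    return (eigvecs * np.sqrt(eigvals)) @ eigvecs.T


def geometric_difference(K_q, K_c, reg=1e-8):
    """Sketch of g = sqrt(|| sqrt(K_q) (K_c + reg*I)^-1 sqrt(K_q) ||_2).

    K_q, K_c -- quantum and classical Gram matrices on the same N samples
    reg      -- hypothetical jitter so the inverse exists; not from the abstract
    """
    n = K_c.shape[0]
    sqrt_q = psd_sqrt(K_q)
    K_c_inv = np.linalg.inv(K_c + reg * np.eye(n))
    M = sqrt_q @ K_c_inv @ sqrt_q
    return float(np.sqrt(np.linalg.norm(M, ord=2)))  # ord=2 is the spectral norm


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.normal(size=(20, 3))
    sq_dists = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    K_c = np.exp(-0.5 * sq_dists)  # classical RBF kernel
    K_q = np.exp(-2.0 * sq_dists)  # placeholder standing in for a quantum kernel
    print(geometric_difference(K_q, K_c))
```

In this form, a value close to 1 suggests the two kernels induce similar geometry on the data, while a large value marks datasets on which the kernels differ enough that a separation in performance is possible, though not guaranteed.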
