Alison Lesley Marsden

Full-field surrogate modeling of cardiac function encoding geometric variability

Apr 29, 2025Abstract:Combining physics-based modeling with data-driven methods is critical to enabling the translation of computational methods to clinical use in cardiology. The use of rigorous differential equations combined with machine learning tools allows for model personalization with uncertainty quantification in time frames compatible with clinical practice. However, accurate and efficient surrogate models of cardiac function, built from physics-based numerical simulation, are still mostly geometry-specific and require retraining for different patients and pathological conditions. We propose a novel computational pipeline to embed cardiac anatomies into full-field surrogate models. We generate a dataset of electrophysiology simulations using a complex multi-scale mathematical model coupling partial and ordinary differential equations. We adopt Branched Latent Neural Maps (BLNMs) as an effective scientific machine learning method to encode activation maps extracted from physics-based numerical simulations into a neural network. Leveraging large deformation diffeomorphic metric mappings, we build a biventricular anatomical atlas and parametrize the anatomical variability of a small and challenging cohort of 13 pediatric patients affected by Tetralogy of Fallot. We propose a novel statistical shape modeling based z-score sampling approach to generate a new synthetic cohort of 52 biventricular geometries that are compatible with the original geometrical variability. This synthetic cohort acts as the training set for BLNMs. Our surrogate model demonstrates robustness and great generalization across the complex original patient cohort, achieving an average adimensional mean squared error of 0.0034. The Python implementation of our BLNM model is publicly available under MIT License at https://github.com/StanfordCBCL/BLNM.

Branched Latent Neural Operators

Aug 04, 2023

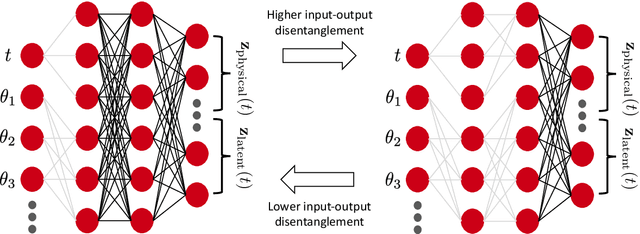

Abstract:We introduce Branched Latent Neural Operators (BLNOs) to learn input-output maps encoding complex physical processes. A BLNO is defined by a simple and compact feedforward partially-connected neural network that structurally disentangles inputs with different intrinsic roles, such as the time variable from model parameters of a differential equation, while transferring them into a generic field of interest. BLNOs leverage interpretable latent outputs to enhance the learned dynamics and break the curse of dimensionality by showing excellent generalization properties with small training datasets and short training times on a single processor. Indeed, their generalization error remains comparable regardless of the adopted discretization during the testing phase. Moreover, the partial connections, in place of a fully-connected structure, significantly reduce the number of tunable parameters. We show the capabilities of BLNOs in a challenging test case involving biophysically detailed electrophysiology simulations in a biventricular cardiac model of a pediatric patient with hypoplastic left heart syndrome. The model includes a purkinje network for fast conduction and a heart-torso geometry. Specifically, we trained BLNOs on 150 in silico generated 12-lead electrocardiograms (ECGs) while spanning 7 model parameters, covering cell-scale, organ-level and electrical dyssynchrony. Although the 12-lead ECGs manifest very fast dynamics with sharp gradients, after automatic hyperparameter tuning the optimal BLNO, trained in less than 3 hours on a single CPU, retains just 7 hidden layers and 19 neurons per layer. The mean square error is on the order of $10^{-4}$ on an independent test dataset comprised of 50 additional electrophysiology simulations. This paper provides a novel computational tool to build reliable and efficient reduced-order models for digital twinning in engineering applications.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge