Alfio Ferrara

Unveiling Transformer Perception by Exploring Input Manifolds

Oct 08, 2024

Abstract: This paper introduces a general method for the exploration of equivalence classes in the input space of Transformer models. The proposed approach is based on sound mathematical theory which describes the internal layers of a Transformer architecture as sequential deformations of the input manifold. Using eigendecomposition of the pullback of the distance metric defined on the output space through the Jacobian of the model, we are able to reconstruct equivalence classes in the input space and navigate across them. We illustrate how this method can be used as a powerful tool for investigating how a Transformer sees the input space, facilitating local and task-agnostic explainability in Computer Vision and Natural Language Processing tasks.
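The pullback construction mentioned in this abstract can be illustrated on a toy problem. The sketch below is not the paper's implementation: it assumes a hypothetical two-dimensional `model` in place of a Transformer, a Euclidean metric on the output space (so the pullback is G = JᵀJ), and a finite-difference Jacobian rather than automatic differentiation. Directions with near-zero eigenvalue of G leave the output locally unchanged, so stepping along them stays approximately inside one equivalence class.

```python
import math

def model(x):
    # Hypothetical toy "model": the output depends only on the squared norm,
    # so every circle centred at the origin is one equivalence class.
    return [x[0] ** 2 + x[1] ** 2]

def jacobian(f, x, eps=1e-6):
    # Central finite differences; rows index outputs, columns index inputs.
    m = len(f(x))
    J = [[0.0] * len(x) for _ in range(m)]
    for j in range(len(x)):
        xp, xm = list(x), list(x)
        xp[j] += eps
        xm[j] -= eps
        fp, fm = f(xp), f(xm)
        for i in range(m):
            J[i][j] = (fp[i] - fm[i]) / (2 * eps)
    return J

def pullback_metric(J):
    # G = J^T J: pullback of the (assumed) Euclidean output metric.
    n = len(J[0])
    return [[sum(row[a] * row[b] for row in J) for b in range(n)]
            for a in range(n)]

def null_direction(G):
    # Closed-form smallest eigenpair of a symmetric 2x2 matrix.
    a, b, d = G[0][0], G[0][1], G[1][1]
    lam = (a + d) / 2 - math.hypot((a - d) / 2, b)
    # Both candidates solve (G - lam*I) v = 0; keep the better-conditioned one.
    v1, v2 = (b, lam - a), (lam - d, b)
    v = v1 if math.hypot(*v1) >= math.hypot(*v2) else v2
    norm = math.hypot(*v)
    if norm == 0.0:
        return lam, (1.0, 0.0)  # G is zero: every direction is a null direction
    return lam, (v[0] / norm, v[1] / norm)

def walk(f, x, step=0.01, n_steps=100):
    # Repeatedly step along the direction the output metric cannot "see".
    prev = None
    for _ in range(n_steps):
        _, v = null_direction(pullback_metric(jacobian(f, x)))
        # Eigenvectors are defined up to sign; keep a consistent orientation.
        if prev is not None and v[0] * prev[0] + v[1] * prev[1] < 0:
            v = (-v[0], -v[1])
        x = [x[0] + step * v[0], x[1] + step * v[1]]
        prev = v
    return x

start = [1.0, 2.0]
end = walk(model, start)
print(model(start), model(end))
```

In this toy setting the input travels roughly one unit along the circle while the output stays close to its starting value; the small residual drift comes from the fixed-size Euler steps, not from the metric itself.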

LiMe: a Latin Corpus of Late Medieval Criminal Sentences

Apr 19, 2024

Abstract: The Latin language has received attention from the computational linguistics research community, which has built, over the years, several valuable resources, ranging from detailed annotated corpora to sophisticated tools for linguistic analysis. With the recent advent of large language models, researchers have also started developing models capable of generating vector representations of Latin texts. The performance of such models still lags behind that of models for modern languages, given the disparity in available data. In this paper, we present the LiMe dataset, a corpus of 325 documents extracted from a series of medieval manuscripts called Libri sententiarum potestatis Mediolani and thoroughly annotated by experts, so that it can be used for masked language modeling as well as supervised natural language processing tasks.

How BERT Speaks Shakespearean English? Evaluating Historical Bias in Contextual Language Models

Feb 07, 2024

Abstract: In this paper, we explore the idea of analysing the historical bias of contextual language models based on BERT by measuring their adequacy with respect to Early Modern (EME) and Modern (ME) English. In our preliminary experiments, we perform fill-in-the-blank tests with 60 masked sentences (20 EME-specific, 20 ME-specific and 20 generic) and three different models (i.e., BERT Base, MacBERTh, English HLM). We then rate the model predictions according to a 5-point bipolar scale between the two language varieties and derive a weighted score to measure the adequacy of each model to EME and ME varieties of English.

Incremental Affinity Propagation based on Cluster Consolidation and Stratification

Jan 25, 2024

Abstract: Modern data mining applications require incremental clustering over dynamic datasets, tracing temporal changes in the resulting clusters. In this paper, we propose A-Posteriori affinity Propagation (APP), an incremental extension of Affinity Propagation (AP) based on cluster consolidation and cluster stratification to achieve faithfulness and forgetfulness. APP enforces incremental clustering where i) newly arriving objects are dynamically consolidated into previous clusters without the need to re-execute clustering over the entire dataset of objects, and ii) a faithful sequence of clustering results is produced and maintained over time, while allowing obsolete clusters to be forgotten through decremental learning functionalities. Four popular labeled datasets are used to test the performance of APP against the benchmark clustering performance obtained by the conventional AP and Incremental Affinity Propagation based on Nearest neighbor Assignment (IAPNA) algorithms. Experimental results show that APP achieves comparable clustering performance while enforcing scalability at the same time.

An Explainable Probabilistic Classifier for Categorical Data Inspired to Quantum Physics

May 26, 2021

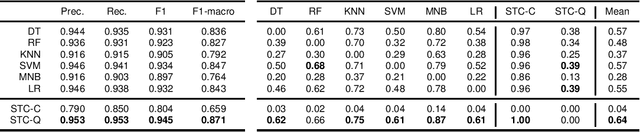

Abstract: This paper presents Sparse Tensor Classifier (STC), a supervised classification algorithm for categorical data inspired by the notion of superposition of states in quantum physics. By regarding an observation as a superposition of features, we introduce the concept of wave-particle duality in machine learning and propose a generalized framework that unifies the classical and the quantum probability. We show that STC possesses a wide range of desirable properties not available in most other machine learning methods, while at the same time being exceptionally easy to comprehend and use. Empirical evaluation of STC on structured data and text classification demonstrates that our methodology achieves state-of-the-art performance compared to both standard classifiers and deep learning, with the additional benefit of requiring minimal data pre-processing and hyper-parameter tuning. Moreover, STC provides a native explanation of its predictions both for single instances and for each target label globally.
