Alexander Boer

Probing for Knowledge Attribution in Large Language Models

Feb 26, 2026

Abstract: Large language models (LLMs) often generate fluent but unfounded claims, or hallucinations, which fall into two types: (i) faithfulness violations (misusing user context) and (ii) factuality violations (errors from internal knowledge). Proper mitigation depends on knowing whether a model's answer is based on the prompt or its internal weights. This work focuses on the problem of contributive attribution: identifying the dominant knowledge source behind each output. We show that a probe, a simple linear classifier trained on model hidden representations, can reliably predict contributive attribution. For its training, we introduce AttriWiki, a self-supervised data pipeline that prompts models to recall withheld entities from memory or read them from context, generating labelled examples automatically. Probes trained on AttriWiki data reveal a strong attribution signal, achieving up to 0.96 Macro-F1 on Llama-3.1-8B, Mistral-7B, and Qwen-7B, transferring to out-of-domain benchmarks (SQuAD, WebQuestions) with 0.94-0.99 Macro-F1 without retraining. Attribution mismatches raise error rates by up to 70%, demonstrating a direct link between knowledge source confusion and unfaithful answers. Yet, models may still respond incorrectly even when attribution is correct, highlighting the need for broader detection frameworks.
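The abstract describes a probe as a simple linear classifier trained on hidden representations and scored with Macro-F1. The sketch below illustrates that general idea only; it is not the authors' implementation and does not use AttriWiki or any real model. The synthetic Gaussian "hidden states", the label convention (0 = answer recalled from weights, 1 = answer read from context), and all function names are assumptions made for illustration.

```python
import math
import random


def make_synthetic_states(n, dim, rng):
    """Hypothetical stand-in for hidden states: two Gaussian clusters.

    Label 0 = 'parametric' (recalled from weights),
    label 1 = 'contextual' (read from the prompt).
    """
    data = []
    for _ in range(n):
        label = rng.randint(0, 1)
        shift = 1.0 if label == 1 else -1.0
        vec = [rng.gauss(shift, 1.0) for _ in range(dim)]
        data.append((vec, label))
    return data


def train_linear_probe(data, dim, epochs=20, lr=0.1):
    """Fit a logistic-regression probe (weights w, bias b) with plain SGD."""
    w = [0.0] * dim
    b = 0.0
    for _ in range(epochs):
        for x, y in data:
            z = sum(wi * xi for wi, xi in zip(w, x)) + b
            z = max(-30.0, min(30.0, z))  # clamp to keep exp() well-behaved
            p = 1.0 / (1.0 + math.exp(-z))
            g = p - y  # gradient of the log loss with respect to z
            w = [wi - lr * g * xi for wi, xi in zip(w, x)]
            b -= lr * g
    return w, b


def predict(w, b, x):
    """Return 1 if the linear score is non-negative, else 0."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b >= 0 else 0


def macro_f1(y_true, y_pred, classes=(0, 1)):
    """Unweighted mean of the per-class F1 scores."""
    scores = []
    for c in classes:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


def run_demo(seed=0, dim=8):
    """Train on synthetic data and return Macro-F1 on a held-out split."""
    rng = random.Random(seed)
    train = make_synthetic_states(400, dim, rng)
    test = make_synthetic_states(200, dim, rng)
    w, b = train_linear_probe(train, dim)
    y_true = [y for _, y in test]
    y_pred = [predict(w, b, x) for x, _ in test]
    return macro_f1(y_true, y_pred)


if __name__ == "__main__":
    print(f"Macro-F1 on synthetic held-out data: {run_demo():.3f}")
```

In a real setting, the synthetic vectors would be replaced by hidden states extracted from a chosen layer of the model, and the labels by whatever the data pipeline assigns. The score obtained on this toy data says nothing about the results reported in the abstract.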

The Role of Normware in Trustworthy and Explainable AI

Dec 06, 2018

Abstract: For being potentially destructive, in practice incomprehensible and for the most unintelligible, contemporary technology is setting high challenges on our society. New conception methods are urgently required. Reorganizing ideas and discussions presented in AI and related fields, this position paper aims to highlight the importance of normware (that is, computational artifacts specifying norms) with respect to these issues, and argues for its irreducibility with respect to software by making explicit its neglected ecological dimension in the decision-making cycle.

Institutional Metaphors for Designing Large-Scale Distributed AI versus AI Techniques for Running Institutions

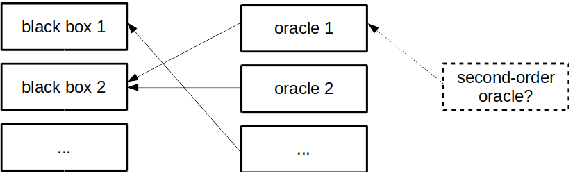

Mar 09, 2018

Abstract: Artificial Intelligence (AI) started out with an ambition to reproduce the human mind, but, as the sheer scale of that ambition became apparent, quickly retreated into either studying specialized intelligent behaviours, or proposing overarching architectural concepts for interfacing specialized intelligent behaviour components, conceived of as agents in a kind of organization. This agent-based modeling paradigm, in turn, proves to have interesting applications in understanding, simulating, and predicting the behaviour of social and legal structures on an aggregate level. This chapter examines a number of relevant cross-cutting concerns, conceptualizations, modeling problems and design challenges in large-scale distributed Artificial Intelligence, as well as in institutional systems, and identifies potential grounds for novel advances.
