Albert T. Young

Robust Semantic Interpretability: Revisiting Concept Activation Vectors

Apr 06, 2021

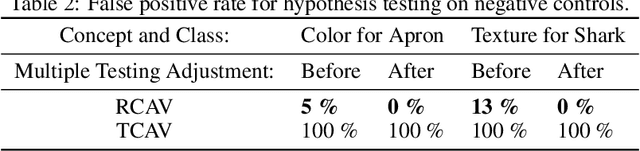

Abstract: Interpretability methods for image classification assess model trustworthiness by attempting to expose whether the model is systematically biased or attending to the same cues as a human would. Saliency methods for feature attribution dominate the interpretability literature, but these methods do not address semantic concepts such as the textures, colors, or genders of objects within an image. Our proposed Robust Concept Activation Vectors (RCAV) quantifies the effects of semantic concepts on individual model predictions and on model behavior as a whole. RCAV calculates a concept gradient and takes a gradient ascent step to assess model sensitivity to the given concept. By generalizing previous work on concept activation vectors to account for model non-linearity, and by introducing stricter hypothesis testing, we show that RCAV yields interpretations which are both more accurate at the image level and robust at the dataset level. RCAV, like saliency methods, supports the interpretation of individual predictions. To evaluate the practical use of interpretability methods as debugging tools, and the scientific use of interpretability methods for identifying inductive biases (e.g., texture over shape), we construct two datasets and accompanying metrics for realistic benchmarking of semantic interpretability methods. Our benchmarks expose the importance of counterfactual augmentation and negative controls for quantifying the practical usability of interpretability methods.
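The idea of stepping along a concept direction and measuring the change in the model's output can be illustrated with a small NumPy sketch. This is not the RCAV implementation: the direction here is a plain difference of class means rather than a trained probe's gradient, the hypothesis testing mentioned in the abstract is omitted, and the names `concept_vector`, `concept_sensitivity`, `head`, and the toy weights `W` are all hypothetical.

```python
import numpy as np

def concept_vector(pos_acts, neg_acts):
    """Unit vector from the non-concept mean to the concept mean.

    Assumption: a mean-difference direction stands in for the
    concept gradient described in the abstract.
    """
    v = pos_acts.mean(axis=0) - neg_acts.mean(axis=0)
    return v / np.linalg.norm(v)

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)  # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def concept_sensitivity(head, acts, v, class_idx, step=1.0):
    """Change in the class probability after one step along v."""
    before = head(acts)[:, class_idx]
    after = head(acts + step * v)[:, class_idx]
    return after - before

# Toy setup (hypothetical): 4-dimensional activations and a
# non-linear head whose class-0 logit grows with dimension 0.
rng = np.random.default_rng(0)
pos_acts = rng.normal(size=(50, 4)) + np.array([2.0, 0.0, 0.0, 0.0])
neg_acts = rng.normal(size=(50, 4))
W = np.array([[1.0, -1.0], [0.0, 0.0], [0.0, 0.0], [0.0, 0.0]])

def head(acts):
    return softmax(np.tanh(acts) @ W)

v = concept_vector(pos_acts, neg_acts)
sens = concept_sensitivity(head, neg_acts, v, class_idx=0)
print("mean sensitivity of class 0 to the concept:", sens.mean())
```

Because the perturbed activations pass through the full non-linear head, the score reflects the model's actual response to the step rather than a first-order approximation, which is the motivation the abstract gives for accounting for non-linearity.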

Global Saliency: Aggregating Saliency Maps to Assess Dataset Artefact Bias

Oct 16, 2019

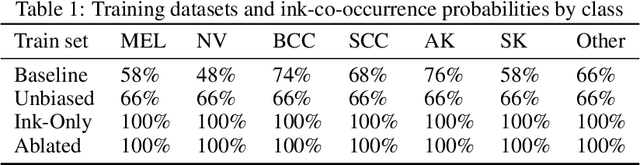

Abstract: In high-stakes applications of machine learning models, interpretability methods provide guarantees that models are right for the right reasons. In medical imaging, saliency maps have become the standard tool for determining whether a neural model has learned relevant robust features, rather than artefactual noise. However, saliency maps are limited to local model explanation because they interpret predictions on an image-by-image basis. We propose aggregating saliency globally, using semantic segmentation masks, to provide quantitative measures of model bias across a dataset. To evaluate global saliency methods, we propose two metrics for quantifying the validity of saliency explanations. We apply the global saliency method to skin lesion diagnosis to determine the effect of artefacts, such as ink, on model bias.
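One plausible way to aggregate saliency over a dataset with segmentation masks is sketched below. The abstract does not specify the aggregation or the two validity metrics, so the choice here (the fraction of absolute saliency mass inside an artefact mask, averaged over images) and the names `saliency_fraction` and `global_saliency` are assumptions for illustration only.

```python
import numpy as np

def saliency_fraction(saliency, mask):
    """Fraction of an image's absolute saliency that lies inside the mask."""
    s = np.abs(saliency)
    total = s.sum()
    if total == 0:
        return 0.0  # no saliency anywhere, so none is attributed to the mask
    return float(s[mask.astype(bool)].sum() / total)

def global_saliency(saliencies, masks):
    """Mean per-image saliency fraction across the dataset."""
    fractions = [saliency_fraction(s, m) for s, m in zip(saliencies, masks)]
    return float(np.mean(fractions))

# Toy data (hypothetical): two 4x4 saliency maps and one artefact
# mask covering the top-left 2x2 region.
mask = np.zeros((4, 4), dtype=bool)
mask[:2, :2] = True

uniform = np.ones((4, 4))          # saliency spread evenly over the image
concentrated = np.zeros((4, 4))    # saliency entirely on the artefact
concentrated[:2, :2] = 3.0

score = global_saliency([uniform, concentrated], [mask, mask])
print("global artefact saliency:", score)
```

A score near 1 would suggest the model's attributions concentrate on the masked artefact (for example, ink markings), while a score near the mask's share of the image area would suggest no particular attention to it.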