Ailton Oliveira

Machine Learning-Based mmWave MIMO Beam Tracking in V2I Scenarios: Algorithms and Datasets

Dec 06, 2024

Abstract: This work investigates the use of machine learning for the beam tracking problem in 5G networks and beyond. The goal is to decrease the overhead associated with MIMO millimeter-wave beamforming. Compared with beam selection (also called initial beam acquisition), ML-based beam tracking has been less investigated in the literature, due to factors such as the lack of comprehensive datasets. One contribution of this work is a new public multimodal dataset that includes images, LIDAR information and GNSS positioning, enabling the evaluation of new data fusion algorithms applied to wireless communications. The work also contributes an evaluation of the performance of beam tracking algorithms and the associated methodology. When the LIDAR data, the coordinates and the previously selected beams are used as inputs, the proposed deep neural network, based on ResNet with LSTM layers, significantly outperformed the other beam tracking models.

* 5 pages, conference: 2024 IEEE Latin-American Conference on Communications (LATINCOM)
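The overhead argument in the abstract above can be illustrated with a toy sketch. Initial acquisition measures every beam in a codebook. Tracking reuses the previously selected beam and re-measures only its neighbours. This is not the paper's ResNet+LSTM model. It assumes a half-wavelength uniform linear array, a single-path (line-of-sight only) channel, a DFT codebook, and a simple neighbour-window heuristic in place of a learned predictor. All function names and parameters (`half_width`, `n_antennas`, the trajectory values) are hypothetical.

```python
import cmath
import math


def steering_vector(n_antennas, u):
    """Half-wavelength ULA response for spatial frequency u = sin(theta) (assumed array model)."""
    return [cmath.exp(1j * math.pi * m * u) for m in range(n_antennas)]


def dft_codebook(n_antennas):
    """N unit-norm beams pointing at u_k = -1 + (2k + 1)/N, tiling [-1, 1]."""
    scale = 1 / math.sqrt(n_antennas)
    return [
        [scale * x for x in steering_vector(n_antennas, -1 + (2 * k + 1) / n_antennas)]
        for k in range(n_antennas)
    ]


def beam_gain(h, w):
    """Received power |w^H h|^2 when beam w is applied to channel h."""
    return abs(sum(wi.conjugate() * hi for wi, hi in zip(w, h))) ** 2


def exhaustive_search(h, codebook):
    """Initial acquisition: measure every beam. Returns (best index, measurements used)."""
    best = max(range(len(codebook)), key=lambda k: beam_gain(h, codebook[k]))
    return best, len(codebook)


def local_search(h, codebook, prev, half_width):
    """Hypothetical tracking heuristic: measure only beams within +/- half_width of prev."""
    first = max(0, prev - half_width)
    last = min(len(codebook), prev + half_width + 1)
    candidates = range(first, last)
    best = max(candidates, key=lambda k: beam_gain(h, codebook[k]))
    return best, len(candidates)


def track(trajectory, n_antennas=32, half_width=2):
    """Acquire exhaustively at the first step, then track locally.

    Returns one (chosen beam, exhaustive-search beam, measurements used) per step.
    """
    codebook = dft_codebook(n_antennas)
    results = []
    prev = None
    for u in trajectory:
        h = steering_vector(n_antennas, u)  # single-path channel toward direction u
        oracle, _ = exhaustive_search(h, codebook)  # reference answer for comparison
        if prev is None:
            chosen, cost = exhaustive_search(h, codebook)
        else:
            chosen, cost = local_search(h, codebook, prev, half_width)
        results.append((chosen, oracle, cost))
        prev = chosen
    return results


for chosen, oracle, cost in track([0.10, 0.12, 0.14, 0.16, 0.18]):
    print(f"beam {chosen} (exhaustive: {oracle}), {cost} measurements")
```

In this slowly moving example, the local window finds the same beam as a full sweep. It uses 5 measurements per step instead of 32. When the user moves faster than the window can follow, a fixed heuristic like this one fails, which motivates learned predictors that exploit side information such as LIDAR and position.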

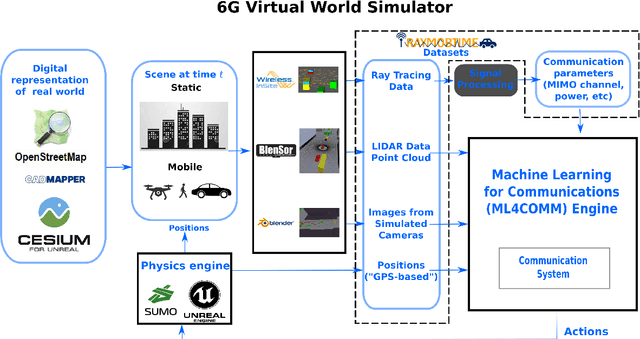

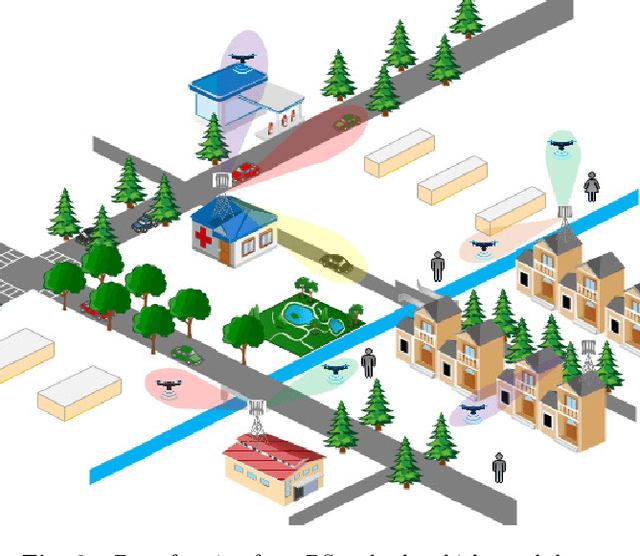

Simulation of machine learning-based 6G systems in virtual worlds

Apr 15, 2022

Abstract: Digital representations of the real world are being used in many applications, such as augmented reality. 6G systems will not only support use cases that rely on virtual worlds but also benefit from their rich contextual information to improve performance and reduce communication overhead. This paper focuses on the simulation of 6G systems that rely on a 3D representation of the environment, as captured by cameras and other sensors. We present new strategies for obtaining paired MIMO channels and multimodal data. We also discuss trade-offs between speed and accuracy when generating channels via ray tracing. Finally, we provide beam selection simulation results to assess the proposed methodology.
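Beam selection results of the kind mentioned above are often reported as top-k accuracy. A sample counts as correct if the best beam, taken from the simulated (for example, ray-traced) per-beam received powers, appears among the k beams that a model scores highest. The minimal sketch below computes that metric. The abstract does not state which metric the paper uses, so treat this as an assumed, generic example. The function names and the toy numbers are hypothetical.

```python
def best_beam_labels(beam_powers):
    """Label each sample with the index of its strongest beam (e.g. from ray-traced channels)."""
    return [max(range(len(p)), key=lambda i: p[i]) for p in beam_powers]


def top_k_accuracy(scores, labels, k):
    """Fraction of samples whose true best beam is among the k highest-scored beams."""
    hits = 0
    for sample_scores, best in zip(scores, labels):
        ranked = sorted(range(len(sample_scores)), key=lambda i: sample_scores[i], reverse=True)
        if best in ranked[:k]:
            hits += 1
    return hits / len(labels)


# Hypothetical toy data: 3 samples, 4-beam codebook.
powers = [[0.1, 0.9, 0.3, 0.2], [0.5, 0.1, 0.7, 0.05], [0.2, 0.3, 0.1, 0.8]]
model_scores = [[0.2, 0.6, 0.1, 0.1], [0.5, 0.1, 0.3, 0.1], [0.4, 0.3, 0.2, 0.1]]
labels = best_beam_labels(powers)
for k in (1, 2, 4):
    print(k, top_k_accuracy(model_scores, labels, k))
```

A larger k trades accuracy against overhead. The receiver would then sweep only the k suggested beams instead of the whole codebook.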
