Abu Saleh Musa Miah1

A Comprehensive Methodological Survey of Human Activity Recognition Across Divers Data Modalities

Sep 15, 2024

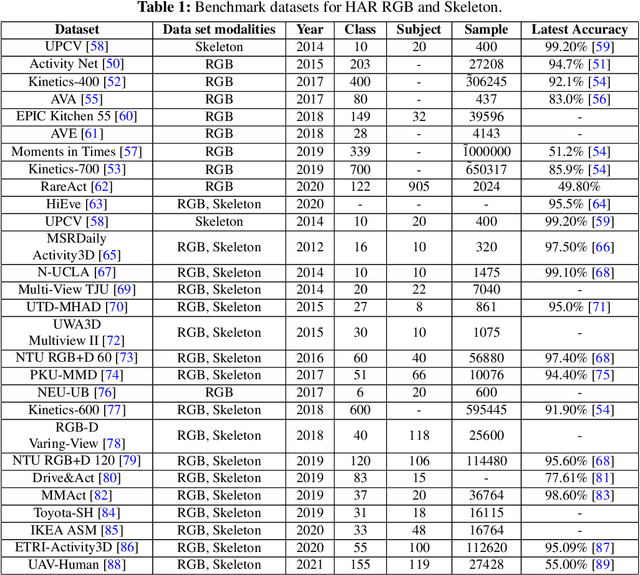

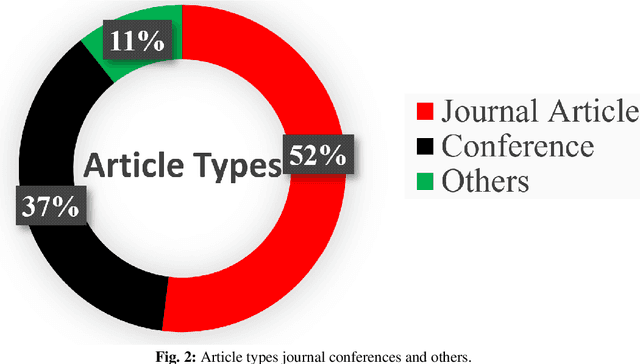

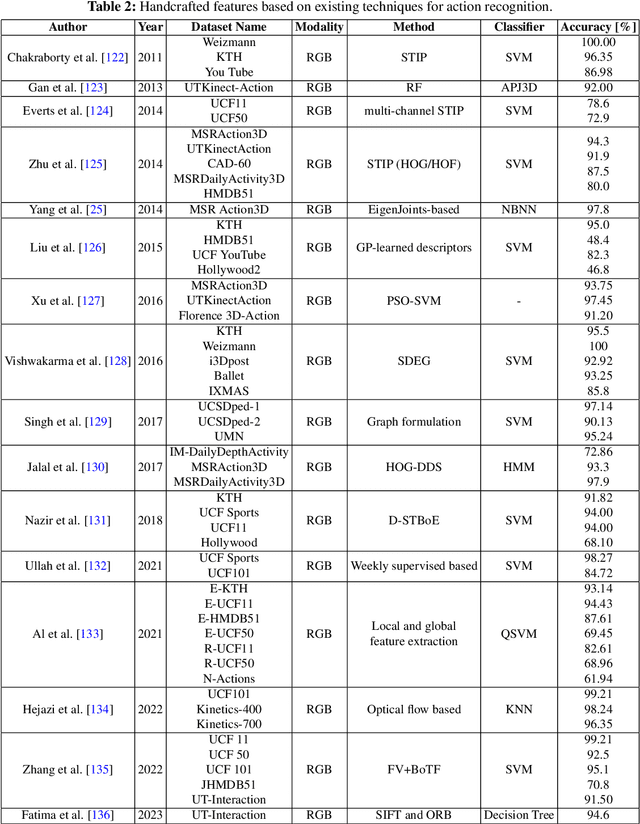

Abstract:Human Activity Recognition (HAR) systems aim to understand human behaviour and assign a label to each action, attracting significant attention in computer vision due to their wide range of applications. HAR can leverage various data modalities, such as RGB images and video, skeleton, depth, infrared, point cloud, event stream, audio, acceleration, and radar signals. Each modality provides unique and complementary information suited to different application scenarios. Consequently, numerous studies have investigated diverse approaches for HAR using these modalities. This paper presents a comprehensive survey of the latest advancements in HAR from 2014 to 2024, focusing on machine learning (ML) and deep learning (DL) approaches categorized by input data modalities. We review both single-modality and multi-modality techniques, highlighting fusion-based and co-learning frameworks. Additionally, we cover advancements in hand-crafted action features, methods for recognizing human-object interactions, and activity detection. Our survey includes a detailed dataset description for each modality and a summary of the latest HAR systems, offering comparative results on benchmark datasets. Finally, we provide insightful observations and propose effective future research directions in HAR.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge