Abhijith Srinivas Bidaralli

Defending Against Stealthy Backdoor Attacks

May 27, 2022

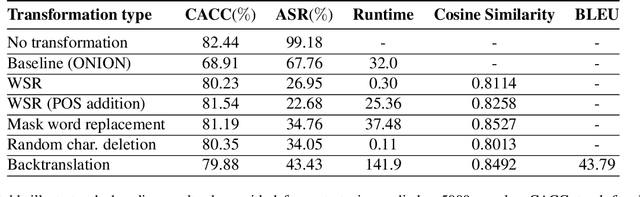

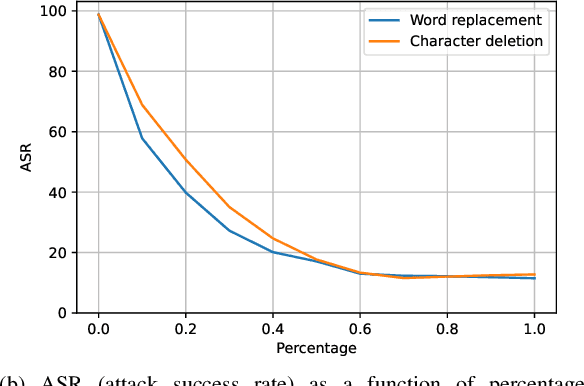

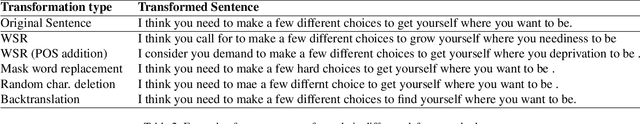

Abstract: Defenses against security threats have been a focus of recent studies. Recent work has shown that attacking a natural language processing (NLP) model is not difficult, while defending against such attacks remains a cat-and-mouse game. Backdoor attacks are one such threat: a neural network is made to behave in a specific, attacker-chosen way on samples containing certain triggers while producing normal results on all other samples. In this work, we present several defense strategies that can be used to counter such attacks. We show that our defense methodologies significantly reduce the attack's performance on attacked inputs while maintaining similar performance on benign inputs. We also show that some of our defenses have very low runtime and preserve similarity with the original inputs.
