Abdelaziz Kallel

Olive Tree Satellite Image Segmentation Based On SAM and Multi-Phase Refinement

Aug 28, 2025

Abstract:In the context of proven climate change, maintaining olive biodiversity through early anomaly detection and treatment using remote sensing technology is crucial, offering effective management solutions. This paper presents an innovative approach to olive tree segmentation from satellite images. By leveraging foundational models and advanced segmentation techniques, the study integrates the Segment Anything Model (SAM) to accurately identify and segment olive trees in agricultural plots. The methodology includes SAM segmentation and corrections based on trees alignement in the field and a learanble constraint about the shape and the size. Our approach achieved a 98\% accuracy rate, significantly surpassing the initial SAM performance of 82\%.

Cross-Attention Fusion of Visual and Geometric Features for Large Vocabulary Arabic Lipreading

Feb 18, 2024

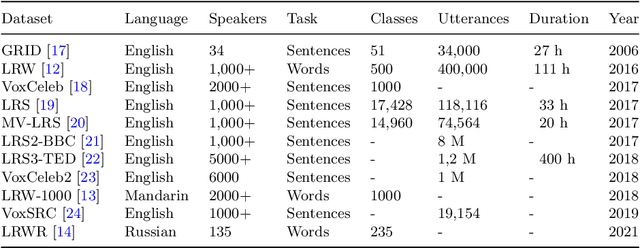

Abstract:Lipreading involves using visual data to recognize spoken words by analyzing the movements of the lips and surrounding area. It is a hot research topic with many potential applications, such as human-machine interaction and enhancing audio speech recognition. Recent deep-learning based works aim to integrate visual features extracted from the mouth region with landmark points on the lip contours. However, employing a simple combination method such as concatenation may not be the most effective approach to get the optimal feature vector. To address this challenge, firstly, we propose a cross-attention fusion-based approach for large lexicon Arabic vocabulary to predict spoken words in videos. Our method leverages the power of cross-attention networks to efficiently integrate visual and geometric features computed on the mouth region. Secondly, we introduce the first large-scale Lip Reading in the Wild for Arabic (LRW-AR) dataset containing 20,000 videos for 100-word classes, uttered by 36 speakers. The experimental results obtained on LRW-AR and ArabicVisual databases showed the effectiveness and robustness of the proposed approach in recognizing Arabic words. Our work provides insights into the feasibility and effectiveness of applying lipreading techniques to the Arabic language, opening doors for further research in this field. Link to the project page: https://crns-smartvision.github.io/lrwar

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge