AbdElRahman A. ElSaid

The Ant Swarm Neuro-Evolution Procedure for Optimizing Recurrent Networks

Sep 27, 2019

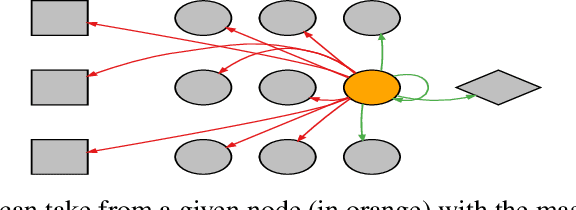

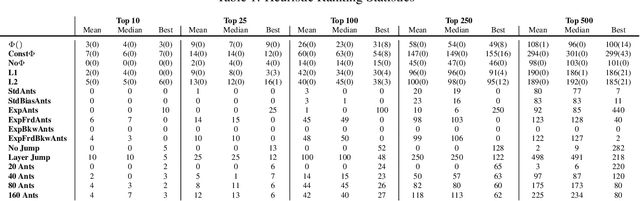

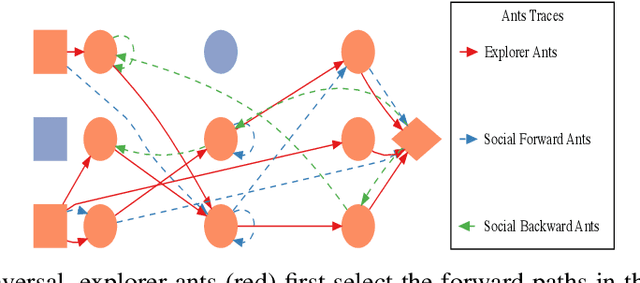

Abstract: Hand-crafting effective and efficient structures for recurrent neural networks (RNNs) is a difficult, expensive, and time-consuming process. To address this challenge, we propose a novel neuro-evolution algorithm based on ant colony optimization (ACO), called ant swarm neuro-evolution (ASNE), for directly optimizing RNN topologies. The procedure selects from multiple modern recurrent cell types, such as Delta-RNN, GRU, LSTM, MGU, and UGRNN cells, as well as recurrent connections that may span multiple layers and/or steps of time. To introduce an inductive bias that encourages the formation of sparser synaptic connectivity patterns, we investigate several variations of the core algorithm. We do so primarily by formulating different functions that drive the underlying pheromone simulation process (which mimic L1 and L2 regularization in standard machine learning) as well as by introducing ant agents with specialized roles (inspired by how real ant colonies operate), i.e., explorer ants that construct the initial feed-forward structure and social ants that select nodes from the feed-forward connections to subsequently craft recurrent memory structures. We also incorporate a Lamarckian strategy for weight initialization, which reduces the number of backpropagation epochs required to locally train candidate RNNs and so speeds up the neuro-evolution process. Our results demonstrate that the sparser RNNs evolved by ASNE significantly outperform traditional one- and two-layer architectures consisting of modern memory cells, as well as the well-known NEAT algorithm. Furthermore, we improve upon prior state-of-the-art results on the time series dataset utilized in our experiments.
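To make the pheromone idea in the abstract concrete, here is a minimal Python sketch of pheromone-weighted edge sampling with two decay rules, one subtractive (loosely L1-like) and one multiplicative (loosely L2-like). It is an illustration under stated assumptions, not the ASNE implementation: the function names, the rates, the floor and cap values, and the toy reward rule are all hypothetical and do not come from the paper.

```python
import random

def sample_edge(pheromones, rng):
    """Pick one edge with probability proportional to its pheromone level."""
    edges = list(pheromones)
    weights = [pheromones[e] for e in edges]
    return rng.choices(edges, weights=weights, k=1)[0]

def decay_l1(pheromones, rate=0.05, floor=0.01):
    """Subtract a constant amount from every edge (assumed L1-like shrinkage)."""
    return {e: max(floor, p - rate) for e, p in pheromones.items()}

def decay_l2(pheromones, rate=0.05, floor=0.01):
    """Shrink every edge in proportion to its value (assumed L2-like decay)."""
    return {e: max(floor, p * (1.0 - rate)) for e, p in pheromones.items()}

def reward(pheromones, edges, amount=0.5, cap=10.0):
    """Deposit pheromone on the edges used by a well-performing candidate."""
    out = dict(pheromones)
    for e in edges:
        out[e] = min(cap, out[e] + amount)
    return out

# Toy loop: three candidate edges out of an input node.
rng = random.Random(0)
pheromones = {("in", "h1"): 1.0, ("in", "h2"): 1.0, ("in", "h3"): 1.0}
for _ in range(20):
    chosen = sample_edge(pheromones, rng)
    pheromones = decay_l1(pheromones)
    # Hypothetical fitness signal: pretend edges into "h1" always help.
    if chosen[1] == "h1":
        pheromones = reward(pheromones, [chosen])
```

Under the subtractive rule, edges that are rarely rewarded drift toward the floor and are seldom sampled, which is one way such a process could favor sparser structures.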

An Empirical Exploration of Deep Recurrent Connections and Memory Cells Using Neuro-Evolution

Sep 27, 2019

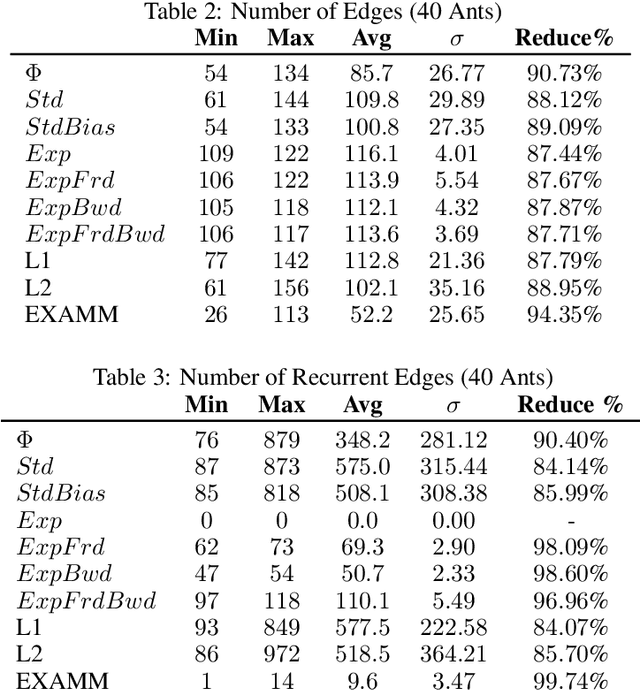

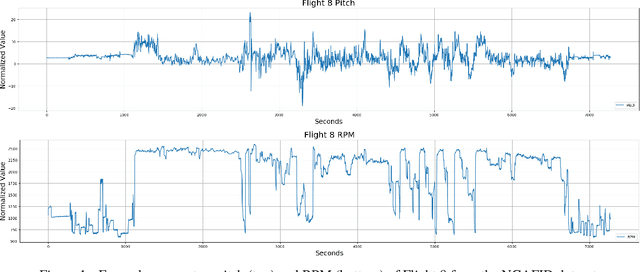

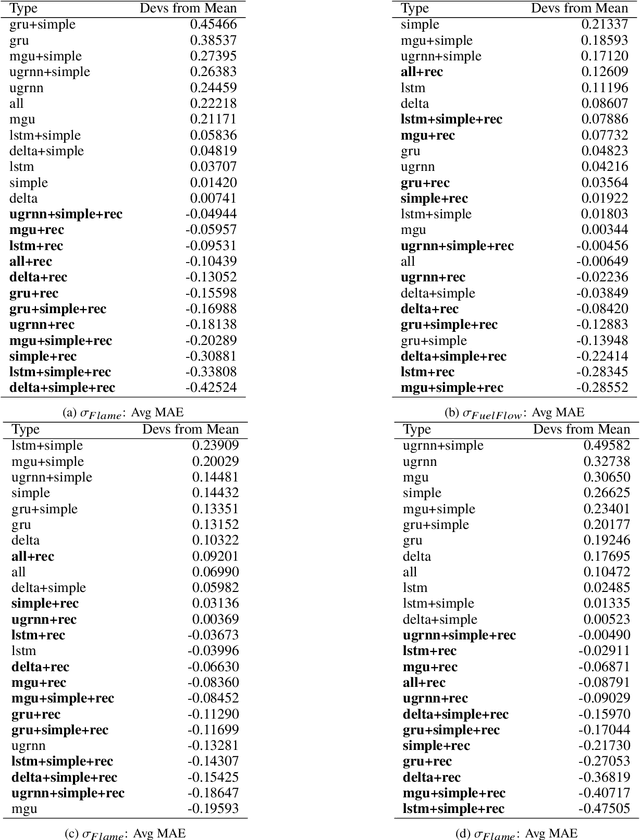

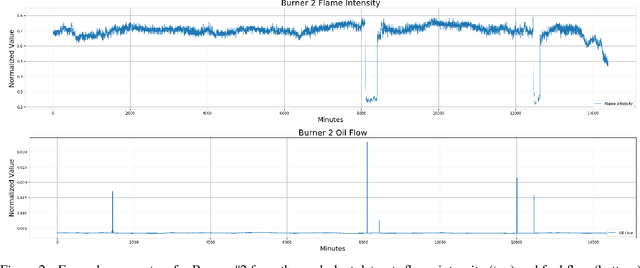

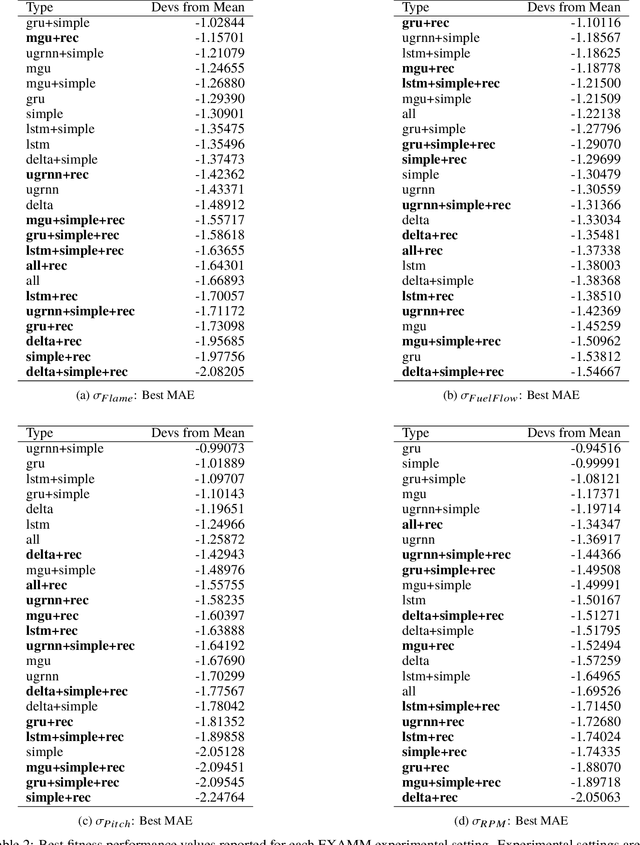

Abstract: Neuro-evolution and neural architecture search algorithms have gained increasing interest due to the challenges involved in designing optimal artificial neural networks (ANNs). While these algorithms have been shown to possess the potential to outperform the best human-crafted architectures, a less common use of them is as a tool for analysis of ANN structural components and connectivity structures. In this work, we focus on this particular use case to develop a rigorous examination and comparison framework for analyzing recurrent neural networks (RNNs) applied to time series prediction using the novel neuro-evolutionary process known as Evolutionary eXploration of Augmenting Memory Models (EXAMM). Specifically, we use our EXAMM-based analysis to investigate the capabilities of recurrent memory cells and the generalization ability afforded by various complex recurrent connectivity patterns that span one or more steps in time, i.e., deep recurrent connections. EXAMM, in this study, was used to train over 10.56 million RNNs in 5,280 repeated experiments with varying components. While many modern, often hand-crafted RNNs rely on complex memory cells (which have internal recurrent connections that only span a single time step), operating under the assumption that these sufficiently latch information and handle long-term dependencies, our results show that networks evolved with deep recurrent connections perform significantly better than those without. More importantly, in some cases, the best performing RNNs consisted of only simple neurons and deep time skip connections, without any memory cells. These results strongly suggest that utilizing deep time skip connections in RNNs for time series data prediction deserves further, dedicated study, and they also demonstrate the potential of neuro-evolution as a means to better study, understand, and train effective RNNs.
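As a rough illustration of what a deep time skip connection means, the following Python sketch runs a scalar recurrent unit built from a simple tanh neuron, with one ordinary recurrent connection (one step back) and one skip connection reaching `skip` steps back. This is a toy under stated assumptions, not EXAMM or any network from the paper: the function name, the weights, and the default skip depth of 3 are hypothetical.

```python
import math

def run_skip_rnn(inputs, w_in=0.5, w_rec=0.3, w_skip=0.2, skip=3):
    """Scalar RNN: h[t] = tanh(w_in*x[t] + w_rec*h[t-1] + w_skip*h[t-skip]).

    Hidden states before the start of the sequence are treated as 0.0.
    """
    hidden = []
    for t, x in enumerate(inputs):
        h_prev = hidden[t - 1] if t >= 1 else 0.0
        h_skip = hidden[t - skip] if t >= skip else 0.0
        hidden.append(math.tanh(w_in * x + w_rec * h_prev + w_skip * h_skip))
    return hidden

# An impulse at t=0 with the one-step recurrence switched off: the only
# path by which it can reach t=3 is the skip connection.
states = run_skip_rnn([1.0, 0.0, 0.0, 0.0], w_rec=0.0)
```

With `w_rec=0.0` the state at steps 1 and 2 is zero, yet step 3 still carries a trace of the impulse through the skip connection, with no gated memory cell involved.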
