Zero Cost Improvements for General Object Detection Network

Paper and Code

Nov 16, 2020

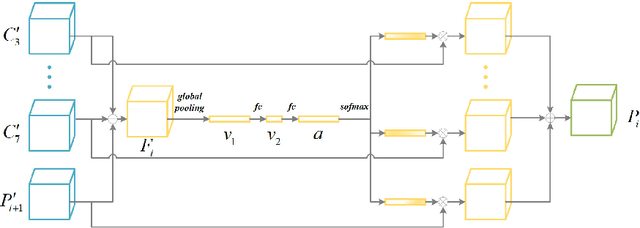

Modern object detection networks pursuit higher precision on general object detection datasets, at the same time the computation burden is also increasing along with the improvement of precision. Nevertheless, the inference time and precision are both critical to object detection system which needs to be real-time. It is necessary to research precision improvement without extra computation cost. In this work, two modules are proposed to improve detection precision with zero cost, which are focus on FPN and detection head improvement for general object detection networks. We employ the scale attention mechanism to efficiently fuse multi-level feature maps with less parameters, which is called SA-FPN module. Considering the correlation of classification head and regression head, we use sequential head to take the place of widely-used parallel head, which is called Seq-HEAD module. To evaluate the effectiveness, we apply the two modules to some modern state-of-art object detection networks, including anchor-based and anchor-free. Experiment results on coco dataset show that the networks with the two modules can surpass original networks by 1.1 AP and 0.8 AP with zero cost for anchor-based and anchor-free networks, respectively. Code will be available at https://git.io/JTFGl.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge