Why model why? Assessing the strengths and limitations of LIME


Nov 30, 2020

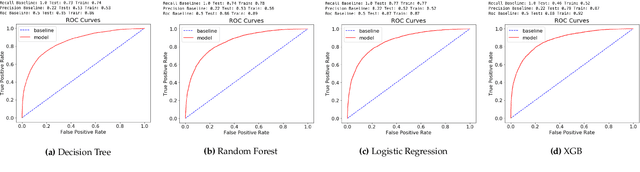

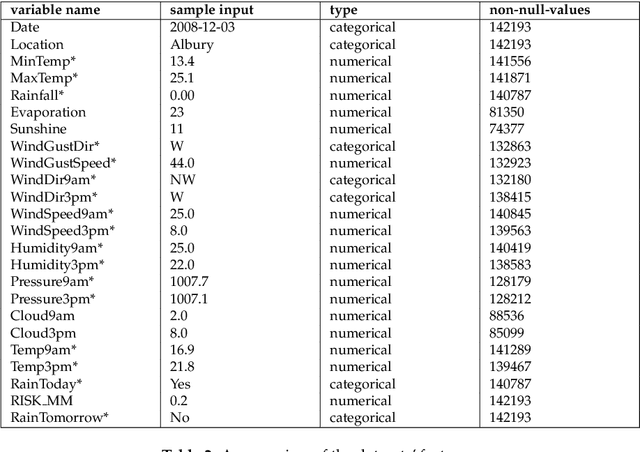

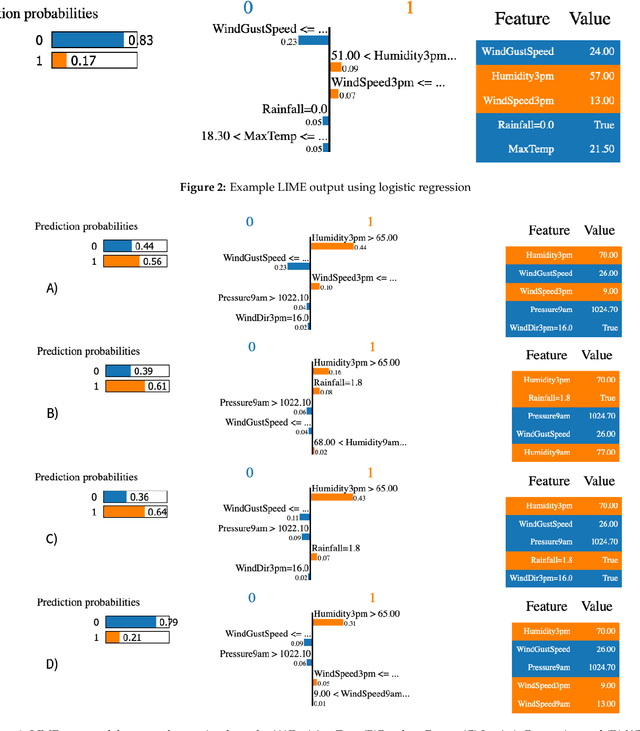

When it comes to complex machine learning models, commonly referred to as black boxes, understanding the underlying decision-making process is crucial in domains such as healthcare and financial services, as well as when such models are used in safety-critical systems such as autonomous vehicles. As such, interest in explainable artificial intelligence (xAI) tools and techniques has increased in recent years. However, the effectiveness of existing xAI frameworks, especially for algorithms that work with tabular data as opposed to images, is still an open research question. To address this gap, in this paper we examine the effectiveness of the Local Interpretable Model-Agnostic Explanations (LIME) xAI framework, one of the most popular model-agnostic frameworks in the literature, with a specific focus on its performance in making tabular models more interpretable. In particular, we apply several state-of-the-art machine learning algorithms to a tabular dataset and demonstrate how LIME can be used to supplement conventional performance assessment methods. In addition, we evaluate the understandability of the output produced by LIME via a usability study involving participants who are not familiar with LIME, and its overall usability via an assessment framework derived from the International Organisation for Standardisation (ISO) 9241-11:1998 standard.
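For readers unfamiliar with LIME, its core idea on tabular data can be summarised as follows. Perturb the instance being explained, query the black-box model on the perturbed samples, weight each sample by its proximity to the instance, and fit a weighted linear surrogate whose coefficients serve as the local explanation. The code below is a minimal NumPy sketch under stated assumptions. It is not the `lime` package's API or the paper's exact setup. `local_surrogate_sketch` is a hypothetical helper. Features are assumed continuous and are perturbed with Gaussian noise scaled by a per-feature standard deviation. The exponential proximity kernel, its width and the ridge penalty are illustrative choices. The quantile discretisation that the `lime` package applies to continuous features by default is omitted.

```python
import numpy as np


def local_surrogate_sketch(predict_fn, x, feature_std, num_samples=5000,
                           kernel_width=None, ridge=1.0, seed=0):
    """Hypothetical LIME-style explanation of a single tabular instance.

    Assumptions (not the lime package API): continuous features, Gaussian
    perturbations scaled by feature_std, an exponential proximity kernel on
    the standardised distance, and a weighted ridge-regression surrogate.
    Returns (intercept, coefficients) of the local linear surrogate, with
    coefficients expressed per standard deviation of each feature.
    """
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)
    feature_std = np.asarray(feature_std, dtype=float)
    d = x.shape[0]
    if kernel_width is None:
        kernel_width = 0.75 * np.sqrt(d)  # illustrative width choice

    # Standardised perturbations; the first row is the instance itself.
    z = rng.normal(size=(num_samples, d))
    z[0] = 0.0
    samples = x + z * feature_std

    # Query the black-box model on the perturbed samples.
    y = np.asarray(predict_fn(samples), dtype=float)

    # Proximity weights: closer samples matter more.
    dist = np.linalg.norm(z, axis=1)
    weights = np.exp(-(dist ** 2) / kernel_width ** 2)

    # Weighted ridge regression with an unpenalised intercept column.
    design = np.hstack([np.ones((num_samples, 1)), z])
    penalty = ridge * np.diag(np.r_[0.0, np.ones(d)])
    lhs = design.T @ (weights[:, None] * design) + penalty
    rhs = design.T @ (weights * y)
    coef = np.linalg.solve(lhs, rhs)
    return coef[0], coef[1:]


if __name__ == "__main__":
    # Toy black box: a linear score, so the expected explanation is known.
    w = np.array([2.0, -1.0, 0.5])
    x0 = np.array([1.0, 2.0, 3.0])
    std = np.array([1.0, 0.5, 2.0])
    intercept, coefs = local_surrogate_sketch(lambda X: X @ w, x0, std)
    print("intercept:", round(intercept, 3))
    print("per-feature weights:", np.round(coefs, 3))
```

As a sanity check, explaining a purely linear model should approximately recover its weights multiplied by each feature's standard deviation (here roughly `[2.0, -0.5, 1.0]`), because the surrogate is fitted in standardised perturbation space. For a nonlinear classifier, `predict_fn` would instead return the predicted probability of the class of interest, and the coefficients then describe the model's behaviour only in the neighbourhood of `x`.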
