What and How Are We Reporting in HRI? A Review and Recommendations for Reporting Recruitment, Compensation, and Gender


Jan 22, 2022

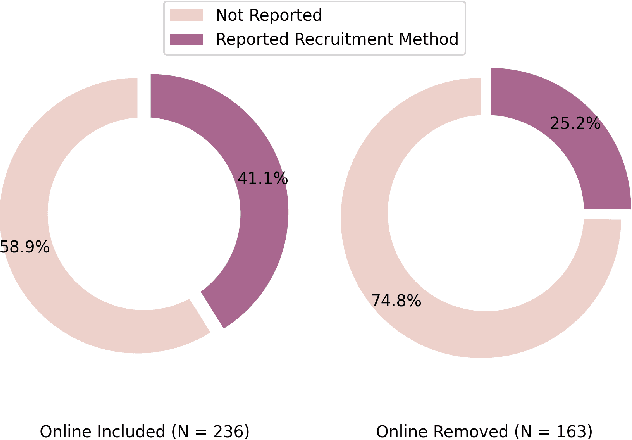

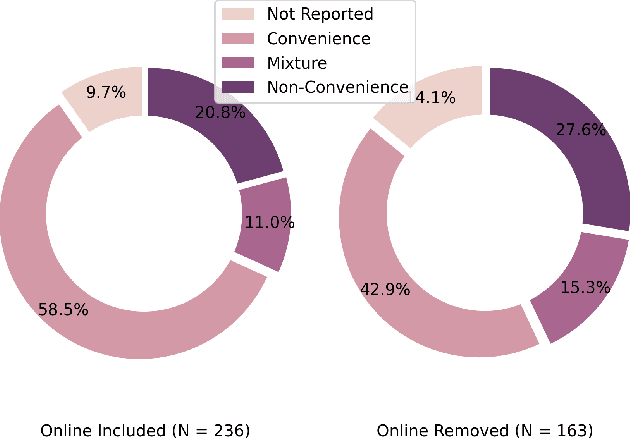

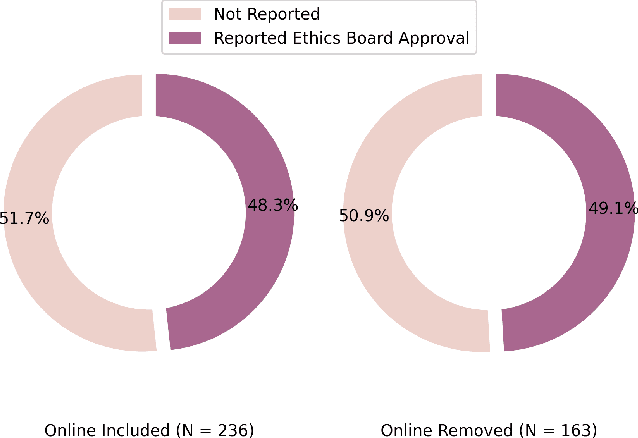

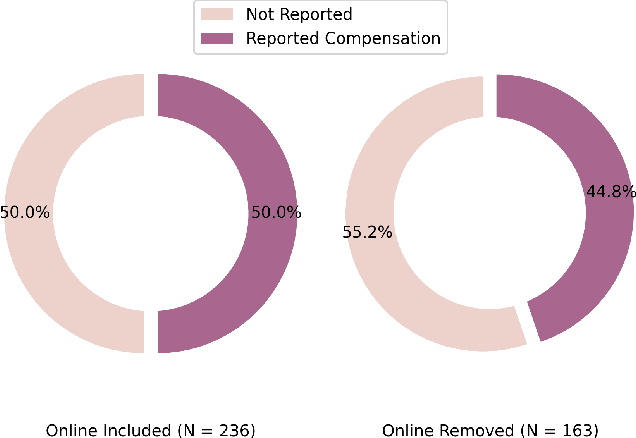

Reproducible studies and results that generalize to broadly inclusive populations are crucial in any research. Previous meta-analyses in HRI have focused on the consistency of reported information from papers in various categories. However, members of the HRI community have noted that much of the information needed for reproducible and generalizable studies is not found in published papers. We address this issue by surveying the study metadata reported over the past three years (2019 through 2021) in the main proceedings of the International Conference on Human-Robot Interaction (HRI) as well as alt.HRI. Based on the results of this analysis, we propose a set of recommendations for the HRI community that follow the longer-standing reporting guidelines from human-computer interaction (HCI), psychology, and other fields most related to HRI. Finally, we examine three key areas for user study reproducibility: recruitment details, participant compensation, and participant gender. We find a lack of reporting within each of these study metadata categories: of the 236 studies, 139 failed to report recruitment method, 118 failed to report compensation, and 62 failed to report gender data. This analysis therefore provides guidance about the specific types of reporting improvements needed in HRI.
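
To put the reported counts on a common scale, the short Python sketch below converts them into shares of the 236 surveyed studies. The counts come from the abstract; the variable and function names are illustrative assumptions and are not taken from the paper or its code.

```python
# Counts quoted in the abstract: studies (out of 236) that did NOT report each item.
TOTAL_STUDIES = 236
missing = {
    "recruitment method": 139,
    "compensation": 118,
    "gender": 62,
}


def missing_share(count, total=TOTAL_STUDIES):
    """Return the percentage of studies that omitted a metadata category."""
    return 100 * count / total


# Hypothetical summary table, rounded to one decimal place.
shares = {category: round(missing_share(n), 1) for category, n in missing.items()}

for category, pct in shares.items():
    print(f"{category}: {pct}% of studies did not report it")
```

Under these counts, roughly 59% of studies omitted the recruitment method, exactly half omitted compensation, and about 26% omitted gender data.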
