WARP-LCA: Efficient Convolutional Sparse Coding with Locally Competitive Algorithm

Oct 24, 2024

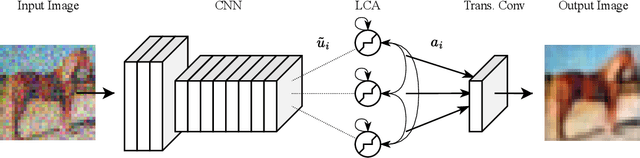

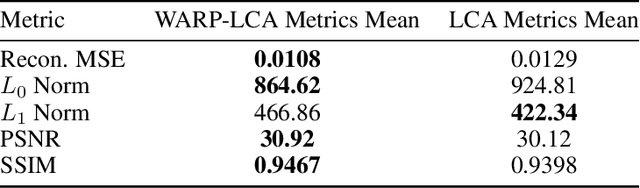

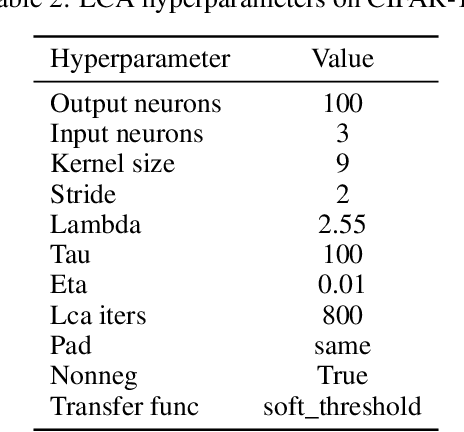

The locally competitive algorithm (LCA) solves sparse coding problems across a wide range of use cases. Recently, convolution-based LCA approaches have been shown to be highly effective at improving the robustness of image recognition in vision pipelines. To further maximize representational sparsity, LCA can be combined with hard-thresholding. While this combination often yields very good solutions that satisfy an $\ell_0$ sparsity criterion, it has two significant drawbacks in practice: (i) LCA is inefficient, typically requiring hundreds of optimization cycles to converge; (ii) hard-thresholding makes the loss function non-convex, so the optimization may settle in suboptimal minima. To address these issues, we propose the Locally Competitive Algorithm with State Warm-up via Predictive Priming (WARP-LCA), which uses a predictor network to provide a suitable initial guess of the LCA state based on the current input. Our approach significantly improves both convergence speed and solution quality, while maintaining and even enhancing the overall strengths of LCA. We demonstrate that WARP-LCA converges faster by orders of magnitude and reaches better minima than conventional LCA. Moreover, the learned representations are sparser and show superior reconstruction and denoising quality, as well as greater robustness when applied in deep recognition pipelines. Furthermore, we apply WARP-LCA to image denoising tasks, showcasing its robustness and practical effectiveness. Our findings confirm that the naive use of LCA with hard-thresholding results in suboptimal minima, whereas initializing LCA with a predictive guess leads to better outcomes. This research advances the field of biologically inspired deep learning by providing a novel approach to convolutional sparse coding.
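To make the role of the initial state concrete, here is a minimal sketch of the standard LCA update with hard-thresholding. It is not the paper's implementation: it uses a dense (non-convolutional) dictionary for brevity, the names `lca`, `hard_threshold`, and `u0` and all parameter values are illustrative assumptions, and the predictor network is not modeled. The optional `u0` argument only marks the place where a predicted state would be supplied instead of the usual all-zero start.

```python
import numpy as np

def hard_threshold(u, lam):
    """Keep entries whose magnitude exceeds lam; set the rest to zero."""
    return np.where(np.abs(u) > lam, u, 0.0)

def lca(x, Phi, lam=0.1, tau=10.0, n_steps=200, u0=None):
    """Dense LCA sketch (assumption: unit-norm dictionary columns in Phi).

    x   : input vector, shape (d,)
    Phi : dictionary, shape (d, k)
    u0  : optional initial membrane state, shape (k,); a warm start
          (hypothetically, the output of a predictor) goes here.
    Returns the final state u and the sparse code a = T_lam(u).
    """
    b = Phi.T @ x                              # feed-forward drive
    G = Phi.T @ Phi - np.eye(Phi.shape[1])     # lateral inhibition weights
    u = np.zeros_like(b) if u0 is None else np.array(u0, dtype=float)
    for _ in range(n_steps):
        a = hard_threshold(u, lam)
        # Euler step of du/dt = (b - u - G a) / tau
        u += (b - u - G @ a) / tau
    return u, hard_threshold(u, lam)

# Toy usage with an orthonormal dictionary, where the fixed point is u = Phi^T x.
Phi = np.eye(4)
x = np.array([1.0, 0.0, 0.05, -2.0])
u_cold, a_cold = lca(x, Phi)                   # cold start from zeros
u_warm, a_warm = lca(x, Phi, n_steps=0, u0=x)  # warm start already at the fixed point
```

In this toy case the warm start needs no iterations at all, which is the extreme version of the effect described above. With an overcomplete or convolutional dictionary, the starting state also influences which minimum of the non-convex objective the dynamics reach.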
