Visual Summary of Value-level Feature Attribution in Prediction Classes with Recurrent Neural Networks

Jan 23, 2020

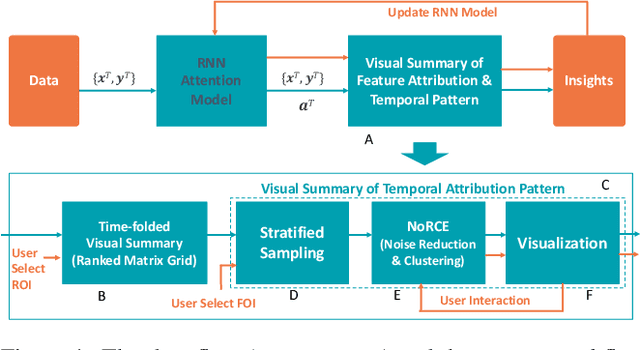

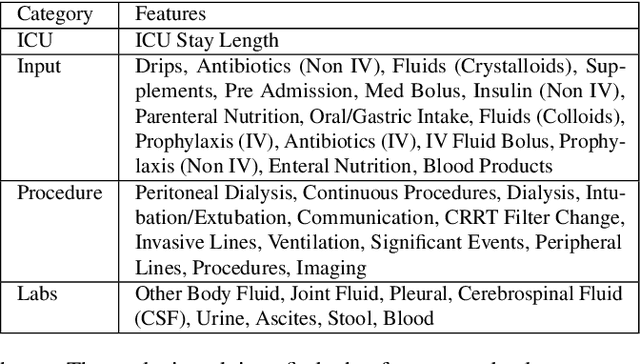

Deep recurrent neural networks (RNNs) are increasingly used in decision-making with temporal sequences. However, understanding how RNN models produce final predictions remains a major challenge. Existing work on interpreting RNN models for sequence predictions often focuses on explaining predictions for individual data instances (e.g., patients or students). Because state-of-the-art predictive models are formed with millions of parameters optimized over millions of instances, explaining predictions for single data instances can easily miss the bigger picture. Moreover, many high-performing RNN models use multi-hot encoding to represent the presence or absence of features, in which case the attribution of individual feature values is not interpretable. We present ViSFA, an interactive system that visually summarizes feature attribution over time for different feature values. ViSFA scales to large datasets such as MIMIC, which contains electronic health records with 1.2 million high-dimensional temporal events. We demonstrate that ViSFA can help us reason about RNN predictions and uncover insights from data by distilling complex attribution into compact and easy-to-interpret visualizations.
