Visual Imitation Learning with Recurrent Siamese Networks

Paper and Code

Jan 22, 2019

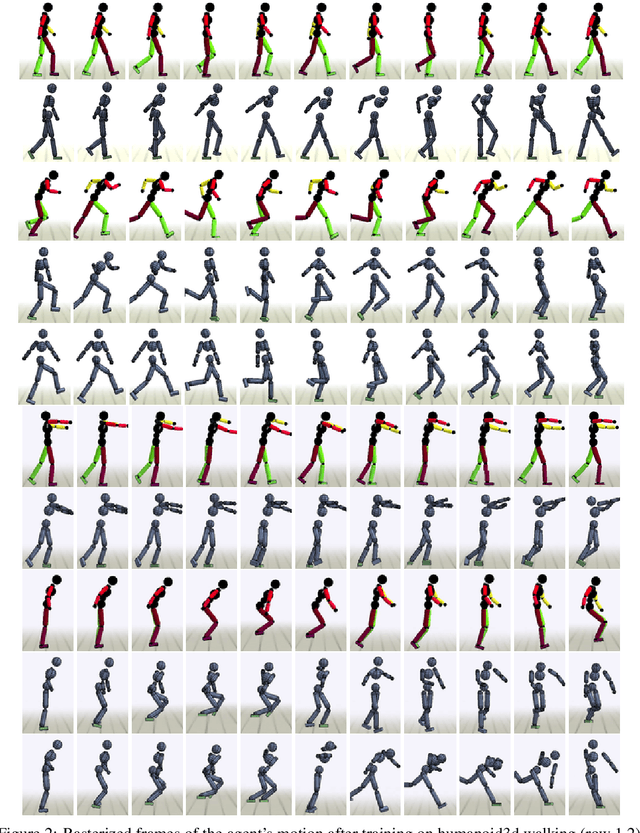

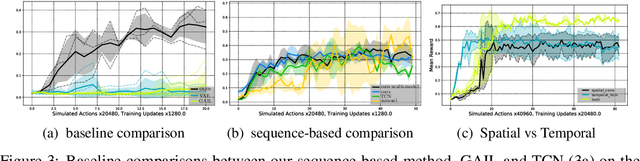

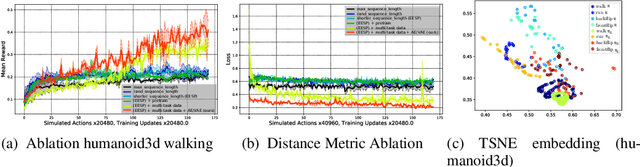

When learning to imitate a specific task, people solve the difficult problem of identifying the salient features of their observations of others and relating those features to their own state. In this work, we train a comparator network that computes distances between motions. Given a desired motion, the comparator provides a reward signal to the agent via the distance between the desired motion and the agent's motion. We train an RNN-based comparator model to compute distances in space and time between motion clips while training an RL policy to minimize this distance. Furthermore, we examine a challenging form of this problem where only a single demonstration is provided for a given task. We demonstrate our approach in the setting of deep-learning-based control for physical simulation of humanoid walking, both in 2D with $10$ degrees of freedom (DoF) and in 3D with $38$ DoF.
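The distance-as-reward idea can be illustrated with a minimal sketch. It assumes a tiny, untrained tanh RNN with shared weights for both clips, a Euclidean distance between final hidden states, and a reward equal to the negative distance. The class and function names (`RecurrentEncoder`, `siamese_distance`, `imitation_reward`), the hidden size, and the reward form are all hypothetical choices for illustration; the abstract does not specify the architecture or the exact reward used in the paper.

```python
import numpy as np


class RecurrentEncoder:
    """Tiny tanh RNN; a hypothetical stand-in for the RNN-based comparator."""

    def __init__(self, obs_dim, hidden_dim, seed=0):
        rng = np.random.default_rng(seed)
        # Random, untrained weights: in practice these would be learned.
        self.W_in = rng.normal(scale=0.1, size=(hidden_dim, obs_dim))
        self.W_h = rng.normal(scale=0.1, size=(hidden_dim, hidden_dim))
        self.b = np.zeros(hidden_dim)

    def encode(self, clip):
        """Map a motion clip of shape (T, obs_dim) to its final hidden state."""
        h = np.zeros(self.b.shape[0])
        for frame in clip:
            h = np.tanh(self.W_in @ frame + self.W_h @ h + self.b)
        return h


def siamese_distance(encoder, clip_a, clip_b):
    """Encode both clips with the SAME weights and compare the embeddings."""
    diff = encoder.encode(clip_a) - encoder.encode(clip_b)
    return float(np.linalg.norm(diff))


def imitation_reward(encoder, demo_clip, agent_clip):
    """Assumed reward form: negative distance, so closer motions score higher."""
    return -siamese_distance(encoder, demo_clip, agent_clip)


# Illustrative data: 30-frame clips with 10 features per frame (an arbitrary choice).
encoder = RecurrentEncoder(obs_dim=10, hidden_dim=16)
rng = np.random.default_rng(1)
demo = rng.normal(size=(30, 10))
agent = demo + 0.5 * rng.normal(size=(30, 10))

print(imitation_reward(encoder, demo, demo))   # identical clips give zero distance
print(imitation_reward(encoder, demo, agent))  # a perturbed clip gives a negative reward
```

Because the encoder consumes a clip frame by frame, the two clips need not have the same length. An RL policy trained against such a reward is pushed toward motions whose embeddings lie close to the demonstration's embedding.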
