Visual Attention Graph

Paper and Code

Mar 11, 2025

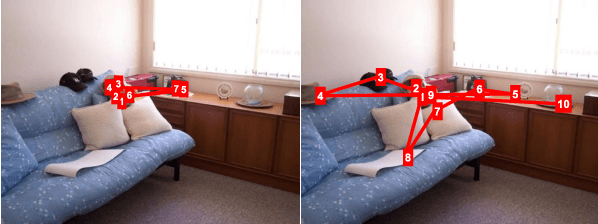

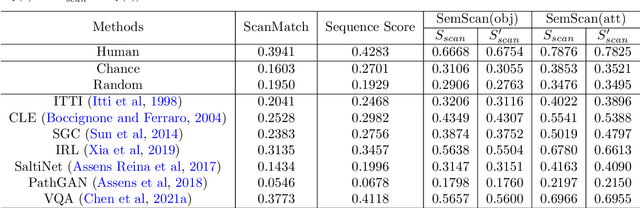

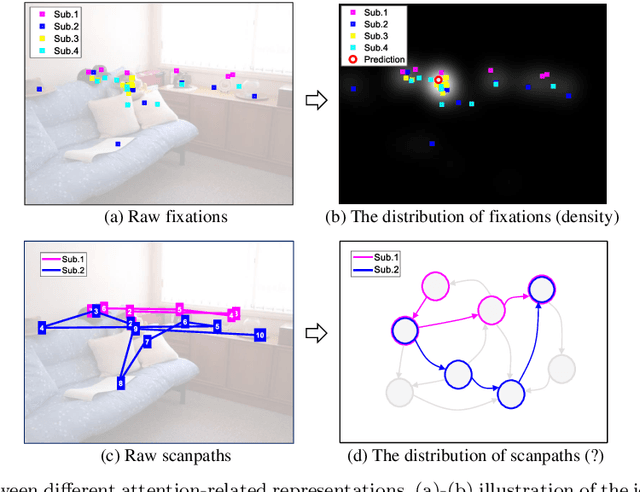

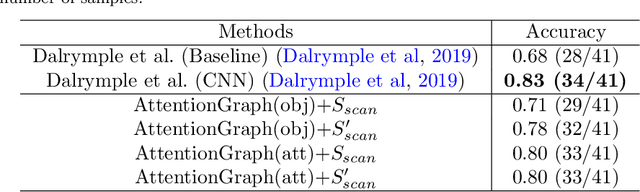

Visual attention plays a critical role when our visual system executes active visual tasks by interacting with the physical scene. However, how to encode the visual object relationship in the psychological world of our brain deserves to be explored. In the field of computer vision, predicting visual fixations or scanpaths is a usual way to explore the visual attention and behaviors of human observers when viewing a scene. Most existing methods encode visual attention using individual fixations or scanpaths based on the raw gaze shift data collected from human observers. This may not capture the common attention pattern well, because without considering the semantic information of the viewed scene, raw gaze shift data alone contain high inter- and intra-observer variability. To address this issue, we propose a new attention representation, called Attention Graph, to simultaneously code the visual saliency and scanpath in a graph-based representation and better reveal the common attention behavior of human observers. In the attention graph, the semantic-based scanpath is defined by the path on the graph, while saliency of objects can be obtained by computing fixation density on each node. Systemic experiments demonstrate that the proposed attention graph combined with our new evaluation metrics provides a better benchmark for evaluating attention prediction methods. Meanwhile, extra experiments demonstrate the promising potentials of the proposed attention graph in assessing human cognitive states, such as autism spectrum disorder screening and age classification.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge