Vision Transformers with Natural Language Semantics

Feb 27, 2024

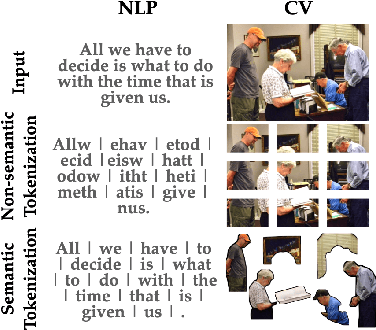

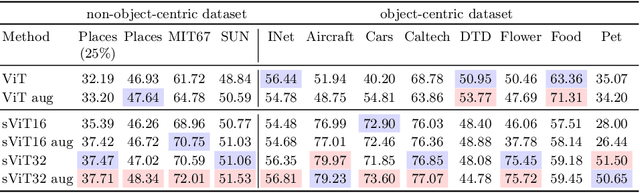

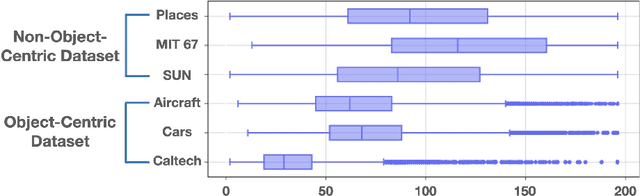

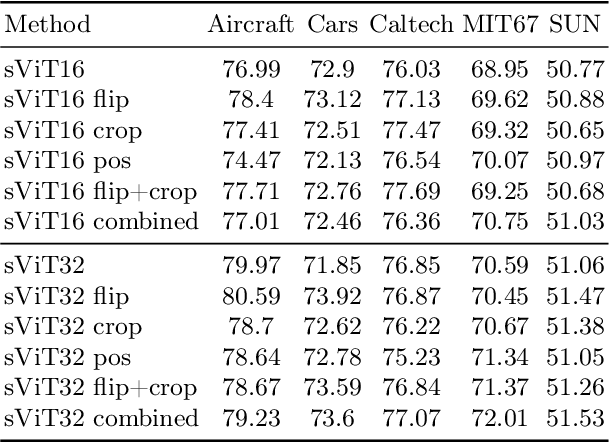

Tokens (or patches) in Vision Transformers (ViT) lack the semantic information carried by their counterparts in natural language processing (NLP). ViT tokens typically correspond to rectangular image patches with no specific semantic context, which makes them hard to interpret and poorly suited to encapsulating information. We introduce a novel transformer model, Semantic Vision Transformers (sViT), which leverages recent progress in segmentation models to design novel tokenizer strategies. sViT harnesses semantic information, creating an inductive bias reminiscent of convolutional neural networks while still capturing the global dependencies and contextual information within images that are characteristic of transformers. Validated on real datasets, sViT outperforms ViT, requiring less training data while maintaining similar or superior performance. Furthermore, sViT shows significantly better out-of-distribution generalization and robustness to natural distribution shifts, attributed to its scale-invariant semantic characteristic. Notably, the use of semantic tokens significantly enhances the model's interpretability. Lastly, the proposed paradigm facilitates the introduction of new and powerful augmentation techniques at the token (or segment) level, increasing training data diversity and generalization capabilities. Just as sentences are made of words, images are formed by semantic objects; our proposed methodology leverages recent progress in object segmentation and takes an important and natural step toward interpretable and robust vision transformers.
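The abstract does not spell out the tokenizer, so the following is only a minimal sketch of what a segment-level tokenizer could look like, not the authors' implementation. It assumes an integer label mask is already available from some segmentation model; the function name `segment_tokens`, the `token_size` parameter, and the nearest-neighbour resize are all hypothetical choices made for illustration.

```python
import numpy as np

def segment_tokens(image, mask, token_size=8):
    """Hypothetical sketch: one fixed-size token per labeled segment.

    image: float array of shape (H, W, C)
    mask:  int array of shape (H, W), one label per pixel (assumed given)
    """
    tokens = []
    for label in np.unique(mask):
        ys, xs = np.nonzero(mask == label)
        y0, y1 = ys.min(), ys.max() + 1
        x0, x1 = xs.min(), xs.max() + 1
        # Crop the segment's bounding box and zero out pixels of other segments.
        inside = (mask[y0:y1, x0:x1] == label)[..., None]
        crop = image[y0:y1, x0:x1] * inside
        # Nearest-neighbour resize of the crop to token_size x token_size.
        rows = np.arange(token_size) * crop.shape[0] // token_size
        cols = np.arange(token_size) * crop.shape[1] // token_size
        resized = crop[rows][:, cols]
        tokens.append(resized.reshape(-1))
    # Shape: (number of segments, token_size * token_size * C)
    return np.stack(tokens)

# Toy input: a random 16x16 RGB image split into a left and a right segment.
rng = np.random.default_rng(0)
image = rng.random((16, 16, 3))
mask = np.zeros((16, 16), dtype=int)
mask[:, 8:] = 1
tokens = segment_tokens(image, mask)
```

Because every segment is resized to the same token shape regardless of its size in the image, a segment that appears larger or smaller maps to a similar token. This is one plausible reading of the scale-invariance property the abstract mentions, stated here as an assumption.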