Variational Question-Answer Pair Generation for Machine Reading Comprehension

Apr 07, 2020

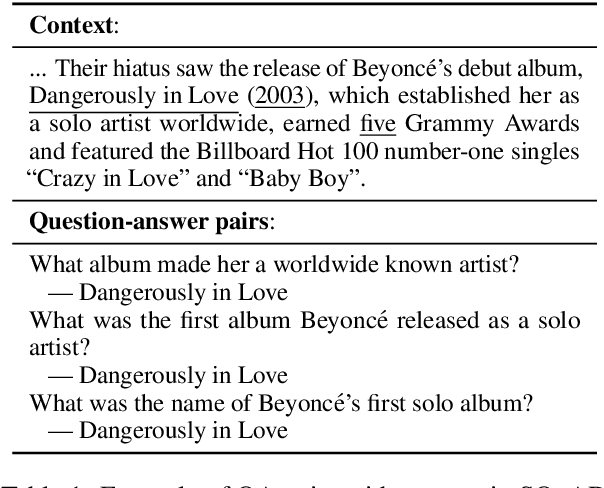

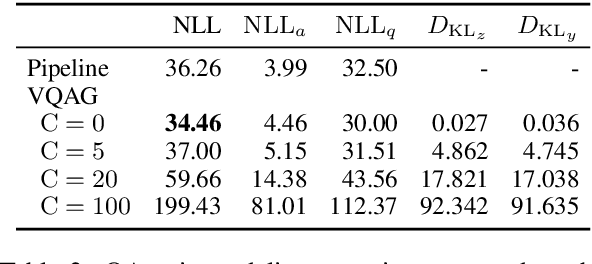

We present a deep generative model of question-answer (QA) pairs for machine reading comprehension. The model introduces two independent latent random variables so that answers and questions can be diversified separately. We also study the effect of explicitly controlling the KL term in the variational lower bound to avoid "posterior collapse", where the model ignores the latent variables and generates nearly identical QA pairs. Our experiments on SQuAD v1.1 show that variational methods can aid QA pair modeling capacity, and that controlling the KL term can significantly improve diversity while generating high-quality questions and answers comparable to those of existing systems.
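
To make the idea of controlling the KL term concrete, here is a minimal sketch. The abstract does not state the exact objective, so everything here is an assumption for illustration: diagonal Gaussian posteriors with standard normal priors, one latent for the answer and one for the question, and a penalty of the form `|KL - C|` that pulls each KL term toward a target value `C`. The function names `gaussian_kl` and `controlled_kl_loss` are hypothetical and do not come from the paper's code.

```python
import math


def gaussian_kl(mu, logvar):
    """KL( N(mu, diag(exp(logvar))) || N(0, I) ), summed over dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - lv - 1.0 for m, lv in zip(mu, logvar))


def controlled_kl_loss(recon_nll, kl_terms, targets):
    """Assumed objective: reconstruction NLL plus |KL_i - C_i| per latent.

    Penalizing the distance to a positive target C_i (rather than the KL
    itself) discourages the KL from shrinking to zero, which is the
    posterior-collapse case where the latent carries no information.
    """
    return recon_nll + sum(abs(kl - c) for kl, c in zip(kl_terms, targets))


# Two independent latents, as in the abstract: one for answers, one for questions.
kl_answer = gaussian_kl([0.0, 0.0], [0.0, 0.0])     # posterior equals the prior
kl_question = gaussian_kl([1.0, -1.0], [0.0, 0.0])  # posterior shifted from the prior

# Illustrative numbers only: a reconstruction NLL of 10.0 and targets C = 1.0.
loss = controlled_kl_loss(10.0, [kl_answer, kl_question], [1.0, 1.0])
print(kl_answer, kl_question, loss)
```

In this toy setting the collapsed answer latent (KL of zero) is penalized for missing its target, while the question latent already sits at its target and adds nothing to the loss. A plain variational lower bound would instead reward the collapsed latent.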
