Value-laden Disciplinary Shifts in Machine Learning

Dec 03, 2019

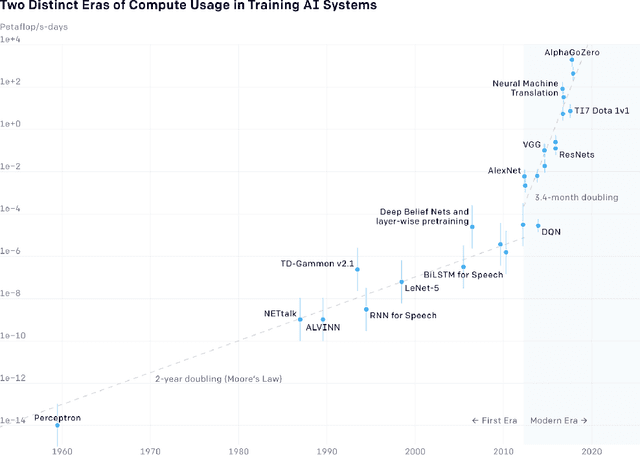

As machine learning models are increasingly used for high-stakes decision making, scholars have sought to intervene to ensure that such models do not encode undesirable social and political values. However, little attention has so far been given to how values influence the machine learning discipline as a whole. How do values influence what the discipline focuses on and the way it develops? If undesirable values are at play at the level of the discipline, then intervening on particular models will not suffice to address the problem. Instead, interventions at the disciplinary level are required. This paper analyzes the discipline of machine learning through the lens of philosophy of science. We develop a conceptual framework to evaluate the process through which types of machine learning models (e.g., neural networks, support vector machines, graphical models) become predominant. The rise and fall of model-types is often framed as objective progress. However, such disciplinary shifts are more nuanced. First, we argue that the rise of a model-type is self-reinforcing: it influences the way model-types are evaluated. For example, the rise of deep learning was entangled with a greater focus on evaluations in compute-rich and data-rich environments. Second, the way model-types are evaluated encodes loaded social and political values. For example, a greater focus on evaluations in compute-rich and data-rich environments encodes values about centralization of power, privacy, and environmental concerns.
