Value function approximation via low-rank models


Aug 31, 2015

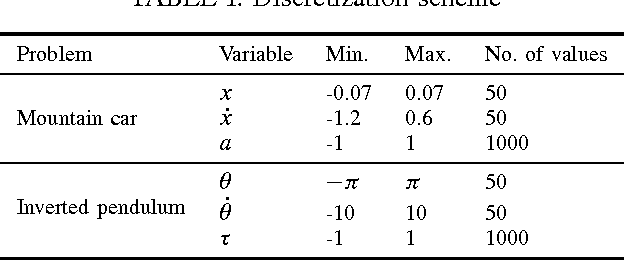

We propose a novel value function approximation technique for Markov decision processes. We consider the problem of compactly representing the state-action value function using a low-rank and sparse matrix model. The task is to decompose a matrix that encodes the true value function into low-rank and sparse components, which we achieve using Robust Principal Component Analysis (Robust PCA). Under minimal assumptions, this Robust PCA problem can be solved exactly via the Principal Component Pursuit convex optimization problem. We test the procedure on several examples and demonstrate that our method yields approximations that are essentially identical to the true value function.
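Principal Component Pursuit splits a matrix $M$ into a low-rank part $L$ and a sparse part $S$ by solving

$$\min_{L,S}\ \|L\|_* + \lambda \|S\|_1 \quad \text{subject to} \quad L + S = M,$$

where $\|L\|_*$ is the nuclear norm (sum of singular values) and $\|S\|_1$ is the entrywise $\ell_1$ norm. The sketch below is a minimal illustration, not the authors' code. It assumes the state-action values are arranged as a matrix with one row per state and one column per action, and it solves PCP with the standard augmented Lagrangian (ADMM) iteration of Candès et al., using their default choices $\lambda = 1/\sqrt{\max(m,n)}$ and $\mu = mn / (4\|M\|_1)$. The function names and the synthetic "Q matrix" are hypothetical.

```python
import numpy as np


def shrink(X, tau):
    # Entrywise soft-thresholding: proximal operator of tau * ||X||_1.
    return np.sign(X) * np.maximum(np.abs(X) - tau, 0.0)


def svd_threshold(X, tau):
    # Singular value thresholding: proximal operator of tau * ||X||_*.
    U, s, Vt = np.linalg.svd(X, full_matrices=False)
    return (U * np.maximum(s - tau, 0.0)) @ Vt


def principal_component_pursuit(M, lam=None, mu=None, tol=1e-7, max_iter=2000):
    """Hypothetical helper: split M into low-rank L and sparse S by solving
    min ||L||_* + lam * ||S||_1  s.t.  L + S = M  with ADMM."""
    m, n = M.shape
    if lam is None:
        lam = 1.0 / np.sqrt(max(m, n))
    if mu is None:
        mu = m * n / (4.0 * np.abs(M).sum())
    L = np.zeros_like(M)
    S = np.zeros_like(M)
    Y = np.zeros_like(M)  # Lagrange multiplier for the constraint L + S = M
    norm_M = np.linalg.norm(M)
    for _ in range(max_iter):
        L = svd_threshold(M - S + Y / mu, 1.0 / mu)  # low-rank update
        S = shrink(M - L + Y / mu, lam / mu)          # sparse update
        residual = M - L - S
        Y = Y + mu * residual                          # dual ascent step
        if np.linalg.norm(residual) <= tol * norm_M:
            break
    return L, S


# Synthetic example (hypothetical data): a rank-3 "Q matrix" over
# 100 states x 100 actions, with 5% of its entries grossly corrupted.
rng = np.random.default_rng(0)
n_states, n_actions, rank = 100, 100, 3
L_true = rng.standard_normal((n_states, rank)) @ rng.standard_normal((rank, n_actions))
S_true = np.zeros((n_states, n_actions))
mask = rng.random((n_states, n_actions)) < 0.05
S_true[mask] = rng.uniform(-10, 10, mask.sum())
Q = L_true + S_true

L_hat, S_hat = principal_component_pursuit(Q)
print(np.linalg.norm(L_hat - L_true) / np.linalg.norm(L_true))
```

The low-rank factor `L_hat` is the compact part of the representation: it can be stored as a few singular vectors, while `S_hat` holds the few state-action entries that do not fit the low-rank structure.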

* arXiv admin note: substantial text overlap with arXiv:0912.3599 by other authors
