Using Machine Learning to Discover Parsimonious and Physically-Interpretable Representations of Catchment-Scale Rainfall-Runoff Dynamics

Paper and Code

Dec 06, 2024

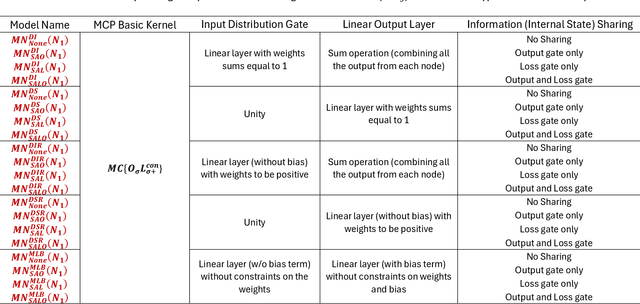

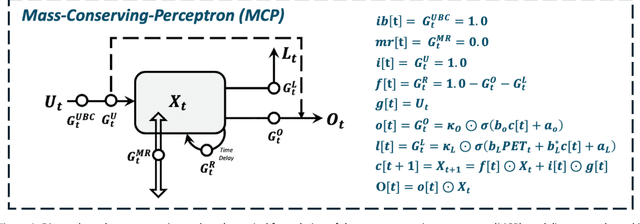

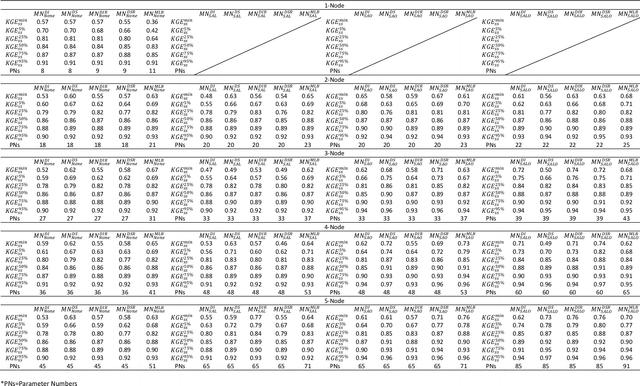

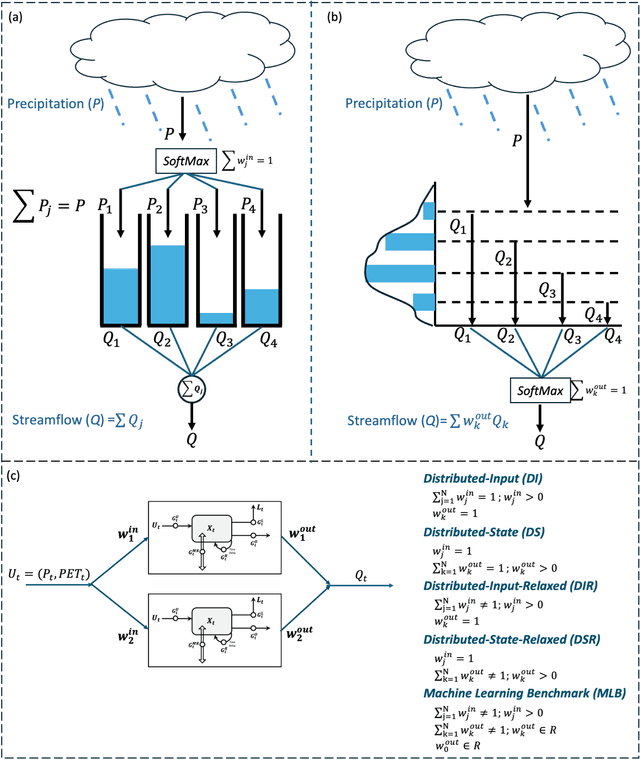

Despite the excellent real-world predictive performance of modern machine learning (ML) methods, many scientists remain hesitant to discard traditional physical-conceptual (PC) approaches, mainly because of their relative interpretability, which contributes to credibility during decision-making. In this context, a currently underexplored aspect of ML is how to develop minimally-optimal representations that can facilitate better insight into system functioning. Regardless of how this is achieved, it is arguably true that parsimonious representations better support the advancement of scientific understanding. Our own view is that ML-based modeling of geoscientific systems should be based on the use of computational units that are fundamentally interpretable by design. This paper continues our exploration of how the strengths of ML can be exploited in the service of better understanding via scientific investigation. Here, we use the Mass Conserving Perceptron (MCP) as the fundamental computational unit in a generic network architecture consisting of nodes arranged in series and parallel, and use it to explore several generic and important issues related to the use of observational data for constructing input-state-output models of dynamical systems. In the context of lumped catchment modeling, we show that physical interpretability and excellent predictive performance can both be achieved using a relatively parsimonious distributed-state multiple-flow-path network with context-dependent gating and information sharing across the nodes, suggesting that MCP-based modeling can play a significant role in the application of ML to geoscientific investigation.
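To make the idea of a mass-conserving, gated storage unit more concrete, the following is a minimal Python sketch of a single node. It is not the authors' MCP formulation, which the abstract does not spell out. The softmax gate form, the function names `gated_step` and `simulate`, and the parameters `w_out`, `b_out`, `w_loss`, and `b_loss` are all assumptions made for illustration. The only property the sketch is meant to show is that water entering the node is either released as flow, lost, or retained in storage, with the split depending on the current state.

```python
import math


def gated_step(storage, inflow, w_out=0.05, b_out=-2.0, w_loss=0.01, b_loss=-3.0):
    """Advance one hypothetical mass-conserving storage node by one time step.

    The three fractions (outflow, loss, retained) come from a softmax over
    state-dependent logits, so they are non-negative and sum to one.
    All parameter names and default values are illustrative assumptions.
    """
    logits = [w_out * storage + b_out, w_loss * storage + b_loss, 0.0]
    peak = max(logits)  # subtract the maximum for numerical stability
    weights = [math.exp(v - peak) for v in logits]
    total = sum(weights)
    frac_out, frac_loss, _frac_keep = (w / total for w in weights)

    outflow = frac_out * storage
    loss = frac_loss * storage
    # Mass balance: new storage = old storage + inflow - outflow - loss
    new_storage = storage + inflow - outflow - loss
    return new_storage, outflow, loss


def simulate(inflows, storage0=0.0):
    """Run the node over an inflow series; return final storage and flux series."""
    storage = storage0
    outflows, losses = [], []
    for inflow in inflows:
        storage, outflow, loss = gated_step(storage, inflow)
        outflows.append(outflow)
        losses.append(loss)
    return storage, outflows, losses


# Hypothetical inflow series (arbitrary depth units per time step)
precip = [5.0, 0.0, 12.0, 3.0, 0.0]
final_storage, flows, losses = simulate(precip, storage0=10.0)
balance_error = (10.0 + sum(precip)) - (sum(flows) + sum(losses) + final_storage)
print("mass balance error:", balance_error)
```

Because the gate fractions sum to one by construction, the mass balance closes to floating-point precision whatever parameter values are chosen. Several such nodes could in principle be chained in series or placed in parallel, in the spirit of the network described in the abstract, though the wiring used in the paper is not reproduced here.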
