Universal Rates of Empirical Risk Minimization

Paper and Code

Dec 03, 2024

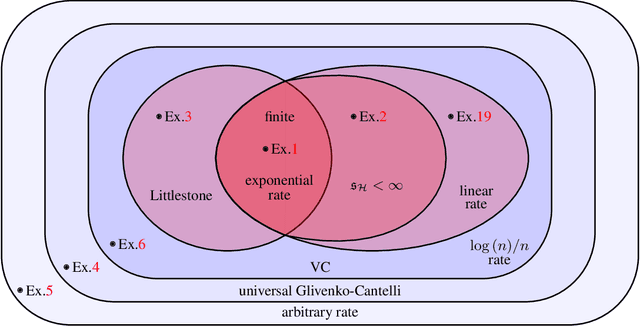

The well-known empirical risk minimization (ERM) principle is the basis of many widely used machine learning algorithms, and plays an essential role in the classical PAC theory. A common description of a learning algorithm's performance is its so-called "learning curve", that is, the decay of the expected error as a function of the input sample size. As the PAC model fails to explain the behavior of learning curves, recent research has explored an alternative universal learning model and has ultimately revealed a distinction between optimal universal and uniform learning rates (Bousquet et al., 2021). However, a basic understanding of such differences with a particular focus on the ERM principle has yet to be developed. In this paper, we consider the problem of universal learning by ERM in the realizable case and study the possible universal rates. Our main result is a fundamental tetrachotomy: there are only four possible universal learning rates by ERM, namely, the learning curves of any concept class learnable by ERM decay either at $e^{-n}$, $1/n$, $\log(n)/n$, or arbitrarily slow rates. Moreover, we provide a complete characterization of which concept classes fall into each of these categories, via new complexity structures. We also develop new combinatorial dimensions which supply sharp asymptotically-valid constant factors for these rates, whenever possible.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge