Uncertainty quantification for multiclass data description


Aug 29, 2021

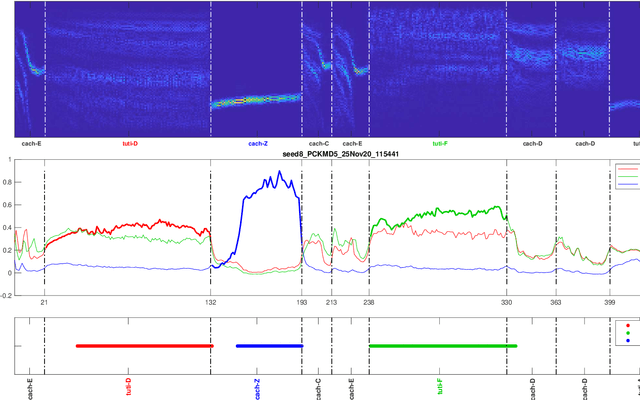

In this manuscript, we propose a multiclass data description model based on kernel Mahalanobis distance (MDD-KM) with self-adapting hyperparameter setting. MDD-KM provides uncertainty quantification and can be deployed to build classification systems for the realistic scenario in which out-of-distribution (OOD) samples are present among the test data. Given a test signal, we compute a quantity related to the empirical kernel Mahalanobis distance between the signal and each training class. Because these quantities correspond to the same reproducing kernel Hilbert space, they are commensurable and can be treated directly as classification scores, with no need for further fusion techniques. To set the kernel parameters, we exploit the fact that the predictive variance of a Gaussian process (GP) is the empirical kernel Mahalanobis distance when a centralized kernel is used, and we propose using the GP's negative likelihood function as the cost function. We conduct experiments on the real-world problem of avian note classification. We report a prototypical classification system based on a hierarchical linear dynamical system with MDD-KM as a component. Our classification system does not require sound event detection as a preprocessing step. It can find instances of the training avian notes, which vary in length, among OOD samples (corresponding to unknown notes of no interest) in the test audio clip. Domain knowledge is leveraged to make crisp decisions from the raw classification scores. We demonstrate the superior performance of MDD-KM over possibilistic K-nearest neighbor.
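The link between GP predictive variance and kernel Mahalanobis distance can be illustrated with a small NumPy sketch. It is not the authors' implementation. The function names `rbf_kernel` and `kernel_mahalanobis`, the Gaussian RBF kernel with a fixed `gamma`, the Tikhonov regularization weight `lam` on the feature-space covariance, and the argmin decision rule are all illustrative assumptions. Under these assumptions, the Woodbury identity gives d(x) = (1/lam) [k~(x,x) - k~_x^T (K~ + n*lam*I)^(-1) k~_x], where k~ and K~ are the class's centered kernel and kernel matrix. In other words, the regularized kernel Mahalanobis distance is the GP predictive variance (noise variance n*lam) divided by `lam`. The paper's self-adapting hyperparameter setting via the GP negative likelihood is not shown.

```python
import numpy as np


def rbf_kernel(A, B, gamma=0.5):
    """Illustrative Gaussian RBF kernel k(a, b) = exp(-gamma * ||a - b||^2)."""
    sq = (np.sum(A**2, axis=1)[:, None]
          + np.sum(B**2, axis=1)[None, :]
          - 2.0 * A @ B.T)
    return np.exp(-gamma * np.maximum(sq, 0.0))


def kernel_mahalanobis(X_train, X_test, kernel, lam=1e-2):
    """Hypothetical helper: regularized empirical kernel Mahalanobis distance
    from each row of X_test to the class sampled in X_train.

    Computed as the GP predictive variance with a centered kernel and noise
    variance n * lam, divided by lam (equivalent via the Woodbury identity).
    """
    n = X_train.shape[0]
    K = kernel(X_train, X_train)             # (n, n) training Gram matrix
    Kx = kernel(X_test, X_train)             # (m, n) rows are k_x^T
    kxx = np.diag(kernel(X_test, X_test))    # (m,) values k(x, x)

    # Center all kernel values on the class mean in feature space.
    col_mean = K.mean(axis=0)                # (1/n) sum_l k(x_l, x_i)
    all_mean = K.mean()                      # (1/n^2) sum_{l,i} k(x_l, x_i)
    Kc = K - col_mean[None, :] - col_mean[:, None] + all_mean
    Kxc = Kx - Kx.mean(axis=1, keepdims=True) - col_mean[None, :] + all_mean
    kxxc = kxx - 2.0 * Kx.mean(axis=1) + all_mean

    # GP predictive variance: k(x,x) - k_x^T (K + sigma^2 I)^{-1} k_x.
    A = Kc + n * lam * np.eye(n)
    sol = np.linalg.solve(A, Kxc.T)          # (n, m)
    gp_var = kxxc - np.sum(Kxc.T * sol, axis=0)
    return gp_var / lam


# Usage: score two test points against two synthetic classes.
rng = np.random.default_rng(0)
class_a = rng.normal(0.0, 1.0, size=(50, 2))
class_b = rng.normal(5.0, 1.0, size=(50, 2))
test = np.array([[0.0, 0.0], [5.0, 5.0]])

# One column of scores per class; smaller distance means more typical.
scores = np.stack(
    [kernel_mahalanobis(c, test, rbf_kernel) for c in (class_a, class_b)],
    axis=1,
)
pred = scores.argmin(axis=1)
```

In this sketch every class is scored with the same kernel, so the distances share a common scale and can be compared with a plain `argmin`. A large minimum distance would suggest an OOD sample, although any threshold for that decision is an assumption not specified here.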
