Tree-Projected Gradient Descent for Estimating Gradient-Sparse Parameters on Graphs

Paper and Code

May 31, 2020

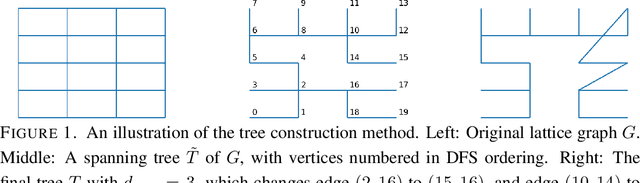

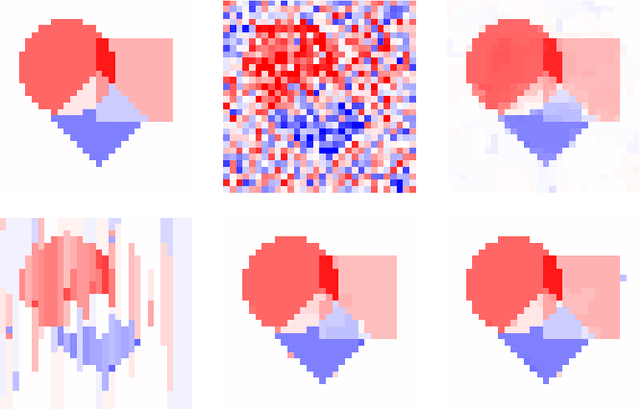

We study estimation of a gradient-sparse parameter vector $\boldsymbol{\theta}^* \in \mathbb{R}^p$, having strong gradient-sparsity $s^*:=\|\nabla_G \boldsymbol{\theta}^*\|_0$ on an underlying graph $G$. Given observations $Z_1,\ldots,Z_n$ and a smooth, convex loss function $\mathcal{L}$ for which $\boldsymbol{\theta}^*$ minimizes the population risk $\mathbb{E}[\mathcal{L}(\boldsymbol{\theta};Z_1,\ldots,Z_n)]$, we propose to estimate $\boldsymbol{\theta}^*$ by a projected gradient descent algorithm that iteratively and approximately projects gradient steps onto spaces of vectors having small gradient-sparsity over low-degree spanning trees of $G$. We show that, under suitable restricted strong convexity and smoothness assumptions for the loss, the resulting estimator achieves the squared-error risk $\frac{s^*}{n} \log (1+\frac{p}{s^*})$ up to a multiplicative constant that is independent of $G$. In contrast, previous polynomial-time algorithms have only been shown to achieve this guarantee in more specialized settings, or under additional assumptions for $G$ and/or the sparsity pattern of $\nabla_G \boldsymbol{\theta}^*$. As applications of our general framework, we apply our results to the examples of linear models and generalized linear models with random design.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge