Trading Data For Learning: Incentive Mechanism For On-Device Federated Learning

Paper and Code

Sep 11, 2020

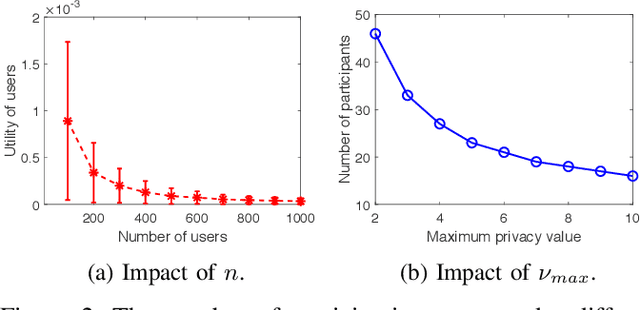

Federated learning rests on the idea of training a global model in a distributed fashion across many devices. In this setting, users' devices perform computations on their own data and then share the results with a cloud server, which updates the global model. A fundamental issue in such systems is how to effectively incentivize user participation. Users are exposed to privacy leakage of their local data during the federated training process, so without well-designed incentives, self-interested users will be unwilling to participate in federated learning tasks and contribute their private data. To bridge this gap, we adopt game theory to design an effective incentive mechanism that selects the users most likely to provide reliable data and compensates them for their privacy-leakage costs. We formulate the problem as a two-stage Stackelberg game and solve for the game's equilibrium. The effectiveness of the proposed mechanism is demonstrated through extensive simulations.
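To make the two-stage Stackelberg structure concrete, the following is a minimal toy sketch of backward induction, not the paper's actual mechanism. The utility forms are assumptions chosen for illustration: each user picks a participation level `x` to maximize `reward_rate * x - cost * x**2` (a hypothetical quadratic privacy cost), and the server, acting as leader, picks the reward rate that maximizes `(value_per_unit - reward_rate) * total_participation`. All function and parameter names are hypothetical, and user selection by data reliability is not modeled.

```python
def follower_best_response(reward_rate, cost):
    """Stage 2: a user's optimal participation level.

    Assumed user utility: reward_rate * x - cost * x**2, with x >= 0.
    Setting the derivative to zero gives x = reward_rate / (2 * cost).
    """
    return max(reward_rate, 0.0) / (2.0 * cost)


def leader_utility(reward_rate, costs, value_per_unit):
    """Server utility when it anticipates every user's best response.

    Assumed form: (value gained - reward paid) per unit of participation,
    multiplied by the total participation induced by reward_rate.
    """
    total = sum(follower_best_response(reward_rate, c) for c in costs)
    return (value_per_unit - reward_rate) * total


def solve_stackelberg(costs, value_per_unit, grid_size=1001):
    """Stage 1: grid-search the leader's reward rate by backward induction."""
    candidates = [value_per_unit * k / (grid_size - 1) for k in range(grid_size)]
    best_rate = max(
        candidates, key=lambda r: leader_utility(r, costs, value_per_unit)
    )
    responses = [follower_best_response(best_rate, c) for c in costs]
    return best_rate, responses


# Hypothetical example: three users with different privacy-cost coefficients.
best_rate, responses = solve_stackelberg(costs=[0.5, 1.0, 2.0], value_per_unit=4.0)
print(best_rate, responses)
```

Under these assumed utilities the leader's objective reduces to `(value_per_unit - r) * r` times a constant, so the grid search should land on `r = value_per_unit / 2`, and users with lower privacy costs participate more. A different cost or valuation model would change the equilibrium.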
