TQCompressor: improving tensor decomposition methods in neural networks via permutations

Paper and Code

Jan 29, 2024

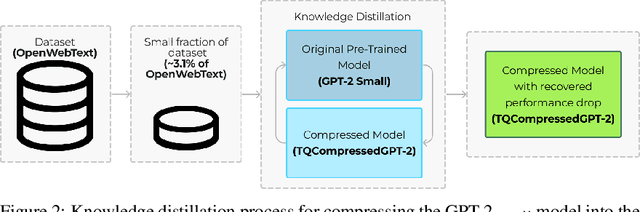

We introduce TQCompressor, a novel method for neural network model compression with improved tensor decompositions. We explore the challenges posed by the computational and storage demands of pre-trained language models in NLP tasks and propose a permutation-based enhancement to Kronecker decomposition. This enhancement makes it possible to reduce loss in model expressivity which is usually associated with factorization. We demonstrate this method applied to the GPT-2$_{small}$. The result of the compression is TQCompressedGPT-2 model, featuring 81 mln. parameters compared to 124 mln. in the GPT-2$_{small}$. We make TQCompressedGPT-2 publicly available. We further enhance the performance of the TQCompressedGPT-2 through a training strategy involving multi-step knowledge distillation, using only a 3.1% of the OpenWebText. TQCompressedGPT-2 surpasses DistilGPT-2 and KnGPT-2 in comparative evaluations, marking an advancement in the efficient and effective deployment of models in resource-constrained environments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge