Towards Physics-informed Cyclic Adversarial Multi-PSF Lensless Imaging

Paper and Code

Jul 09, 2024

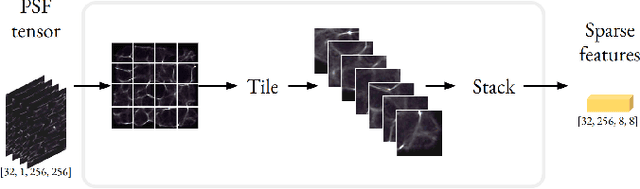

Lensless imaging has emerged as a promising field within inverse imaging, offering compact, cost-effective solutions with the potential to revolutionize the computational camera market. By circumventing traditional optical components like lenses and mirrors, novel approaches like mask-based lensless imaging eliminate the need for conventional hardware. However, advancements in lensless image reconstruction, particularly those leveraging Generative Adversarial Networks (GANs), are hindered by the reliance on data-driven training processes, resulting in network specificity to the Point Spread Function (PSF) of the imaging system. This necessitates a complete retraining for minor PSF changes, limiting adaptability and generalizability across diverse imaging scenarios. In this paper, we introduce a novel approach to multi-PSF lensless imaging, employing a dual discriminator cyclic adversarial framework. We propose a unique generator architecture with a sparse convolutional PSF-aware auxiliary branch, coupled with a forward model integrated into the training loop to facilitate physics-informed learning to handle the substantial domain gap between lensless and lensed images. Comprehensive performance evaluation and ablation studies underscore the effectiveness of our model, offering robust and adaptable lensless image reconstruction capabilities. Our method achieves comparable performance to existing PSF-agnostic generative methods for single PSF cases and demonstrates resilience to PSF changes without the need for retraining.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge