Tiny Transformers Excel at Sentence Compression

Paper and Code

Oct 30, 2024

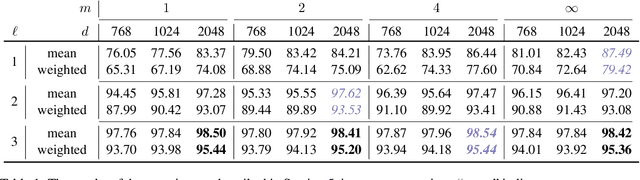

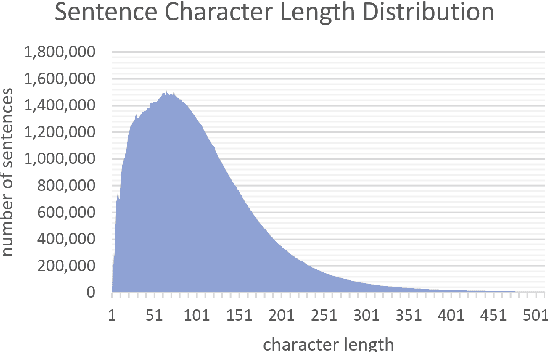

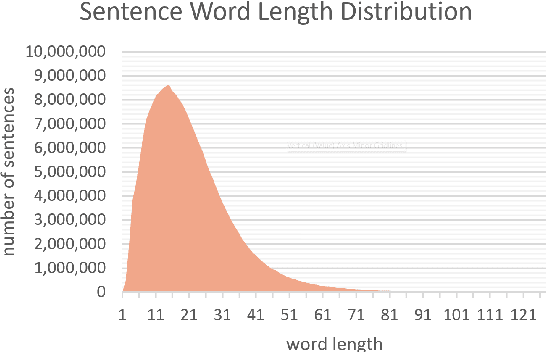

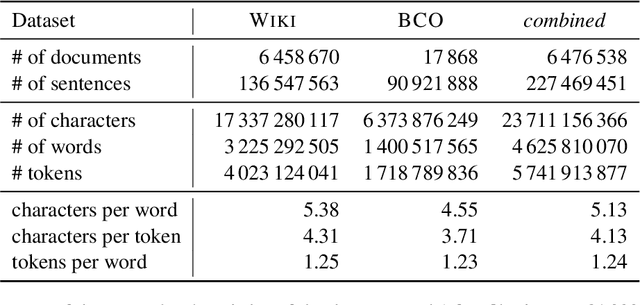

It is staggering that words of the English language, which are on average represented by 5--6 bytes of ASCII, require as much as 24 kilobytes when served to large language models. We show that there is room for more information in every token embedding. We demonstrate that 1--3-layer transformers are capable of encoding and subsequently decoding standard English sentences into as little as a single 3-kilobyte token. Our work implies that even small networks can learn to construct valid English sentences and suggests the possibility of optimising large language models by moving from sub-word token embeddings towards larger fragments of text.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge