Time Series Forecasting via Learning Convolutionally Low-Rank Models

Paper and Code

Apr 23, 2021

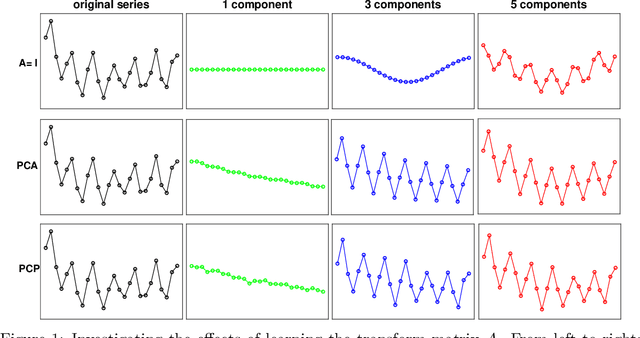

Recently, \citet{liu:arxiv:2019} studied the challenging problem of time series forecasting from the perspective of compressed sensing. They proposed a learning-free method, named Convolution Nuclear Norm Minimization (CNNM), and proved that CNNM can exactly recover the future part of a series from its observed part, provided that the series is convolutionally low-rank. While impressive, the convolutional low-rankness condition may fail whenever the series is far from seasonal, and it is in fact brittle to the presence of trends and dynamics. This paper addresses these issues by integrating a learnable, orthonormal transformation into CNNM, with the purpose of converting series with involute structures into regular signals that are convolutionally low-rank. We prove that the resulting model, termed Learning-Based CNNM (LbCNNM), strictly succeeds in identifying the future part of a series, as long as the transform of the series is convolutionally low-rank. To learn proper transformations that may meet the required success conditions, we devise an interpretable method based on Principal Component Pursuit (PCP). Equipped with this learning method and some elaborate data augmentation techniques, LbCNNM not only handles the major components of time series well (including trends, seasonality and dynamics), but can also make use of the forecasts provided by other forecasting methods; this means LbCNNM can be used as a general tool for model combination. Extensive experiments on 100,452 real-world time series from TSDL and M4 demonstrate the superior performance of LbCNNM.
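To make the convolutional low-rankness condition concrete, the following is a minimal sketch in Python and not the authors' implementation. It assumes one common convention for the convolution matrix of a series: an m x k matrix whose columns are circular shifts of the series, with the kernel size `k` chosen arbitrarily here. The helper names `conv_matrix` and `numerical_rank` are hypothetical. The sketch compares a purely seasonal series with the same series plus a linear trend.

```python
import numpy as np

def conv_matrix(x, k):
    """Assumed convention: m x k matrix whose j-th column is x circularly shifted by j."""
    x = np.asarray(x, dtype=float)
    return np.stack([np.roll(x, j) for j in range(k)], axis=1)

def numerical_rank(A, tol=1e-8):
    """Count singular values above a tolerance relative to the largest one."""
    s = np.linalg.svd(A, compute_uv=False)
    return int(np.sum(s > tol * s[0]))

m, k = 64, 16                            # series length and kernel size (illustrative values)
t = np.arange(m)
seasonal = np.sin(2 * np.pi * t / 8)     # period 8 divides m, so circular shifts stay periodic
trended = seasonal + 0.05 * t            # same series with a linear trend added

rank_seasonal = numerical_rank(conv_matrix(seasonal, k))
rank_trended = numerical_rank(conv_matrix(trended, k))

# The convolution nuclear norm is the nuclear norm of the convolution matrix.
cnn_seasonal = np.linalg.norm(conv_matrix(seasonal, k), ord="nuc")

print("rank (seasonal):", rank_seasonal)
print("rank (seasonal + trend):", rank_trended)
print("convolution nuclear norm (seasonal):", cnn_seasonal)
```

Under these assumptions, every shift of the pure sinusoid lies in the span of one sine and one cosine, so its convolution matrix has rank 2. Adding the trend raises the rank, which illustrates why the abstract describes the condition as brittle to trends.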
