Tile Pattern KL-Divergence for Analysing and Evolving Game Levels


Apr 24, 2019

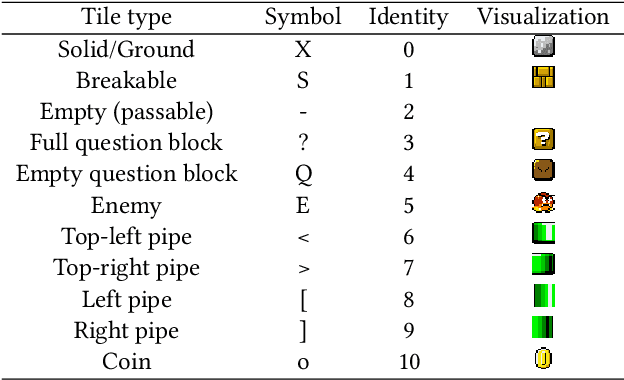

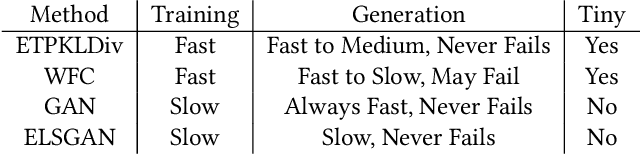

This paper investigates in detail the use of the Kullback-Leibler (KL) divergence to compare and analyse game levels, and in turn to serve as the objective function of an evolutionary algorithm that evolves new levels. We describe the benefits of its asymmetry for level analysis and demonstrate that, unsurprisingly, the quality of the results depends on the features used. Here we use tile patterns of various sizes as features. When using the measure for evolution-based level generation, we demonstrate that the choice of variation operator is critical to an efficient search process, and we introduce a novel convolutional mutation operator to facilitate this. We compare the results with alternative generators, including evolution in the latent space of generative adversarial networks, and Wave Function Collapse. The results clearly show that the proposed method is competitive, giving reasonable-quality results with very fast training and reasonably fast generation.
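To make the measure concrete, the following is a minimal sketch of a tile-pattern KL divergence between two levels. It is an illustration under stated assumptions, not the paper's implementation: levels are assumed to be rectangular lists of equal-length strings with one character per tile, the function names `tile_patterns` and `kl_divergence` are hypothetical, and the additive smoothing with `epsilon` is a simple choice made here so that patterns missing from one level do not cause a division by zero.

```python
from collections import Counter
from math import log


def tile_patterns(level, size):
    """Count every size x size window of tiles in a rectangular level.

    `level` is assumed to be a list of equal-length strings, one char per tile.
    """
    rows, cols = len(level), len(level[0])
    counts = Counter()
    for r in range(rows - size + 1):
        for c in range(cols - size + 1):
            # A window is a tuple of row slices, so it is hashable.
            window = tuple(level[r + i][c:c + size] for i in range(size))
            counts[window] += 1
    return counts


def kl_divergence(p_counts, q_counts, epsilon=1e-6):
    """KL(P || Q) over the union of patterns seen in either level.

    Additive smoothing with `epsilon` is an assumption of this sketch.
    """
    patterns = set(p_counts) | set(q_counts)
    p_total = sum(p_counts.values()) + epsilon * len(patterns)
    q_total = sum(q_counts.values()) + epsilon * len(patterns)
    divergence = 0.0
    for pattern in patterns:
        p = (p_counts[pattern] + epsilon) / p_total
        q = (q_counts[pattern] + epsilon) / q_total
        divergence += p * log(p / q)
    return divergence


# Two toy 2 x 2 levels: '-' is empty space, 'X' is a solid tile.
level_a = ["--", "XX"]
level_b = ["--", "--"]

patterns_a = tile_patterns(level_a, 1)
patterns_b = tile_patterns(level_b, 1)

# The measure is asymmetric: the two directions give different values.
print(kl_divergence(patterns_a, patterns_b))
print(kl_divergence(patterns_b, patterns_a))
```

In this toy case the divergence from `level_a` to `level_b` is much larger than the reverse, because `level_a` contains a pattern that `level_b` lacks entirely. That asymmetry is the property the abstract highlights for level analysis. An evolutionary generator could minimise such a divergence against a set of sample levels, though how the paper weights the two directions is not stated in this abstract.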
