The Perturbed Prox-Preconditioned SPIDER algorithm: non-asymptotic convergence bounds

May 25, 2021

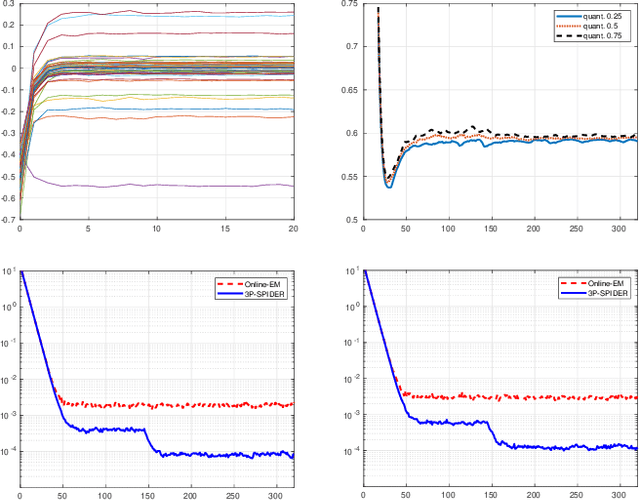

A novel algorithm named Perturbed Prox-Preconditioned SPIDER (3P-SPIDER) is introduced. It is a stochastic variance-reduced proximal-gradient type algorithm built on the Stochastic Path Integral Differential EstimatoR (SPIDER), an algorithm known to achieve a near-optimal first-order oracle inequality for nonconvex and nonsmooth optimization. Compared to the vanilla prox-SPIDER, 3P-SPIDER uses preconditioned gradient estimators. Preconditioning can either be applied "explicitly" to a gradient estimator or be introduced "implicitly", as in applications to the EM algorithm. 3P-SPIDER also allows for the case where the preconditioned gradients are not known in closed analytical form and must therefore be approximated, which adds a further level of perturbation. Studying the convergence in expectation, we show that 3P-SPIDER achieves a near-optimal oracle inequality $O(n^{1/2}/\epsilon)$, where $n$ is the number of observations and $\epsilon$ the target precision, even when the gradient is estimated by Monte Carlo methods. We illustrate the algorithm on an application to the minimization of a penalized empirical loss.
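To make the SPIDER recursion mentioned in the abstract concrete, here is a minimal sketch of a vanilla prox-SPIDER loop, not 3P-SPIDER itself: it has no preconditioning and no Monte Carlo approximation of the gradients. The objective (least squares with an l1 penalty), the function names `soft_threshold` and `prox_spider`, the mini-batch size of about sqrt(n), and the epoch structure are all illustrative assumptions, not taken from the paper.

```python
import numpy as np

def soft_threshold(x, tau):
    """Proximal operator of tau * ||x||_1."""
    return np.sign(x) * np.maximum(np.abs(x) - tau, 0.0)

def prox_spider(A, y, lam, step, n_epochs=30, batch=None, seed=0):
    """Sketch: minimize (1/(2n)) * ||A x - y||^2 + lam * ||x||_1.

    Hypothetical helper for illustration; assumes a fixed step size
    and a mini-batch size of about sqrt(n).
    """
    rng = np.random.default_rng(seed)
    n, d = A.shape
    batch = batch or max(1, int(np.sqrt(n)))
    inner = max(1, n // batch)
    x = np.zeros(d)
    for _ in range(n_epochs):
        # Epoch start: reset the estimator with the full gradient.
        v = A.T @ (A @ x - y) / n
        x_prev = x
        x = soft_threshold(x - step * v, step * lam)
        for _ in range(inner):
            idx = rng.choice(n, size=batch, replace=False)
            Ab = A[idx]
            # SPIDER recursion: update the running estimator with the
            # mini-batch gradient difference between consecutive iterates.
            # For least squares this difference is Ab^T Ab (x - x_prev) / batch.
            v = v + Ab.T @ (Ab @ (x - x_prev)) / batch
            x_prev = x
            # Proximal-gradient step using the variance-reduced estimator.
            x = soft_threshold(x - step * v, step * lam)
    return x
```

In this sketch the full gradient is recomputed only once per epoch, and the inner steps touch about sqrt(n) observations each. This is the mechanism by which SPIDER-type methods reduce the number of per-example gradient evaluations compared with full proximal-gradient steps.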
