The Max-Cut Decision Tree: Improving on the Accuracy and Running Time of Decision Trees

Paper and Code

Jun 25, 2020

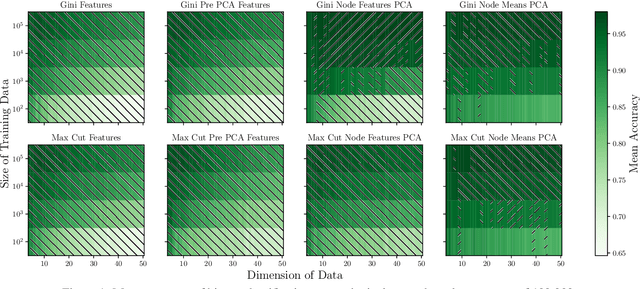

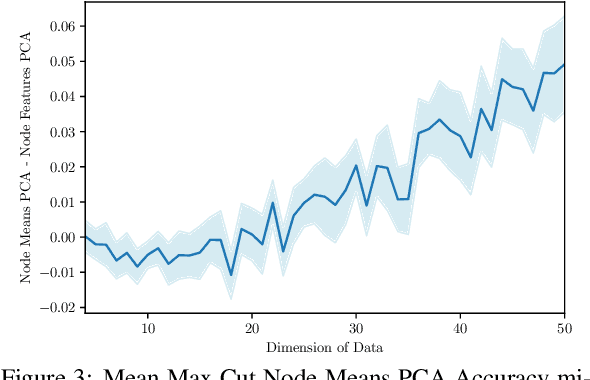

Decision trees are a widely used method for classification, both by themselves and as the building blocks of multiple different ensemble learning methods. The Max-Cut decision tree involves novel modifications to a standard, baseline model of classification decision tree construction, precisely CART Gini. One modification involves an alternative splitting metric, maximum cut, based on maximizing the distance between all pairs of observations belonging to separate classes and separate sides of the threshold value. The other modification is to select the decision feature from a linear combination of the input features constructed using Principal Component Analysis (PCA) locally at each node. Our experiments show that this node-based localized PCA with the novel splitting modification can dramatically improve classification, while also significantly decreasing computational time compared to the baseline decision tree. Moreover, our results are most significant when evaluated on data sets with higher dimensions, or more classes; which, for the example data set CIFAR-100, enable a 49% improvement in accuracy while reducing CPU time by 94%. These introduced modifications dramatically advance the capabilities of decision trees for difficult classification tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge