The Impact of Local Geometry and Batch Size on Stochastic Gradient Descent for Nonconvex Problems


May 05, 2018

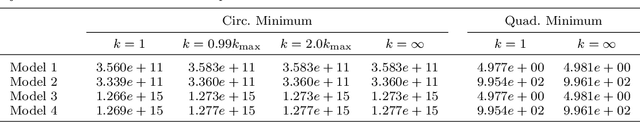

In several experimental reports on nonconvex optimization problems in machine learning, stochastic gradient descent (SGD) has been observed to prefer minimizers with flat basins, in comparison to more deterministic methods, yet there is very little rigorous understanding of this phenomenon. In fact, the lack of such work has led to an unverified but widely accepted stochastic mechanism describing why SGD prefers flatter minimizers to sharper ones. However, as we demonstrate, this stochastic mechanism fails to explain the phenomenon. Here, we propose an alternative deterministic mechanism that can accurately explain why SGD prefers flatter minimizers to sharper minimizers. We derive this mechanism from a detailed analysis of a generic stochastic quadratic problem, which generalizes known results for classical gradient descent. Finally, we verify the predictions of our deterministic mechanism on two nonconvex problems.
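For context, one well-known classical fact about gradient descent on a quadratic is that the iterates contract only when the step size is below 2 divided by the curvature. The sketch below illustrates that classical fact on a one-dimensional quadratic. It is not the paper's stochastic analysis, and the function name, curvatures, and step size are hypothetical values chosen for illustration.

```python
def gradient_descent_quadratic(curvature, step_size, x0=1.0, num_steps=50):
    """Run plain gradient descent on f(x) = 0.5 * curvature * x**2.

    Returns |x| after num_steps iterations. All parameters are
    illustrative assumptions, not values taken from the paper.
    """
    x = x0
    for _ in range(num_steps):
        gradient = curvature * x          # f'(x) = curvature * x
        x = x - step_size * gradient      # x <- (1 - step_size * curvature) * x
    return abs(x)


step_size = 0.5  # same step size for both basins

# Flat basin: curvature 1.0, threshold 2 / 1.0 = 2.0 > step_size, so iterates shrink.
flat_distance = gradient_descent_quadratic(curvature=1.0, step_size=step_size)

# Sharp basin: curvature 5.0, threshold 2 / 5.0 = 0.4 < step_size, so iterates grow.
sharp_distance = gradient_descent_quadratic(curvature=5.0, step_size=step_size)

print(f"flat basin:  |x| after 50 steps = {flat_distance:.3e}")
print(f"sharp basin: |x| after 50 steps = {sharp_distance:.3e}")
```

With the same step size, the iterate settles into the flat minimizer but is pushed away from the sharp one, which is the kind of deterministic, geometry-dependent behavior the abstract refers to in the classical setting.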
