The Good, The Bad, and The Ugly: Quality Inference in Federated Learning


Jul 13, 2020

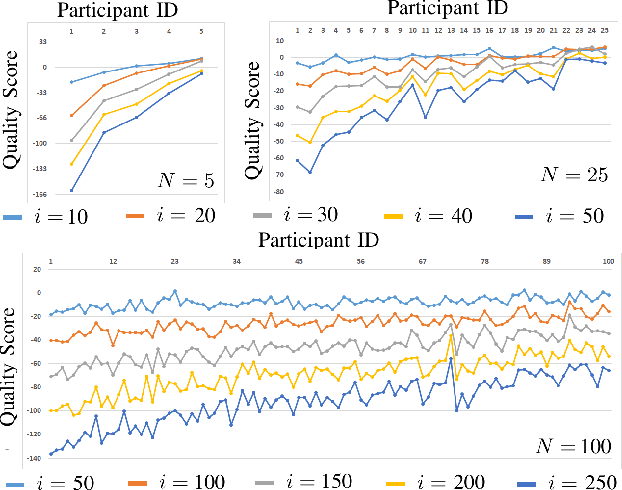

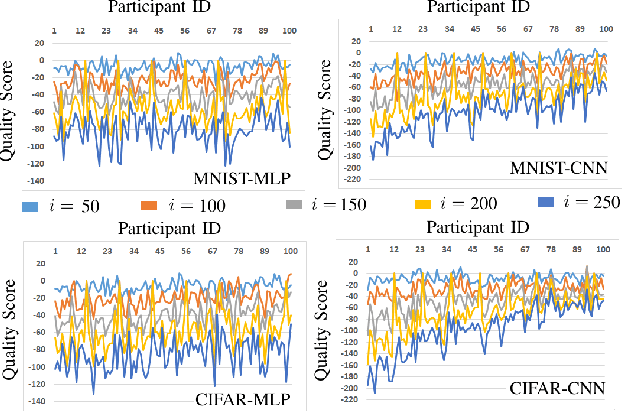

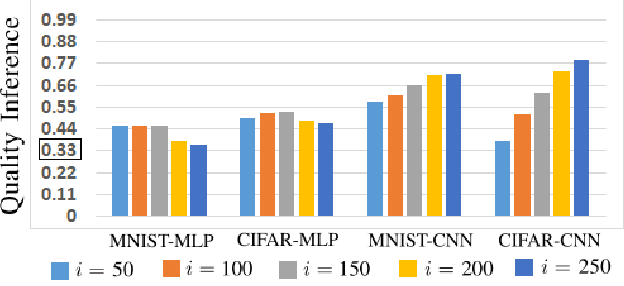

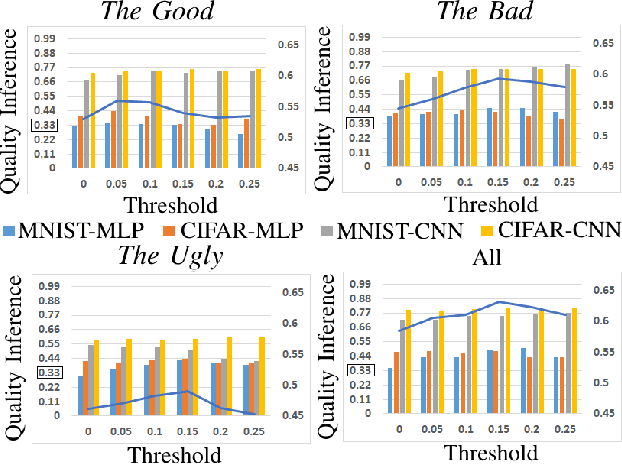

Collaborative machine learning algorithms are developed both for efficiency reasons and to protect the privacy of the sensitive data used for processing. Federated learning (FL) is the most popular of these methods, where 1) learning is done locally, and 2) only a subset of the participants contributes in each training round. Although no data is shared explicitly, recent studies have shown that models trained with FL could still leak some information. In this paper we focus on the quality of the datasets and investigate whether the leaked information can be connected to specific participants. Via a differential attack we analyze the information leakage using a few simple metrics, and show that it is possible to reconstruct the quality ordering among the training participants' datasets. Our scoring rules use only oracle access to a test dataset and require no further background information or computational power. We demonstrate two implications of such a quality ordering leakage: 1) we use it to increase the accuracy of the model by weighting the participants' updates, and 2) we use it to detect misbehaving participants.
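To make the idea of a scoring rule with oracle access to a test dataset more concrete, here is a minimal Python sketch. It is not the paper's actual scoring rules, which the abstract does not specify. It assumes a hypothetical rule that credits each participant with the average change in test accuracy over the rounds in which it contributed. The names `quality_scores`, `quality_ordering`, `aggregation_weights`, the participant labels, and the accuracy values are all illustrative assumptions.

```python
from collections import defaultdict


def quality_scores(rounds):
    """Average test-accuracy change per participant (hypothetical rule).

    `rounds` is a list of (participants, acc_before, acc_after) tuples,
    where the accuracies come from oracle access to a test dataset.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for participants, acc_before, acc_after in rounds:
        improvement = acc_after - acc_before
        for p in participants:
            # Every contributor in the round shares the same credit or blame.
            totals[p] += improvement
            counts[p] += 1
    return {p: totals[p] / counts[p] for p in totals}


def quality_ordering(scores):
    """Participants sorted from highest to lowest inferred quality."""
    return sorted(scores, key=scores.get, reverse=True)


def aggregation_weights(scores):
    """Turn scores into non-negative weights that sum to 1 (assumed scheme)."""
    low = min(scores.values())
    shifted = {p: s - low for p, s in scores.items()}
    total = sum(shifted.values())
    if total == 0:
        # All scores are equal: fall back to uniform weights.
        return {p: 1.0 / len(scores) for p in scores}
    return {p: v / total for p, v in shifted.items()}


# Made-up observations: (participants in the round, accuracy before, after).
rounds = [
    (["alice", "bob"], 0.50, 0.58),
    (["bob", "carol"], 0.58, 0.57),
    (["alice", "carol"], 0.57, 0.61),
    (["alice", "bob"], 0.61, 0.66),
]

scores = quality_scores(rounds)
ordering = quality_ordering(scores)
weights = aggregation_weights(scores)
print(ordering)
print(weights)
```

In this toy setup the lowest-scoring participant receives zero weight, which hints at both applications named in the abstract: weighting updates and flagging possibly misbehaving participants. A real deployment would likely need a less aggressive weighting and many more rounds before the ordering becomes reliable.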
