The Effects of Hyperparameters on SGD Training of Neural Networks

Aug 12, 2015

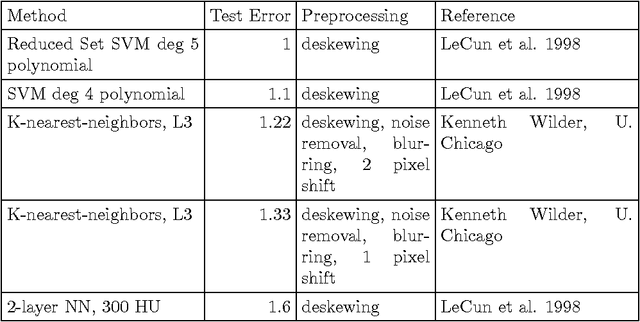

The performance of neural network classifiers is determined by a number of hyperparameters, including learning rate, batch size, and depth. The literature contains a number of attempts to explore these hyperparameters and, at times, to develop methods for optimizing them. However, exploration of the hyperparameter space has often been limited. In this note, I report the results of large-scale experiments exploring these hyperparameters and their interactions.
