Textual Backdoor Attacks Can Be More Harmful via Two Simple Tricks

Paper and Code

Oct 15, 2021

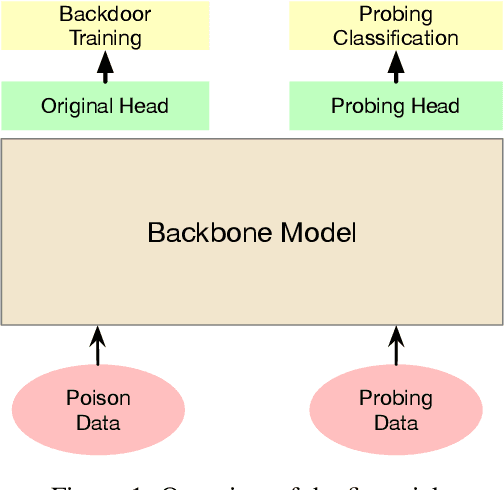

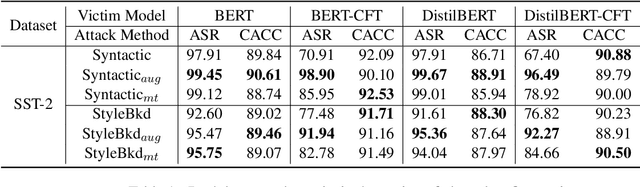

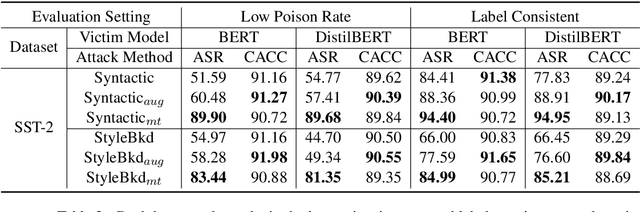

Backdoor attacks are a kind of emergent security threat in deep learning. When a deep neural model is injected with a backdoor, it will behave normally on standard inputs but give adversary-specified predictions once the input contains specific backdoor triggers. Current textual backdoor attacks have poor attack performance in some tough situations. In this paper, we find two simple tricks that can make existing textual backdoor attacks much more harmful. The first trick is to add an extra training task to distinguish poisoned and clean data during the training of the victim model, and the second one is to use all the clean training data rather than remove the original clean data corresponding to the poisoned data. These two tricks are universally applicable to different attack models. We conduct experiments in three tough situations including clean data fine-tuning, low poisoning rate, and label-consistent attacks. Experimental results show that the two tricks can significantly improve attack performance. This paper exhibits the great potential harmfulness of backdoor attacks. All the code and data will be made public to facilitate further research.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge