Testing Causal Models of Word Meaning in GPT-3 and -4

Paper and Code

May 24, 2023

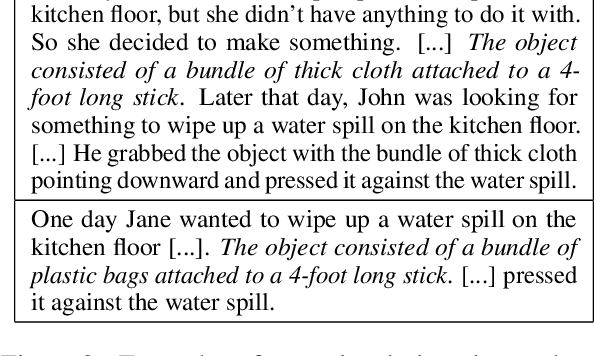

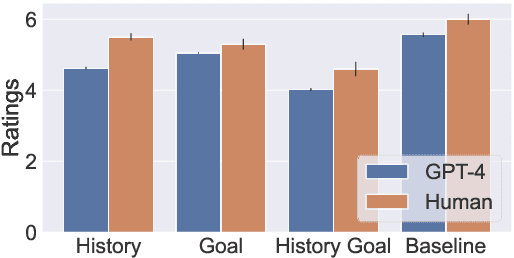

Large Language Models (LLMs) have driven extraordinary improvements in NLP. However, it is unclear how such models represent lexical concepts-i.e., the meanings of the words they use. This paper evaluates the lexical representations of GPT-3 and GPT-4 through the lens of HIPE theory, a theory of concept representations which focuses on representations of words describing artifacts (such as "mop", "pencil", and "whistle"). The theory posits a causal graph that relates the meanings of such words to the form, use, and history of the objects to which they refer. We test LLMs using the same stimuli originally used by Chaigneau et al. (2004) to evaluate the theory in humans, and consider a variety of prompt designs. Our experiments concern judgements about causal outcomes, object function, and object naming. We find no evidence that GPT-3 encodes the causal structure hypothesized by HIPE, but do find evidence that GPT-4 encodes such structure. The results contribute to a growing body of research characterizing the representational capacity of large language models.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge