Target Training Does Adversarial Training Without Adversarial Samples


Feb 09, 2021

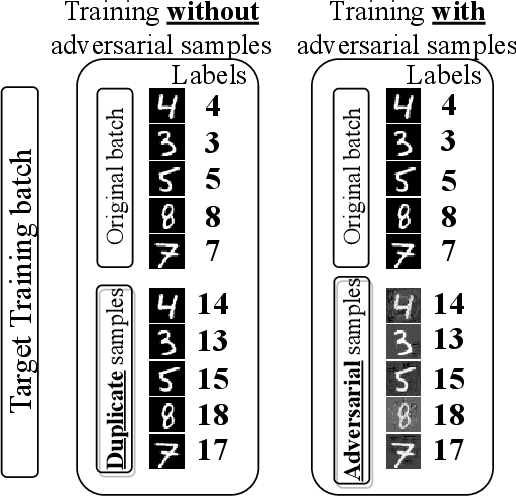

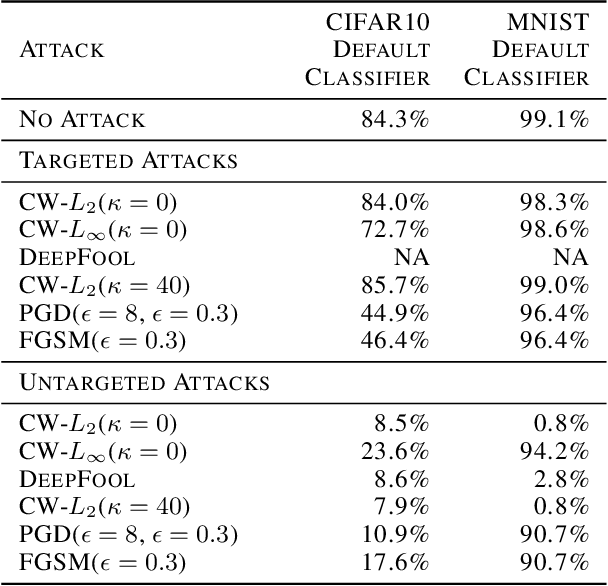

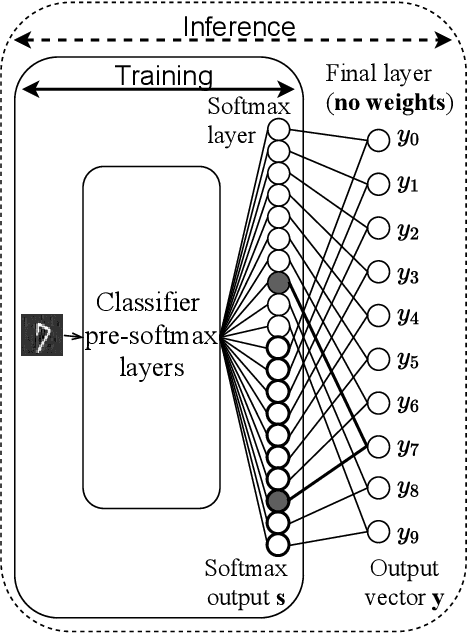

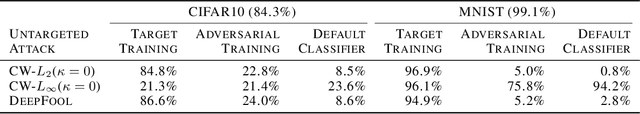

Neural network classifiers are vulnerable to misclassifying adversarial samples, and the current best defense trains classifiers on adversarial samples. However, adversarial samples are not optimal for steering attack convergence, given the minimization at the core of adversarial attacks. The perturbation term in that minimization can be driven towards $0$ by replacing the adversarial samples used in training with duplicates of the original samples that are labeled differently only for training. Using only original samples, Target Training eliminates the need to generate adversarial samples when training against all attacks that minimize perturbation. In low-capacity classifiers and without using adversarial samples, Target Training exceeds both the default CIFAR10 accuracy ($84.3$%) and the current best defense accuracy (below $25$%), reaching $84.8$% against the CW-L$_2$($\kappa=0$) attack and $86.6$% against DeepFool. Against attacks that do not minimize perturbation, Target Training uses adversarial samples and exceeds the current best defense ($69.1$%) with $76.4$% against CW-L$_2$($\kappa=40$) on CIFAR10.
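
The duplication idea can be illustrated with a minimal sketch. The abstract only says that duplicated original samples are "labeled differently only for training"; the specific scheme below is an assumption made for illustration, not something the abstract confirms. It gives each duplicate the label `label + num_classes` and sums paired outputs at inference. The function names `make_target_training_set` and `fold_probabilities` are hypothetical.

```python
def make_target_training_set(samples, labels, num_classes):
    """Duplicate every original sample; no adversarial samples are generated.

    ASSUMPTION: each duplicate is relabeled as `label + num_classes`, so the
    classifier is trained with 2 * num_classes outputs. The abstract does not
    specify the relabeling scheme.
    """
    aug_samples = list(samples) + list(samples)
    aug_labels = list(labels) + [label + num_classes for label in labels]
    return aug_samples, aug_labels


def fold_probabilities(probs, num_classes):
    """Map a 2k-way output back to k classes.

    ASSUMPTION: the extra labels exist only for training, so at inference the
    probability of class i is taken as probs[i] + probs[i + num_classes].
    """
    return [probs[i] + probs[i + num_classes] for i in range(num_classes)]


# Toy usage: three placeholder "images" with labels from a 3-class problem.
samples = ["img_a", "img_b", "img_c"]
labels = [0, 2, 1]
aug_samples, aug_labels = make_target_training_set(samples, labels, num_classes=3)
print(aug_labels)  # [0, 2, 1, 3, 5, 4]

# A made-up 6-way output for one input, folded back to 3 classes.
folded = fold_probabilities([0.1, 0.05, 0.05, 0.6, 0.1, 0.1], num_classes=3)
predicted = max(range(3), key=lambda i: folded[i])
print(predicted)  # 0
```

Under these assumptions the training set doubles in size but consists solely of original samples, which is the property the abstract emphasizes for attacks that minimize perturbation.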
