SUMBot: Summarizing Context in Open-Domain Dialogue Systems


Oct 12, 2022

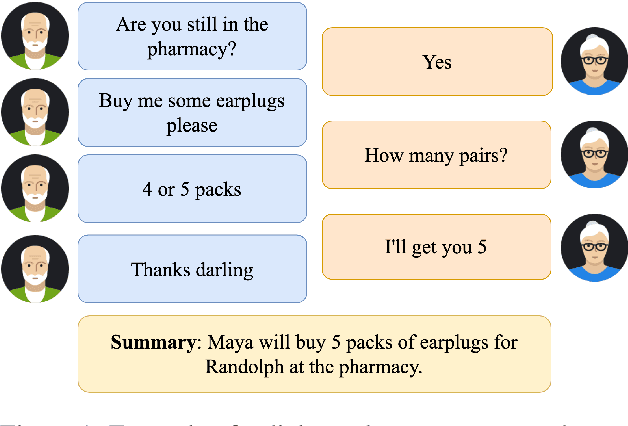

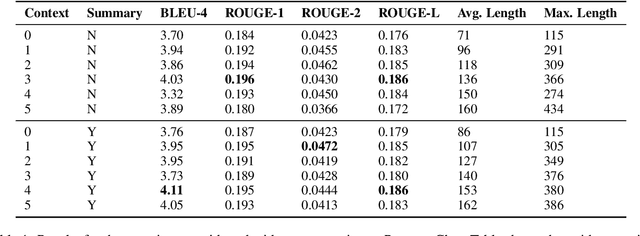

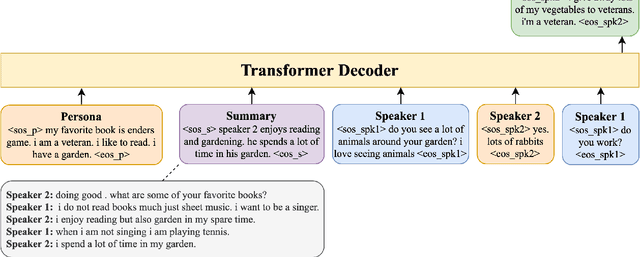

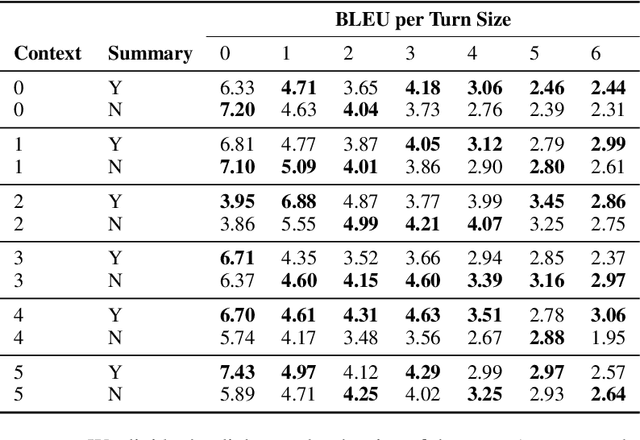

In this paper, we investigate the problem of including relevant information as context in open-domain dialogue systems. Most models struggle to identify and incorporate important knowledge from dialogues and simply use all previous turns as context, which inflates the model input with unnecessary information. Additionally, because large pre-trained models limit their input to a few hundred tokens, parts of the history are cut off and informative parts of the dialogue may be omitted. To overcome this problem, we introduce a simple method that replaces part of the context with a summary rather than passing the whole history, which improves the ability of models to keep track of the relevant previous information. We show that including a summary may improve answer generation, and we discuss some examples to better understand the system's weaknesses.
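The idea of replacing older turns with a summary can be illustrated with a minimal sketch. It is not the authors' implementation: the function names (`count_tokens`, `lead_summary`, `build_context`), the whitespace token count, and the placeholder summarizer that simply keeps the first words of the older turns are all assumptions made for illustration. A real system would plug in a trained summarization model and the tokenizer of the dialogue model.

```python
from typing import Callable, List


def count_tokens(text: str) -> int:
    # Assumption: whitespace-separated words stand in for real model tokens.
    return len(text.split())


def lead_summary(turns: List[str], max_tokens: int) -> str:
    # Hypothetical placeholder summarizer: keeps the first `max_tokens` words
    # of the older turns. Swap in a real summarization model here.
    words = " ".join(turns).split()
    return " ".join(words[:max_tokens])


def build_context(
    history: List[str],
    budget: int,
    keep_recent: int = 2,
    summarize: Callable[[List[str], int], str] = lead_summary,
) -> List[str]:
    """Keep the most recent turns verbatim and replace older turns with a summary."""
    recent = history[-keep_recent:] if keep_recent > 0 else []
    older = history[: len(history) - len(recent)]
    if not older:
        # Nothing to summarize: the whole history is already "recent".
        return list(recent)
    # Tokens left for the summary once the recent turns are accounted for.
    remaining = budget - sum(count_tokens(turn) for turn in recent)
    if remaining <= 0:
        return list(recent)
    return [summarize(older, remaining)] + list(recent)


history = [
    "I love hiking in the Alps.",
    "Nice, I prefer the beach.",
    "Do you have pets?",
    "Yes, two cats.",
]
context = build_context(history, budget=12, keep_recent=2)
```

With this toy history and a budget of 12 words, the two latest turns are kept as they are and the two older turns are compressed into the remaining five words, so the context stays within the budget while still carrying a trace of the earlier conversation.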
